
Key Takeaways

Industry experience suggests that more than 70% of Virtual Metrology (VM) deployments lose significant accuracy within 3 months of going live. The root causes are rarely algorithmic. They are systemic: training data bias, missing features, post-PM model drift, chamber-to-chamber variation, and sensor calibration decay. With automated model refresh mechanisms such as those in NeuroBox E3200, production VM systems can hold MAPE (mean absolute percentage error) below 3% and R² above 0.92 over the long term. Source: Moore Solution Technology

Introduction: Your VM Model Worked Great in Testing — So Why Is It Failing in Production?

If you already understand the fundamentals of Virtual Metrology, you know the promise: use equipment process data to predict wafer quality in real time, reduce physical metrology sampling, and accelerate fab throughput.

But here is what actually happens in most fabs: the VM model delivers impressive results during offline validation, then steadily loses accuracy once deployed on the production line. MAPE creeps from 2% to 8%. R² slides from 0.95 to 0.70. Process engineers lose trust, and the model quietly gets abandoned.

This is not an edge case — it is the norm. Industry experience suggests that more than 70% of VM projects follow this “peak at launch” trajectory. The algorithms are rarely the problem. XGBoost, LSTM, Random Forest — they all produce strong offline metrics. The real killers are five systemic pitfalls hidden in the data pipeline, feature engineering, and operational maintenance.

This article dissects each pitfall in detail: what it looks like, how to diagnose it, and how to fix it. Whether you are deploying your first VM model or troubleshooting an existing one, this is the practical guide to making VM actually work in production.

Pitfall 1: Training Data Bias — Building Models on “Golden Wafers” Only

Symptoms

The model achieves R² > 0.95 on the test set but shows systematic prediction bias once deployed. Predictions cluster around “normal” values even when the process is clearly drifting. When particle counts spike or temperature shifts occur, the model fails to reflect these abnormalities — it keeps predicting as if everything is fine.

Root Cause

The training dataset suffers from selection bias. The most common mistake: engineers export only “good wafer” data from the MES, filtering out all OOS (Out of Spec) results and edge cases. The model has only ever seen the ideal operating envelope and has zero knowledge of abnormal process states.

A related variant is temporal bias: training on data from only the most recent 2-3 weeks, which happen to be the “honeymoon period” right after a preventive maintenance (PM) event when the chamber is in pristine condition. The model never learns what a degraded chamber looks like.

Diagnosis

- Compare the distribution of training data vs. actual production data for key FDC (fault detection and classification) parameters (RF power, gas flow, pressure). If the training distribution is narrower, you have selection bias.

- Check the proportion of OOS samples in your training set. If it is significantly lower than the actual production defect rate, the data has been over-cleaned.

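- Run Kolmogorov-Smirnov tests between training and production feature distributions. A p-value below 0.05 indicates a distribution mismatch. With large wafer counts even negligible shifts become statistically significant, so also check the size of the KS statistic itself.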
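The distribution check above can be sketched in plain Python. This is a minimal illustration, not a replacement for a statistics library: the function names are hypothetical, and the decision uses the standard large-sample critical value for the two-sample KS test rather than an exact p-value.

```python
import math
from bisect import bisect_right


def ks_statistic(train, prod):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(train), sorted(prod)
    d = 0.0
    # The largest gap between two step functions occurs at an observed value.
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def ks_critical(n, m, alpha=0.05):
    """Large-sample critical value for the two-sample KS test."""
    c = math.sqrt(-0.5 * math.log(alpha / 2))  # about 1.358 for alpha = 0.05
    return c * math.sqrt((n + m) / (n * m))


def distributions_differ(train, prod, alpha=0.05):
    """Return (mismatch_flag, ks_statistic) for one feature."""
    d = ks_statistic(train, prod)
    return d > ks_critical(len(train), len(prod), alpha), d
```

Run it once per FDC feature, for example on RF power from the training set versus the last week of production. Reporting the statistic alongside the flag helps separate a real shift from one that is merely statistically detectable.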

Fix

- Expand data coverage: Include at least 3 complete PM cycles in training data, capturing the full equipment lifecycle from fresh chamber to degraded state.

- Retain abnormal samples: Keep 5-10% of boundary and OOS samples. The model needs to learn what “bad” looks like to correctly predict it.

- Use stratified sampling: Ensure balanced representation of different equipment states — post-PM ramp, steady state, and pre-PM degradation.

- Implement distribution drift detection: Continuously compare incoming production data against training distributions and trigger retraining when divergence exceeds a threshold.
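As a rough sketch of the stratified sampling step, the snippet below draws the same number of wafers from each equipment state. The record layout and the `state` labels are assumptions made for illustration; a real pipeline would derive the state from PM logs and wafer counts.

```python
import random
from collections import defaultdict


def stratified_sample(records, key, per_stratum, seed=0):
    """Draw up to `per_stratum` records from each distinct value of `key`."""
    rng = random.Random(seed)  # fixed seed keeps the training set reproducible
    groups = defaultdict(list)
    for rec in records:
        groups[rec[key]].append(rec)
    sample = []
    for state in sorted(groups):
        members = groups[state]
        # Rare states contribute everything they have instead of failing.
        k = min(per_stratum, len(members))
        sample.extend(rng.sample(members, k))
    return sample
```

With labels such as `post_pm`, `steady`, and `pre_pm`, this keeps the abundant steady-state wafers from drowning out the scarce post-PM and pre-PM ones.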

Pitfall 2: Missing Input Features — Starving the Model of Context

Symptoms

Predictions are acceptable most of the time but show periodic, patterned deviations. For example, prediction errors spike every time the upstream process switches recipes. Or prediction bias gradually increases as the wafer count since the last PM grows — the model cannot “see” that the chamber is aging.

Root Cause

Most VM models are built using only the FDC data from the current process step — RF power, pressure, temperature profiles, gas flows. But the final metrology result (film thickness, CD, uniformity) is influenced by much more than just the current step:

- Upstream process data: CD variation from the previous lithography step, surface condition after the preceding clean step — these directly affect current-step outcomes.

- Equipment cumulative state: Wafer count since last PM, accumulated RF hours, cumulative deposition thickness — all indicators of chamber degradation that are invisible in single-wafer FDC data.

- PM cycle position: Where the equipment sits in its maintenance cycle fundamentally changes the FDC-to-metrology mapping.

- Environmental variables: Cleanroom temperature/humidity fluctuations, bulk gas purity, cooling water temperature — these vary seasonally and shift process baselines.

Diagnosis

- Perform residual analysis: Plot prediction residuals (actual minus predicted) against time, upstream parameters, and equipment state variables.

- If residuals correlate with “wafer count since PM” (absolute correlation coefficient > 0.3), chamber degradation features are missing.

- If residuals show step-changes aligned with upstream recipe switches, upstream features are needed.

- Run SHAP analysis on existing features. If many features have near-zero SHAP values, the truly important variables are probably not in the model.
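The residual check can be approximated with a plain Pearson correlation. The sketch below is illustrative only: the context variable names are hypothetical, and the 0.3 cutoff is the rule of thumb from the list above.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def missing_context_candidates(residuals, context, threshold=0.3):
    """Names of context variables whose |r| with the residuals exceeds threshold."""
    return [
        name
        for name, values in context.items()
        if abs(pearson(residuals, values)) > threshold
    ]
```

Any variable this returns is explaining error the model cannot see, which makes it a candidate input feature. A linear correlation will miss step changes, so the time-series plot of residuals is still worth inspecting by eye.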

Fix

- Build a multi-source feature pipeline: Integrate FDC, EES (Equipment Engineering System), MES, and upstream metrology data into a unified feature vector.

- Essential features to include:

  - Wafer count since last PM / wet clean / seasoning run

  - Accumulated RF hours

  - Previous step metrology values or VM predictions

  - Wafer slot position in the cassette (slot effect)

  - Cleanroom environmental readings

- Validate with feature importance: Use Permutation Importance or SHAP to confirm new features actually contribute predictive power.

- Automate the feature pipeline: Ensure all context data flows into the real-time inference path, not just the offline training environment.
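One way to keep training and inference aligned is to build every feature vector through the same function and fail loudly when a context field is absent. The sketch below assumes hypothetical field names (`wafers_since_pm`, `rf_hours`, and so on); the actual schema depends on what your FDC, EES, and MES systems expose.

```python
from dataclasses import dataclass, asdict


@dataclass
class WaferContext:
    """Context beyond single-step FDC data (field names are illustrative)."""
    wafers_since_pm: int
    rf_hours: float
    upstream_cd_nm: float
    slot: int
    cleanroom_temp_c: float


def build_feature_vector(fdc, context, feature_order):
    """Merge FDC readings with context and emit values in a fixed order."""
    merged = {**fdc, **asdict(context)}
    missing = [name for name in feature_order if name not in merged]
    if missing:
        # Refuse to predict rather than silently dropping a feature.
        raise KeyError(f"missing features at inference time: {missing}")
    return [float(merged[name]) for name in feature_order]
```

Storing `feature_order` with the trained model means the real-time path cannot drift away from the offline one without raising an error.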

Pitfall 3: Post-PM Model Drift — The Chamber Changed, But the Model Did Not

Symptoms

The VM model runs well before PM, but prediction errors spike sharply in the first 50-100 wafers after PM completion. MAPE jumps from 3% to over 10%. Errors gradually decrease but may take hundreds or even thousands of wafers to return to pre-PM levels.

Root Cause

Preventive Maintenance is a hard reset of chamber state. Consumable parts are replaced (showerhead, edge ring, ESC), the chamber is cleaned, and conditioning runs re-establish baseline. The physical characteristics of the chamber change fundamentally:

- A new showerhead has different gas hole distribution — flow uniformity changes.

- The chamber wall coating profile returns to its initial state after cleaning.

- The RF matching network may be retuned.

The consequence: identical FDC setpoints (e.g., 1000W RF power) produce different process outcomes before and after PM. The FDC-to-metrology mapping the model learned is no longer valid.

Diagnosis

- Mark all PM events on a time-series plot of VM prediction error. Look for pulse-like error spikes immediately after each PM.

- Compare FDC feature distributions before and after PM for the same recipe. Even with identical setpoints, actual RF reflected power, pressure stability, and temperature ramp rates typically shift.

- Measure the “recovery time” — how many wafers after PM until MAPE returns below the acceptable threshold.
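Recovery time can be measured directly from post-PM wafers that have both a VM prediction and a physical measurement. The sketch below is one possible definition, assuming a trailing 10-wafer window and a 3% MAPE threshold; both values are illustrative.

```python
def rolling_mape(actual, predicted, window):
    """Trailing-window MAPE (%) ending at each wafer index."""
    ape = [abs(a - p) / abs(a) * 100 for a, p in zip(actual, predicted)]
    out = []
    for i in range(len(ape)):
        chunk = ape[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def recovery_wafers(actual, predicted, threshold=3.0, window=10):
    """Wafers after PM until a full trailing window is below threshold.

    Inputs start at the first post-PM wafer. Returns None if the error
    never recovers within the data provided.
    """
    for i, m in enumerate(rolling_mape(actual, predicted, window)):
        if i + 1 >= window and m < threshold:
            return i + 1
    return None
```

Tracking this number across successive PM events shows whether a change to the adaptation strategy actually shortens recovery.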

Fix

- PM-event-triggered model switching: Maintain separate model parameters for “post-PM state” and “steady state.” Automatically switch to the post-PM model when a PM event is detected.

- Rapid online learning: Use the first 20-30 wafers of actual metrology data after PM to fine-tune the model, quickly adapting to the new chamber state.

- Incremental training: Rather than retraining from scratch, perform incremental updates on the existing model — preserving historical knowledge while adapting to the new state.

- Automatic metrology densification post-PM: Mandate 100% inspection for the first 100 wafers after PM. This serves dual purposes: quality assurance and providing labeled data for model updates.
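As a deliberately simplified stand-in for online fine-tuning, the sketch below corrects the base model's output with an exponentially weighted offset learned from post-PM measurements. It is an assumption-laden illustration (hypothetical class name, fixed smoothing factor), not the method any specific product uses, and it only handles a constant shift rather than a changed FDC-to-metrology slope.

```python
class PostPMOffsetCorrector:
    """Adds a learned offset to base-model predictions after a PM event."""

    def __init__(self, alpha=0.3):
        self.alpha = alpha      # weight given to the newest residual
        self.offset = 0.0
        self.n_updates = 0

    def reset(self):
        """Call when a PM event is detected: forget the old chamber state."""
        self.offset = 0.0
        self.n_updates = 0

    def predict(self, base_prediction):
        return base_prediction + self.offset

    def update(self, base_prediction, measured):
        """Fold one physically measured wafer into the offset estimate."""
        residual = measured - base_prediction
        if self.n_updates == 0:
            self.offset = residual  # first wafer: adopt the residual directly
        else:
            self.offset = (1 - self.alpha) * self.offset + self.alpha * residual
        self.n_updates += 1
```

Call `reset()` on the PM event, then `update()` for each of the densely measured post-PM wafers. Once enough labeled wafers have accumulated, the offset can be retired in favor of a proper incremental model update.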

This is a core design principle of NeuroBox E3200’s automatic model refresh mechanism. The system automatically detects PM events from equipment logs, initiates a rapid adaptation workflow during the conditioning phase, and compresses the “recovery period” from hundreds of wafers to under 30.

Pitfall 4: Chamber-to-Chamber Variation — One Model Does Not Fit All

Symptoms

A model trained on Chamber A delivers excellent results (R² > 0.95), but when deployed to Chamber B of the same tool type running identical recipes, R² drops to 0.6-0.7 with systematic prediction offset. The same model shows drastically different error profiles across chambers.

Root Cause

No two chambers are identical — this is a fundamental reality of semiconductor manufacturing. Even same-model, same-vintage equipment exhibits differences:

- Mechanical tolerances: Showerhead-to-wafer gap can vary by 0.5-1mm across chambers.

- Consumable state: Different chambers have different PM schedules and consumable wear profiles.

- Sensor bias: Same-model pressure gauges can differ by 1-2% in absolute reading across chambers.

- Process history: Different chambers have run different recipe mixes, resulting in different chamber wall coating profiles.

These differences mean that identical FDC setpoints produce different process outcomes on different chambers. The FDC-to-metrology mapping learned from Chamber A simply does not hold for Chamber B.

Diagnosis

- Compare FDC feature distributions across chambers for the same recipe — focus on mean shifts and variance differences.

- Add Chamber ID as a categorical feature and measure its SHAP value. If Chamber ID ranks among the top features, cross-chamber variation is significant.

- Calculate MAPE separately for each chamber. If the ratio between the worst and best chamber MAPE exceeds 2x, the problem is severe.
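The per-chamber comparison is simple arithmetic. A minimal sketch, assuming each row carries a chamber identifier, the measured value, and the VM prediction:

```python
from collections import defaultdict


def mape_by_chamber(rows):
    """rows: iterable of (chamber_id, actual, predicted). Returns {chamber: MAPE %}."""
    errors = defaultdict(list)
    for chamber, actual, predicted in rows:
        errors[chamber].append(abs(actual - predicted) / abs(actual) * 100)
    return {c: sum(v) / len(v) for c, v in errors.items()}


def worst_to_best_ratio(mapes):
    """Ratio of the highest to the lowest per-chamber MAPE."""
    best = min(mapes.values())
    worst = max(mapes.values())
    return float("inf") if best == 0 else worst / best
```

A ratio above 2 matches the severity rule in the list above and suggests a single shared model is not serving every chamber equally.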

Fix

- Chamber-specific models: Train and maintain a separate model for each chamber. This is the most effective approach, though it increases operational overhead.

- Transfer learning: Train a base model on pooled data from all chambers, then fine-tune with a small amount of chamber-specific data. This can reduce per-chamber data requirements by 60-80%.

- Chamber matching (statistical alignment): Use normalization techniques (Z-score, quantile matching) to align FDC distributions across chambers before feeding them into a unified model.

- Chamber-aware features: Include Chamber ID, PM history, and accumulated run time as additional model inputs, letting the algorithm learn the inter-chamber offsets.
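Z-score chamber matching can be sketched as below. For brevity the mean and standard deviation are computed from the batch passed in; in production they would more plausibly come from a fixed reference window per chamber and recipe, which is an assumption left out of this example.

```python
import statistics
from collections import defaultdict


def zscore_by_chamber(readings):
    """readings: list of (chamber_id, value). Returns list of (chamber_id, z)."""
    groups = defaultdict(list)
    for chamber, value in readings:
        groups[chamber].append(value)
    # Per-chamber mean and population standard deviation.
    stats = {
        c: (statistics.fmean(v), statistics.pstdev(v)) for c, v in groups.items()
    }
    out = []
    for chamber, value in readings:
        mean, std = stats[chamber]
        z = (value - mean) / std if std > 0 else 0.0
        out.append((chamber, z))
    return out
```

After this step a reading of +1 means "one standard deviation above this chamber's own normal", so a sensor bias between chambers no longer looks like a process difference to the unified model.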

Pitfall 5: Sensor Drift and Calibration Decay — When the Input Data Itself Is Wrong

Symptoms

Prediction error increases slowly and continuously: not a sudden jump, but a gradual creep. Week by week, MAPE ticks up by 0.2-0.3 percentage points. After three months, it has silently grown from 2% to roughly 5-6%. The trend temporarily improves after PM (because sensors get recalibrated) but resumes its upward march shortly after.

Root Cause

VM model inputs come from equipment sensors — pressure gauges, temperature sensors, RF power meters, mass flow controllers. Every sensor degrades over time:

- Pressure gauges: Capacitance diaphragm deforms from plasma contamination and thermal cycling, causing progressive reading drift.

- Thermocouples: Junction oxidation and material degradation increase measurement error over time.

- Mass Flow Controllers (MFCs): Internal valve wear increases the gap between actual flow and setpoint.

- RF power meters: Directional coupler insertion loss changes with aging.

The danger of sensor drift is that it is slow and continuous. It does not trip equipment alarms (which typically only fire at hard limits), but it steadily corrupts the model’s input data. The mathematical relationships in the model are intact, but the numbers being fed in no longer represent the true physical state.

Diagnosis

- Monitor long-term trends for each sensor reading under the same recipe. A 0.1% weekly drift in a sensor mean adds up to roughly 1.2-1.3% over three months (12-13 weeks).

- Perform sensor cross-validation: Compare primary and backup sensor readings, or use physical laws (e.g., PV=nRT) to cross-check consistency between pressure, temperature, and flow.

- Calculate the rolling correlation between VM prediction residuals and individual sensor readings. A steadily increasing correlation with a particular sensor indicates that sensor is drifting.
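To put a number on slow drift, a least-squares slope through the weekly sensor means is usually enough. The sketch below expresses the slope as a percentage of the first week's mean; the function name and that normalization are choices made for this example.

```python
def weekly_drift_percent(weekly_means):
    """Least-squares slope of weekly means, in % of the first week's mean per week."""
    n = len(weekly_means)
    if n < 2:
        raise ValueError("need at least two weekly means")
    mx = (n - 1) / 2                      # mean of week indices 0..n-1
    my = sum(weekly_means) / n
    num = sum((x - mx) * (y - my) for x, y in enumerate(weekly_means))
    den = sum((x - mx) ** 2 for x in range(n))
    slope = num / den                     # sensor units per week
    return slope / weekly_means[0] * 100
```

A result of 0.1 corresponds to the 0.1% weekly drift discussed above. Compute it per sensor and per recipe so that recipe changes are not mistaken for drift.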

Fix

- Sensor health monitoring: Track SPC control charts for every critical sensor — monitor trends, not just limit violations.

- Scheduled sensor calibration: Define calibration intervals by sensor type (e.g., pressure gauges every 3 months, thermocouples every 6 months). Trigger VM model fine-tuning after each calibration event.

- Input data quality gating: Add a data quality check layer before VM inference. If any sensor reading deviates from its baseline beyond a threshold, flag the prediction as “low confidence.”

- Robust modeling: Use model architectures that are less sensitive to input noise — neural networks with dropout, Huber loss functions, or ensemble methods that average out sensor errors.
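Input quality gating can be as small as a relative-deviation check ahead of inference. In the sketch below the 2% tolerance and the sensor names are placeholders; real limits would be set per sensor from its SPC history.

```python
def gate_inputs(readings, baselines, max_rel_dev=0.02):
    """Return (status, offenders) for one wafer's sensor readings.

    status is "ok" or "low_confidence"; offenders lists the sensors whose
    relative deviation from baseline exceeds max_rel_dev.
    """
    offenders = []
    for name, value in readings.items():
        base = baselines[name]
        if abs(value - base) / abs(base) > max_rel_dev:
            offenders.append(name)
    status = "low_confidence" if offenders else "ok"
    return status, offenders
```

The prediction is still produced in both cases. The flag lets downstream consumers decide whether to trust it or route the wafer to physical metrology.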

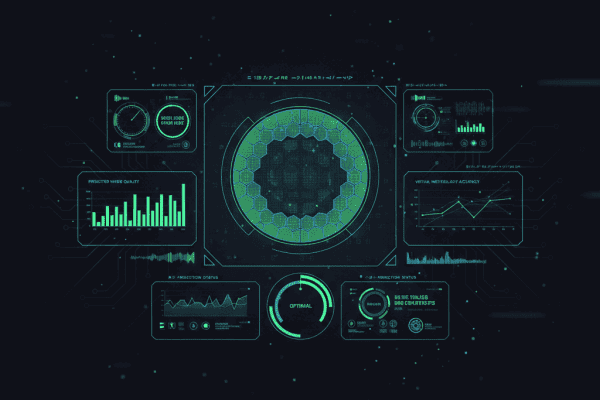

Monitoring VM Accuracy in Production: A Practical Framework

Identifying pitfalls is step one. Continuous monitoring is what makes VM reliable over the long term. Here is a field-proven monitoring framework.

Core Metrics

| Metric | Calculation | Green Threshold | Red Threshold |
|---|---|---|---|
| R² | Rolling 100-wafer window | > 0.90 | < 0.80 |
| MAPE | Rolling 100-wafer window | < 3% | > 5% |
| Residual Mean | Rolling 50-wafer mean | \|mean\| < 1 sigma | \|mean\| > 2 sigma |
| Prediction Coverage | % of actuals within 95% PI | > 93% | < 88% |
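The first two metrics in the table can be computed as follows. This is a sketch with hypothetical function names; the "watch" label for values between the green and red thresholds is an assumption, since the table only defines the two ends.

```python
def mape(actual, predicted):
    """Mean absolute percentage error, in percent."""
    total = sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted))
    return total / len(actual) * 100


def r_squared(actual, predicted):
    """Coefficient of determination; NaN if the actuals do not vary."""
    mean = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean) ** 2 for a in actual)
    return 1 - ss_res / ss_tot if ss_tot > 0 else float("nan")


def latest_window_metrics(actual, predicted, window=100):
    """Metrics over the most recent `window` measured wafers."""
    a, p = actual[-window:], predicted[-window:]
    return {"mape": mape(a, p), "r2": r_squared(a, p)}


def classify(metric, value):
    """Map a metric value to green / watch / red using the table thresholds."""
    if metric == "r2":
        return "green" if value > 0.90 else "red" if value < 0.80 else "watch"
    if metric == "mape":
        return "green" if value < 3.0 else "red" if value > 5.0 else "watch"
    raise ValueError(f"unknown metric: {metric}")
```

Note that R² within a window becomes unstable when the process is tightly controlled and the actual values barely vary, which is one reason to track MAPE alongside it.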

Alert and Auto-Response Protocol

- Yellow alert (Warning): MAPE exceeds 3% for 3 consecutive windows. Action: Notify engineers, increase physical metrology sampling rate.

- Red alert (Alarm): MAPE exceeds 5% or R² falls below 0.80. Action: Automatically revert to 100% physical inspection; initiate model retraining.

- PM event trigger: PM completion detected. Action: Switch to post-PM model, activate rapid learning protocol.
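The protocol above maps naturally onto a small decision function. In this sketch the function name, the action strings, and the choice to let a PM event take priority over the accuracy alerts are assumptions for illustration; the thresholds come from the list above.

```python
def evaluate_alert(mape_windows, latest_r2, pm_completed=False):
    """Return (level, actions) from recent window MAPE values (oldest first)."""
    if pm_completed:
        return "pm_event", ["switch to post-PM model", "activate rapid learning"]
    if mape_windows[-1] > 5.0 or latest_r2 < 0.80:
        return "red", ["revert to 100% physical inspection", "initiate retraining"]
    recent = mape_windows[-3:]
    # Yellow requires three consecutive windows above 3%, not a single blip.
    if len(recent) == 3 and all(m > 3.0 for m in recent):
        return "yellow", ["notify engineers", "increase metrology sampling"]
    return "ok", []
```

Requiring consecutive windows for the yellow alert filters out one-off excursions, while the red alert fires on the first window that crosses its limit.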

NeuroBox E3200’s Automated Refresh Approach

The traditional approach waits until accuracy has clearly degraded before manually retraining — meaning inaccurate predictions have already been applied to hundreds of production wafers. NeuroBox E3200 takes a fundamentally different approach:

- Per-wafer confidence scoring: Every single prediction includes a real-time confidence interval, not just a point estimate. No waiting for batch statistics.

- Predictive refresh: By monitoring input feature distribution drift trends, the system triggers model fine-tuning before accuracy visibly degrades — fixing the problem before it becomes one.

- PM-aware adaptation: Automatic integration with equipment PM logs. When a PM event occurs, the system immediately initiates a dedicated rapid adaptation workflow.

- Closed-loop automation: Data collection, feature engineering, model inference, accuracy monitoring, and automatic retraining form a fully autonomous loop — no manual intervention required.

Conclusion: The Roadmap from “Inaccurate” to “Reliably Accurate”

VM prediction inaccuracy is not a failure of VM technology — it is a failure of engineering implementation. Get these five things right, and your VM system transforms from a promising demo into a production-critical tool:

- Representative training data: Cover full PM cycles, retain abnormal samples, implement distribution monitoring.

- Complete feature sets: FDC alone is not enough. Add equipment cumulative state, upstream data, and environmental variables.

- Rapid post-PM adaptation: Event-triggered model switching with online learning. Recover in 30 wafers, not 300.

- Chamber-specific attention: Never assume one model works across all chambers. Adapt, align, or build dedicated models.

- Sensor health discipline: Scheduled calibration, continuous trend monitoring, input quality gating.

If you are struggling with VM accuracy or planning a new VM deployment and want to avoid these pitfalls, explore how NeuroBox E3200’s automated VM platform keeps models accurate with zero manual intervention — so your engineers can focus on process optimization, not model babysitting.
