- The five failure modes of generic AI content tools

- What B2B content actually needs

- Comparison: prompt wrappers vs AI virtual CMO architecture

- The Jasper-was-fine-in-2023 trap

- What an AI virtual CMO does on day one

If your B2B content from Jasper or Copy.ai sounds like an enthusiastic intern who has never met a customer, that is not a prompt problem. It is an architecture problem. Most AI content tools for B2B are built as prompt wrappers around general-purpose LLMs. They have no idea what your product does, who your customers are, or how your category actually talks. They produce prose. B2B does not need prose; it needs positioning.

I have audited content from roughly 30 B2B SaaS companies in the last 18 months. The pattern is consistent: generic AI tools work fine for blog filler and social captions, then collapse the moment you ask them to write something a buyer would actually read before signing a $50k deal. Here is exactly where they break, and what an AI virtual CMO architecture has to do differently to be more than a toy.

The five failure modes of generic AI content tools

I rank these by how often they kill a content program.


1. No brand corpus, so every article reads like every other tool

Take any Series B SaaS. Their actual voice lives in 200+ customer call transcripts, 40 sales decks, the founder’s LinkedIn archive, the product docs, and a handful of internal Notion pages. A prompt-only tool sees none of that. So you get sentences like “In today’s competitive landscape, businesses must leverage cutting-edge solutions.” That sentence has been written 4 million times. It will not rank, will not get shared, and will not convert.

2. No product knowledge graph, so technical claims drift

My favorite failure: an AI tool wrote a comparison page claiming a fintech client supported “real-time multi-currency settlement.” They do not, and they never have. Their CEO had to issue a correction. This is not a hallucination edge case. It is the default behavior when you ask an LLM to write about a product it has no grounded knowledge of. Generic AI content tools for B2B have no integration with your product roadmap, feature flags, or pricing. They guess, and on technical specifics they guess wrong about 40 to 60 percent of the time.

3. No customer voice, so it never quotes anyone real

The best B2B content always has at least one customer phrase that sounds like it came from an actual call: “we kept getting paged at 3 a.m. for failed reconciliations.” That phrase is not in any LLM’s training set. It is in your Gong recordings. If your content tool cannot pull from there, your articles will never have the texture that builds trust.

4. One platform, one format, so distribution is broken

Most AI tools generate a blog post, then leave you to manually adapt it for LinkedIn, X, Medium, and Reddit. In practice, that adaptation never happens. So the article gets 40 organic visits in month one, dies, and you blame the tool. The actual failure was distribution, not generation.

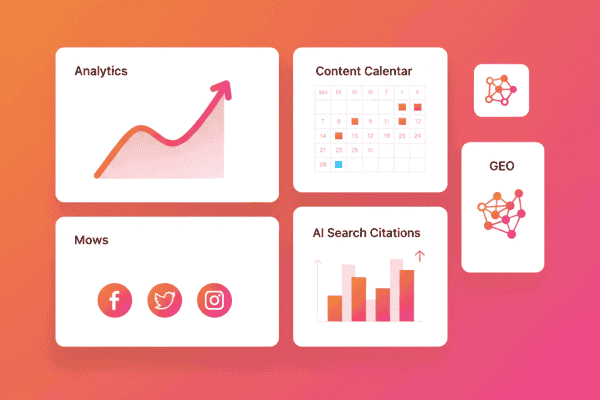

5. No performance loop, so it cannot learn

Generic tools generate. They do not read SERPs, they do not check whether your article got cited by Perplexity, and they do not see which LinkedIn post under your name hit 80k impressions. They are write-only. A real AI virtual CMO system is read-write: it pulls performance signals, attributes them to specific structural choices, and adjusts.
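To make "attributes them to specific structural choices" concrete, here is a minimal Python sketch. It assumes each published article has already been tagged with the structural choices it used and given a single engagement score; the feature names, scores, and the `feature_lift` and `next_brief_features` helpers are hypothetical. It computes a naive difference in means, which shows correlation, not causation.

```python
from statistics import mean

# Hypothetical records: structural choices per article plus one engagement score
# (e.g. 30-day sessions). Real data would come from your analytics export.
ARTICLES = [
    {"id": "a1", "features": {"customer_quote", "numbered_list"}, "score": 820},
    {"id": "a2", "features": {"numbered_list"}, "score": 310},
    {"id": "a3", "features": {"customer_quote", "faq_block"}, "score": 760},
    {"id": "a4", "features": {"faq_block"}, "score": 290},
]


def feature_lift(records):
    """Mean score of articles WITH a feature minus mean score of those WITHOUT it."""
    all_features = set().union(*(r["features"] for r in records))
    lift = {}
    for feat in sorted(all_features):
        with_f = [r["score"] for r in records if feat in r["features"]]
        without_f = [r["score"] for r in records if feat not in r["features"]]
        if with_f and without_f:  # skip features present in all or no articles
            lift[feat] = mean(with_f) - mean(without_f)
    return lift


def next_brief_features(records, top_n=1):
    """Structural choices to favor in the next batch of briefs, best lift first."""
    lift = feature_lift(records)
    return sorted(lift, key=lift.get, reverse=True)[:top_n]
```

With four articles this is noise. A real loop would need far more data, plus some control for topic and keyword difficulty, before it changed any briefs.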

What B2B content actually needs

B2B content has a job. The job is to convince a skeptical, technical buyer that you understand their problem, that your product is credibly differentiated, and that you are safe to choose. To do that job, an AI system has to do four things, none of which a prompt-only tool does well.

Brand corpus retrieval (RAG over your actual writing)

You ingest your top-performing past content, your founder’s LinkedIn archive, your sales calls, and your product docs. Every new article retrieves from this corpus and grounds claims in it. The output starts to sound like you, not like a generic LinkedIn ghost. This is the single highest-leverage architectural decision in B2B AI content.
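A minimal sketch of the retrieval step, in Python. It assumes your corpus is a dict of document id to text; `retrieve`, `grounded_prompt`, and the sample documents are hypothetical. A production system would use an embedding model and a vector index; plain term-count cosine similarity stands in for both here so the example runs on the standard library alone.

```python
import math
import re
from collections import Counter

# Hypothetical corpus: document id -> text (calls, docs, past posts).
CORPUS = {
    "docs-currencies": "Reconciliation supports 22 currencies.",
    "call-017": "We kept getting paged at 3 a.m. for failed reconciliation runs.",
    "blog-hiring": "How we hired our first five engineers.",
}


def tokenize(text):
    """Lowercase word tokens; a stand-in for a real embedding model."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors (Counter returns 0 for missing terms)."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, corpus, k=3):
    """Return ids of up to k corpus documents most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(doc))), doc_id) for doc_id, doc in corpus.items()]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def grounded_prompt(task, corpus, k=3):
    """Build a generation prompt that includes retrieved passages, cited by id."""
    ids = retrieve(task, corpus, k)
    context = "\n".join(f"[{doc_id}] {corpus[doc_id]}" for doc_id in ids)
    return (
        "Write only claims supported by the sources below and cite them by id.\n"
        f"{context}\n\nTask: {task}"
    )
```

The design choice that matters is that the model only sees passages from your own library, each tagged with an id, so every claim in the draft can be traced back to a source.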

Voice fingerprinting at the founder layer

Your CEO’s LinkedIn voice is not your company blog voice. Both are valid. Both should be modeled separately. A real system maintains 3 to 5 distinct voice profiles (founder, technical, marketing, customer-success-led) and routes content to the right one. Generic tools have one voice: blandly enthusiastic.
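A sketch of separate voice profiles with a routing table, in Python. Everything here is hypothetical: the profile names follow the four listed above, but the tone notes, banned phrases, and `(content type, platform)` routes are placeholders. Real profiles would be derived from each author's own writing rather than typed in by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VoiceProfile:
    name: str
    tone: str                   # short style note an editor can check against
    banned_phrases: tuple = ()  # phrases this voice never uses


# Hypothetical profiles; placeholders, not derived from any real author.
PROFILES = {
    "founder": VoiceProfile("founder", "first person, opinionated, short sentences",
                            ("leverage", "cutting-edge", "synergy")),
    "technical": VoiceProfile("technical", "precise, cites docs and numbers",
                              ("revolutionary", "seamless")),
    "marketing": VoiceProfile("marketing", "benefit-led, plain language", ("game-changer",)),
    "customer_success": VoiceProfile("customer_success", "story-led, quotes customers",
                                     ("best-in-class",)),
}

# Hypothetical routing table: (content type, platform) -> profile name.
ROUTES = {
    ("post", "linkedin"): "founder",
    ("article", "blog"): "marketing",
    ("guide", "blog"): "technical",
    ("case_study", "blog"): "customer_success",
}


def route(content_type, platform, default="marketing"):
    """Pick the voice profile for a piece of content, falling back to a default."""
    return PROFILES[ROUTES.get((content_type, platform), default)]


def voice_violations(draft, profile):
    """Banned phrases for this profile that appear in the draft, in profile order."""
    lowered = draft.lower()
    return [p for p in profile.banned_phrases if p in lowered]
```

For example, `route("post", "linkedin")` returns the founder profile, and a draft containing "leverage" would be flagged before it goes out under the CEO's name.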

Platform-specific formatters, not copy-paste

A LinkedIn post is not a paragraph from your blog. A Reddit reply that works in r/devops will get downvoted to oblivion in r/marketing. The system has to understand the cultural and structural rules of each platform. BlogBurst built its publishing layer around this exact problem because we kept watching teams burn out on manual repurposing.
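A sketch of platform-specific formatters as a small registry, in Python. The function names and output layouts are hypothetical, and the 280-character X limit is an assumption for a standard account; check each platform's current limits and norms before relying on them.

```python
# Assumed limit for a standard X post; verify current platform limits before use.
X_MAX_CHARS = 280


def format_x(title, key_points, url):
    """One short post: the sharpest point plus the link, trimmed to fit."""
    budget = X_MAX_CHARS - len(url) - 1  # room left after a space and the URL
    point = key_points[0]
    if len(point) > budget:
        point = point[: budget - 3].rstrip() + "..."
    return f"{point} {url}"


def format_linkedin(title, key_points, url):
    """Hook line, blank line, one point per line, link at the end."""
    body = "\n".join(f"- {p}" for p in key_points)
    return f"{title}\n\n{body}\n\nFull article: {url}"


# Registry of platform-specific formatters; adding a platform means adding a function.
FORMATTERS = {"x": format_x, "linkedin": format_linkedin}


def format_for(platform, title, key_points, url):
    """Dispatch to the platform's formatter instead of copy-pasting the blog text."""
    if platform not in FORMATTERS:
        raise ValueError(f"no formatter for platform: {platform}")
    return FORMATTERS[platform](title, key_points, url)
```

Community-level rules, such as r/devops versus r/marketing, would need finer keys, for example `(platform, community)`, rather than one formatter per platform.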

Structured fact-checking

Before any article ships, claims are verified against three sources: your product knowledge graph (does the feature exist?), public sources (is the statistic real?), and your prior content (have we said something contradictory?). Generic tools skip all three.
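A minimal sketch of the first and third checks, in Python, assuming claims have already been extracted into structured form. The product graph, `Claim`, and `check_claim` are hypothetical; the feature names and the 22-currency figure echo the settlement example above and the teardown later in this article. Public-source verification needs an external lookup and is left out.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical product knowledge graph. In practice it would be synced from
# product docs, feature flags, and pricing rather than written by hand.
PRODUCT_GRAPH = {
    "multi_currency_reconciliation": {"exists": True, "currency_count": 22},
    "real_time_multi_currency_settlement": {"exists": False},
}


@dataclass
class Claim:
    feature: str
    text: str
    currency_count: Optional[int] = None


def check_claim(claim, graph, prior_counts):
    """Return a list of problems; an empty list means the claim passed.

    Checks the product graph and prior content (prior_counts maps
    feature -> number stated in earlier articles).
    """
    facts = graph.get(claim.feature)
    if facts is None:
        return ["feature not in product graph"]
    if not facts["exists"]:
        return ["feature does not exist"]
    issues = []
    if claim.currency_count is not None:
        actual = facts.get("currency_count")
        if actual is not None and claim.currency_count != actual:
            issues.append(f"currency_count: claimed {claim.currency_count}, graph says {actual}")
        stated = prior_counts.get(claim.feature)
        if stated is not None and claim.currency_count != stated:
            issues.append(f"contradicts prior content, which said {stated}")
    return issues
```

A claim of 50 currencies would come back with a mismatch against the graph's 22 and never reach publish.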

Comparison: prompt wrappers vs AI virtual CMO architecture

| Capability | Prompt Wrapper (Jasper / Copy.ai) | AI Virtual CMO (BlogBurst-style) |
|---|---|---|
| Brand corpus grounding | None or shallow | Full RAG over your library |
| Product knowledge | LLM guess | Structured product graph |
| Customer voice | None | Pulled from call transcripts and CRM |
| Platforms supported | One (blog) | 6 to 9, with native formatters |
| Fact-checking | None | Multi-source verification before publish |
| Performance loop | None | SERP and engagement feedback |
| Voice profiles | One | Per-author and per-platform |
| Programmatic SEO | Manual | Templated with data injection |

The Jasper-was-fine-in-2023 trap

A lot of marketers tell me, “We tried Jasper, it was okay, but the output got stale.” That is the trap. Generic AI content tools for B2B were genuinely useful from 2022 to 2023, when LLMs were a novel competitive advantage. By 2024 every competitor had the same tool, so the differentiation collapsed. By 2026, if your AI is not architecturally specific to your business, you are publishing into a sea of identical content. The marginal value of better prompts on a generic tool is now near zero.

What an AI virtual CMO does on day one

A serious deployment looks like this:

- Week 1: Ingest 200+ pieces of your existing content, customer call transcripts, product docs, and ICP descriptions. Build the corpus.

- Weeks 1-2: Define 3 to 5 voice profiles and content pillars. Validate outputs against your top-performing historical articles.

- Weeks 2-4: Ramp from 5 articles per week to 15 to 20, with a human editor reviewing every title and 30 percent of article bodies.

- Month 2: Performance loop kicks in. Articles that win get more variants. Losers get cut.

- Month 3: Programmatic and multi-platform layer comes online. Volume scales without quality drop.
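The Month 2 rule ("articles that win get more variants, losers get cut") can be sketched as a simple triage pass in Python. The `ArticleStats` fields, the thresholds, and the rule that a converting article is never cut are all assumptions for illustration, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass
class ArticleStats:
    slug: str
    sessions_30d: int
    conversions_30d: int


def triage(stats, win_at=500, cut_below=50):
    """Split articles into 'variant' (write more like it), 'keep', and 'cut'.

    Thresholds are hypothetical. An article that converts is never cut,
    because a low-traffic B2B page can still produce pipeline.
    """
    plan = {"variant": [], "keep": [], "cut": []}
    for s in stats:
        if s.sessions_30d >= win_at:
            plan["variant"].append(s.slug)
        elif s.sessions_30d < cut_below and s.conversions_30d == 0:
            plan["cut"].append(s.slug)
        else:
            plan["keep"].append(s.slug)
    return plan
```

Keying the cut decision on conversions as well as traffic keeps the loop from deleting the narrow, high-intent pages that B2B buyers actually read before a deal.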

This is not a configuration of Jasper. It is a different category of product.

Failure modes even good systems have

No platform survives a customer who refuses to feed it data. The most common failure I see is teams that buy an AI virtual CMO, then never upload their corpus, never connect their CRM, and then complain it sounds generic. It will. The system is a function of its inputs. If you treat it like a vending machine, you get vending-machine output.

The second failure: founders who insist on shipping every piece untouched. The right ratio is roughly 70 percent ship-as-is, 30 percent human editor passes. That last 30 percent is what keeps the brand voice from drifting over 6 months of compounding.
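One way to hold the 70/30 split steady is to sample bodies for review deterministically, sketched here in Python. The `in_review_sample` and `review_plan` helpers are hypothetical; the choices to always review titles and to force review of anything that failed fact-checking are assumptions drawn from the rollout and fact-checking steps above.

```python
import hashlib


def in_review_sample(article_id, rate=0.30):
    """Deterministically place roughly `rate` of articles in the human-edit pass.

    Hashing the id (instead of calling random) means the same article always
    gets the same decision, so reruns of the pipeline do not reshuffle reviews.
    """
    digest = hashlib.sha256(article_id.encode("utf-8")).digest()
    # Keep 53 bits so the division is exact and the result stays in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return bucket < rate


def review_plan(article_id, failed_fact_check=False, rate=0.30):
    """Titles are always reviewed; bodies are sampled, plus any that failed checks."""
    return {
        "title": True,
        "body": failed_fact_check or in_review_sample(article_id, rate),
    }
```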

A real teardown of an AI-generated B2B article gone wrong

Let me walk through a specific failure I audited recently. The client is a Series B fintech infrastructure SaaS. They had been using a popular generic AI content tool for 8 months and could not figure out why their organic traffic was flat despite publishing 4 articles per week.

The article in question targeted the keyword “payment reconciliation automation.” The piece ranked at position 47 after 90 days. Here is what we found in the teardown:

- The article opened with a 250-word generic introduction about “the importance of automation in financial operations.” We cut it. Their actual hook was buried in paragraph 4.

- The product description was wrong on three specifics. For example, the article claimed the product supported “multi-currency reconciliation across 50 currencies.” They support 22. The generic tool hallucinated the bigger number because it sounded more impressive.

- Zero customer voice. Their CRM had 80 calls mentioning “reconciliation pain.” None of that texture made it into the article.

- The competition section listed two competitors that had been acquired and shut down. The model was working from outdated training data with no retrieval.

- The structure was identical to 30 prior articles in the same library: H2 hierarchy, transition phrases, and conclusion structure were all templated.
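One way to catch this kind of templating before publish, sketched in Python. It is a heuristic of my own framing, not a standard metric: each H2 heading is reduced to its first two words ("Benefits of X" becomes "benefits of"), and article pairs whose heading skeletons overlap by a Jaccard score of 0.8 or more are flagged. The function names and threshold are hypothetical.

```python
import re
from itertools import combinations

# Markdown H2 lines only ("## Title"); "### Title" does not match.
H2 = re.compile(r"^##\s+(.+)$", re.MULTILINE)


def skeleton(markdown, words=2):
    """Reduce each H2 heading to its first few words, e.g. 'Benefits of X' -> 'benefits of'."""
    return tuple(" ".join(h.lower().split()[:words]) for h in H2.findall(markdown))


def jaccard(a, b):
    """Set overlap of two skeletons; 0.0 when both are empty."""
    sa, sb = set(a), set(b)
    return len(sa & sb) / len(sa | sb) if sa | sb else 0.0


def templated_pairs(library, threshold=0.8):
    """Pairs of article slugs whose H2 skeletons are suspiciously similar."""
    skels = {slug: skeleton(md) for slug, md in library.items()}
    return [
        (x, y)
        for x, y in combinations(sorted(skels), 2)
        if jaccard(skels[x], skels[y]) >= threshold
    ]
```

Run across a whole library, a cluster of flagged pairs is a sign the tool is filling one outline with different keywords.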

We rewrote the article with proper corpus retrieval, fact-checked every claim, and added two anonymized customer phrases pulled from real calls. The rewrite took 90 minutes of AI generation and 30 minutes of human review. It now ranks at position 6 and is cited by Perplexity for queries on its target topic.

This is the gap between prompt-wrapper output and architecture-grounded output. Same word count, same target keyword, completely different commercial result.

What good B2B AI content actually feels like to read

If you read a piece and cannot tell whether a human or AI wrote it, the architecture is doing its job. The signals to look for in your own output:

- Specific numbers from your own data, not generic stats from “a recent study.”

- Customer phrases that sound like real customer phrases, with the awkwardness intact.

- Opinions that take a side and might lose readers who disagree.

- Failure modes acknowledged openly, including for your own product.

- Concrete examples from named or anonymized real situations, not hypotheticals.
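Some of these signals can be linted automatically. The sketch below is a rough, hypothetical heuristic in Python: it flags vague attributions from an assumed phrase list, checks for any digits, and checks for a quoted phrase of at least ten characters. It cannot judge whether an opinion takes a side or whether a quote is real; those still need an editor.

```python
import re

# Hypothetical list of vague attributions; extend it with your own offenders.
VAGUE_SOURCES = ("a recent study", "studies show", "experts agree", "research suggests")


def signal_report(text):
    """Cheap proxies for the signals above; a prompt for an editor, not a verdict."""
    lower = text.lower()
    return {
        "vague_sources": [p for p in VAGUE_SOURCES if p in lower],
        "has_specific_numbers": bool(re.search(r"\d", text)),
        # A quoted phrase of 10+ characters, straight or curly quotes.
        "has_quoted_phrase": bool(re.search(r'["“][^"”]{10,}["”]', text)),
    }
```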

If your AI content has none of these, the system is set up wrong. If it has all of these, it is doing what the best human writers do, with 10x throughput.

What to actually do this week

- Audit your last 20 AI-generated articles for factual claims that are wrong about your own product. Count them.

- Export 50 of your best-performing past articles and 20 customer call transcripts. That is the minimum viable corpus.

- Define 3 voice profiles (founder, company, technical) with 5 example sentences each.

- Trial one AI virtual CMO platform that does corpus retrieval, not just prompting. BlogBurst is one option; there are others. Either way, stop using prompt wrappers for serious B2B content.
