- Why Does Design Review Still Miss So Many Errors Despite Rigorous Processes?

- What Types of Errors Can AI Catch That Human Reviewers Struggle With?

- How Does AI-Assisted Review Change the Reviewer Workflow?

- What Role Does Drawing Comparison Play in Multi-Revision Projects?

- How Should Companies Implement AI-Assisted Design Review?

Key Takeaway

Traditional design review catches 78-85% of errors but consumes 15-22% of total project engineering time. AI-assisted design review using automated comparison, rule checking, and anomaly detection increases error detection rates to 94-97% while reducing review time by 60-70%. The highest-value application is catching cross-disciplinary errors and revision synchronization failures that human reviewers consistently miss.

Why Does Design Review Still Miss So Many Errors Despite Rigorous Processes?

Design review is the last line of defense before engineering drawings are released to procurement and the shop floor. In semiconductor equipment companies, design review typically involves 2-4 senior engineers examining a design package over 2-5 days, checking for specification compliance, spatial feasibility, safety code adherence, and manufacturability. Companies invest heavily in review processes because the cost of catching an error during review ($800-2,500) is orders of magnitude less than catching it during assembly ($5,000-30,000) or at the customer site ($40,000-180,000).


Yet despite this investment, industry data shows that manual review catches only 78-85% of design errors. The remaining 15-22% propagate downstream, driving the rework costs that consume 23-31% of engineering budgets across the industry. Understanding why errors survive review is essential to improving the process.
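To see why a few points of detection rate matter, here is a rough expected-cost sketch. It uses the midpoints of the per-error cost ranges quoted above. The package size (40 errors) and the share of escapes that reach the customer site (20%) are hypothetical inputs chosen for illustration, not industry data.

```python
# Midpoints of the per-error cost ranges quoted above (USD).
COST_REVIEW = (800 + 2_500) / 2          # 1,650
COST_ASSEMBLY = (5_000 + 30_000) / 2     # 17,500
COST_CUSTOMER = (40_000 + 180_000) / 2   # 110,000


def expected_error_cost(errors, detection_rate, share_escapes_to_customer):
    """Expected cost of handling `errors` design errors in one package.

    Errors caught in review cost COST_REVIEW each. Escaped errors are split
    between assembly and the customer site; that split is an assumption.
    """
    caught = errors * detection_rate
    escaped = errors - caught
    to_customer = escaped * share_escapes_to_customer
    to_assembly = escaped - to_customer
    return (caught * COST_REVIEW
            + to_assembly * COST_ASSEMBLY
            + to_customer * COST_CUSTOMER)


# Hypothetical package: 40 errors, 20% of escapes reach the customer site.
for rate in (0.78, 0.85):
    print(f"detection {rate:.0%}: ${expected_error_cost(40, rate, 0.2):,.0f}")
```

Under these assumptions the seven-point gap between 78% and 85% detection is worth roughly $96,000 per package, almost all of it driven by the escapes.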

Attention fatigue. Reviewing a 200-component gas panel assembly involves checking thousands of individual dimensions, clearances, specifications, and relationships. Human attention degrades measurably after 45-60 minutes of concentrated review work. Studies of engineering inspection tasks show that error detection rates drop by 25-35% in the second hour compared to the first hour of continuous review.

Confirmation bias. Reviewers who know the designer tend to trust their work and may unconsciously reduce scrutiny in areas where the designer is known to be strong. Conversely, they may over-focus on known weaknesses and miss errors in areas assumed to be well-handled.

Cross-disciplinary blind spots. A mechanical reviewer checks mechanical constraints. A process reviewer checks process requirements. But errors that span disciplines, such as a valve specification that is mechanically correct but process-incompatible, fall in the gap between reviewers' responsibilities. These cross-disciplinary errors account for approximately 35% of review escapes.

Revision synchronization failures. When a design undergoes multiple revisions during the review process, each revision can introduce new errors while fixing old ones. Tracking what changed between revisions is manually intensive and error-prone. Approximately 28% of review escapes are revision-related errors: changes that were correctly implemented on one drawing but not propagated to related drawings.

What Types of Errors Can AI Catch That Human Reviewers Struggle With?

AI-assisted design review is not about replacing human judgment. It is about augmenting human reviewers with capabilities that address the specific failure modes of manual review:

Exhaustive dimensional checking. AI can verify every dimension, clearance, and tolerance in a design package against specified requirements without fatigue. For a 200-component assembly, this means checking 2,000-4,000 individual dimensional relationships, each compared against the applicable standard or specification. A human reviewer performing spot checks might verify 200-400 of these; the AI checks all of them.
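The core of exhaustive checking is simple once the dimensional relationships have been extracted: loop over every one and compare it with its limits, with no sampling. The sketch below assumes a hypothetical `DimensionCheck` record per relationship (reference name, measured value, optional min/max from the applicable spec); extracting those values from the CAD model is not shown, and the example values are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DimensionCheck:
    """One dimensional relationship from a design package (hypothetical schema)."""
    ref: str                        # human-readable reference for the report
    value_mm: float                 # value measured from the model or drawing
    min_mm: Optional[float] = None  # lower limit from the applicable spec, if any
    max_mm: Optional[float] = None  # upper limit from the applicable spec, if any


def check_all(checks):
    """Return (ref, message) for every check outside its limits."""
    violations = []
    for c in checks:
        if c.min_mm is not None and c.value_mm < c.min_mm:
            violations.append((c.ref, f"{c.value_mm} mm < min {c.min_mm} mm"))
        elif c.max_mm is not None and c.value_mm > c.max_mm:
            violations.append((c.ref, f"{c.value_mm} mm > max {c.max_mm} mm"))
    return violations


package = [
    DimensionCheck("V12 to PT3 clearance", 8.0, min_mm=10.0),
    DimensionCheck("Bracket hole spacing", 50.1, min_mm=49.9, max_mm=50.1),
    DimensionCheck("Tube bend radius", 18.0, min_mm=19.0),
]
for ref, msg in check_all(package):
    print(ref, "->", msg)
```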

Automated revision comparison. This is where tools like DrawingDiff provide exceptional value. When a design is revised, DrawingDiff automatically identifies every change between the old and new versions, classifying each as a geometry change, a dimension change, a note or annotation change, or a BOM change. The comparison is pixel-accurate for drawings and geometry-accurate for 3D models, catching changes that a human reviewer comparing two drawings side-by-side would miss.
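At the data level, revision comparison amounts to a keyed diff with a category attached to each item. The sketch below is not how DrawingDiff works internally; it assumes each revision has already been reduced to a dict mapping a stable item ID to a `(category, value)` pair, where the category is one of the four change types named above. The item IDs and values are hypothetical.

```python
def diff_revisions(old, new):
    """Compare two revisions, each a dict {item_id: (category, value)}.

    category is one of "geometry", "dimension", "note", "bom".
    Returns sorted (item_id, category, change) tuples, where change is
    "added", "removed", or "modified".
    """
    changes = []
    for item_id in sorted(old.keys() | new.keys()):
        if item_id not in new:
            changes.append((item_id, old[item_id][0], "removed"))
        elif item_id not in old:
            changes.append((item_id, new[item_id][0], "added"))
        elif old[item_id] != new[item_id]:
            changes.append((item_id, new[item_id][0], "modified"))
    return changes


rev_a = {
    "PT3.position": ("geometry", (120.0, 45.0)),
    "DIM-14": ("dimension", 85.0),
    "NOTE-2": ("note", "Torque to 12 Nm"),
    "BOM-7": ("bom", ("VLV-100", 1)),
}
rev_b = {
    "PT3.position": ("geometry", (135.0, 45.0)),
    "DIM-14": ("dimension", 85.0),
    "NOTE-2": ("note", "Torque to 14 Nm"),
    "BOM-7": ("bom", ("VLV-100", 1)),
    "BOM-8": ("bom", ("VLV-200", 1)),
}
for change in diff_revisions(rev_a, rev_b):
    print(change)
```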

Consider a practical example: a designer revises a gas panel layout, moving a pressure transducer 15 mm to accommodate a larger valve actuator. This change also requires updating the tubing route to the transducer, the support bracket location, and the panel face cutout position. DrawingDiff identifies the transducer move and flags whether each of the three dependent changes has also been implemented. If the tubing route was updated but the support bracket was not, the tool highlights this incomplete propagation before the design is released.
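The propagation check in this example can be sketched as a lookup: when a "driver" item changes, every item that depends on it should change in the same revision. The dependency map and item IDs below are hypothetical; in practice such a map would have to be authored by engineers or derived from the model's relationships, and this is not a description of DrawingDiff's internals.

```python
# Hypothetical dependency map: when the key item changes, each listed item
# is expected to change in the same revision.
DEPENDENCIES = {
    "PT3.position": ["PT3.tubing_route", "PT3.support_bracket", "panel.cutout_PT3"],
}


def incomplete_propagation(changed_ids, dependencies):
    """Return {driver: [dependents that did not change]} for changed drivers."""
    changed = set(changed_ids)
    gaps = {}
    for driver, dependents in dependencies.items():
        if driver in changed:
            missing = [d for d in dependents if d not in changed]
            if missing:
                gaps[driver] = missing
    return gaps


# The transducer moved; tubing and cutout were updated, the bracket was not.
changed = ["PT3.position", "PT3.tubing_route", "panel.cutout_PT3"]
print(incomplete_propagation(changed, DEPENDENCIES))
# {'PT3.position': ['PT3.support_bracket']}
```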

Standards compliance verification. SEMI S2 alone contains hundreds of specific design requirements for semiconductor equipment. Encoding these as automated checks allows every design to be systematically verified against the full standard, not just the requirements that the reviewer remembers to check. Companies report that automated SEMI S2 checking identifies an average of 3.2 compliance issues per project that were missed by manual review.
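Encoding a standard as automated checks usually means turning each requirement into a named predicate over the design data. The sketch below shows only that structure. The three rules, the height limit, and the design dict are placeholders invented for illustration; they are not SEMI S2 clauses or values, and a real rule set would be encoded from the standard's actual text.

```python
from typing import Callable, NamedTuple


class Rule(NamedTuple):
    rule_id: str
    description: str
    check: Callable[[dict], bool]  # returns True when the design passes


# PLACEHOLDER rules and limit for illustration only; NOT SEMI S2 content.
ASSUMED_MAX_EMO_HEIGHT_MM = 1600

RULES = [
    Rule("R-001", "Every enclosure panel carries a hazard label",
         lambda d: all(p["hazard_label"] for p in d["panels"])),
    Rule("R-002", "EMO button mounted no higher than the assumed limit",
         lambda d: d["emo_height_mm"] <= ASSUMED_MAX_EMO_HEIGHT_MM),
    Rule("R-003", "Gas enclosure has exhaust monitoring",
         lambda d: not d["gas_enclosure"] or d["exhaust_sensor"]),
]


def check_compliance(design, rules):
    """Run every rule against the design and return the IDs of failing rules."""
    return [r.rule_id for r in rules if not r.check(design)]


design = {
    "panels": [{"hazard_label": True}, {"hazard_label": False}],
    "emo_height_mm": 1500,
    "gas_enclosure": True,
    "exhaust_sensor": False,
}
print(check_compliance(design, RULES))
```

Because every rule runs on every design, coverage no longer depends on which requirements a reviewer happens to remember.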

Cross-reference validation. AI can verify consistency across the entire design package: that BOM quantities match the 3D model, that drawing dimensions match the model geometry, that instrument tags on P&IDs match the instrument specification database, and that cable schedules match the electrical schematic. These cross-reference checks involve comparing data across multiple documents and databases, a task that is tedious and error-prone for humans but straightforward for software.
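Two of these cross-reference checks can be sketched with set and counting operations. The sketch assumes the BOM is available as `{part_number: quantity}`, the 3D model as a list of part numbers (one per component instance), and the instrument database as a dict keyed by tag; all part numbers and tags are hypothetical, and extracting them from CAD or P&ID files is not shown.

```python
from collections import Counter


def bom_vs_model(bom, model_parts):
    """Return {part_number: (bom_qty, model_qty)} wherever the two disagree."""
    model_counts = Counter(model_parts)
    mismatches = {}
    for pn in bom.keys() | model_counts.keys():
        bom_qty = bom.get(pn, 0)
        model_qty = model_counts.get(pn, 0)
        if bom_qty != model_qty:
            mismatches[pn] = (bom_qty, model_qty)
    return mismatches


def tags_vs_database(pid_tags, instrument_db):
    """Return (P&ID tags with no spec entry, spec entries not on the P&ID)."""
    pid, db = set(pid_tags), set(instrument_db)
    return sorted(pid - db), sorted(db - pid)


bom = {"VLV-100": 4, "PT-20": 2, "FIT-6": 12}
model_parts = ["VLV-100"] * 4 + ["PT-20"] * 3 + ["FIT-6"] * 12
print(bom_vs_model(bom, model_parts))  # {'PT-20': (2, 3)}

pid_tags = ["PT-101", "PT-102", "FT-201"]
instrument_db = {"PT-101": {}, "FT-201": {}, "TT-301": {}}
print(tags_vs_database(pid_tags, instrument_db))  # (['PT-102'], ['TT-301'])
```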

How Does AI-Assisted Review Change the Reviewer Workflow?

The most effective implementations of AI-assisted review do not eliminate human reviewers. They restructure the review workflow to focus human attention where it adds the most value:

Pre-review automated scan (10-30 minutes). Before human reviewers begin their work, the AI performs a comprehensive automated scan covering dimensional verification, clearance checking, standards compliance, BOM cross-referencing, and revision comparison. The output is a prioritized list of findings categorized by severity (critical, major, minor) and type (safety, compliance, manufacturing, documentation).
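A prioritized findings list is essentially a sort on severity and type. In the sketch below, the `Finding` record is hypothetical, and ranking safety ahead of compliance, manufacturing, and documentation within a severity level is an assumed convention; the finding texts are invented examples.

```python
from dataclasses import dataclass

SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}
# Assumed ordering of finding types within one severity level.
TYPE_ORDER = {"safety": 0, "compliance": 1, "manufacturing": 2, "documentation": 3}


@dataclass
class Finding:
    id: str
    severity: str  # "critical", "major", or "minor"
    kind: str      # "safety", "compliance", "manufacturing", or "documentation"
    summary: str


def prioritize(findings):
    """Sort by severity, then type, then id so the report order is stable."""
    return sorted(findings, key=lambda f: (SEVERITY_ORDER[f.severity],
                                           TYPE_ORDER[f.kind], f.id))


findings = [
    Finding("F3", "minor", "documentation", "Title block revision letter missing"),
    Finding("F1", "critical", "safety", "Gas line routed above electrical cabinet"),
    Finding("F2", "major", "manufacturing", "Weld callout on inaccessible joint"),
    Finding("F4", "critical", "compliance", "Hazard label missing on rear panel"),
]
for f in prioritize(findings):
    print(f.severity, f.kind, f.id, f.summary)
```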

Focused human review (reduced from 16-40 hours to 6-14 hours). Human reviewers receive the AI findings report and focus their expertise on three areas: evaluating the AI-flagged issues (confirming whether they are true errors or false positives), performing judgment-intensive checks that AI cannot handle (assessing overall design elegance, evaluating novel approaches, considering customer-specific preferences), and reviewing areas where the AI reported low confidence, indicating complex or ambiguous design elements.

AI-assisted markup tracking. As reviewers mark up issues, the AI tracks which issues have been addressed in subsequent revisions and which remain open. This eliminates the common problem of review findings being lost or forgotten during the revision cycle.
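One simple way to track markups across revisions is to link each open finding to the item it concerns and check whether that item changed in the next revision. This is a sketch under that assumption: a change only makes a finding a candidate for closure, and a reviewer still confirms the fix. The finding and item IDs are hypothetical.

```python
def update_findings(open_findings, changed_ids):
    """Split open findings into (candidate_addressed, still_open).

    open_findings maps finding id -> id of the item it refers to.
    A finding is a closure candidate when its item changed in the latest
    revision; a reviewer must still confirm the change actually fixes it.
    """
    changed = set(changed_ids)
    addressed = {f: item for f, item in open_findings.items() if item in changed}
    still_open = {f: item for f, item in open_findings.items() if item not in changed}
    return addressed, still_open


open_findings = {"RF-1": "DIM-14", "RF-2": "NOTE-2", "RF-3": "BOM-7"}
addressed, still_open = update_findings(open_findings, ["NOTE-2", "PT3.position"])
print("to verify:", addressed)
print("still open:", still_open)
```

Carrying `still_open` into the next revision's check is what keeps findings from silently dropping out of the cycle.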

Data from companies using this workflow shows a significant improvement in review effectiveness. A Korean equipment OEM measured review outcomes across 34 projects before and after implementing AI-assisted review:

Before AI: Average review time 28 hours, error detection rate 81%, average errors reaching assembly 8.4 per project.

After AI: Average review time 11 hours, error detection rate 96%, average errors reaching assembly 1.7 per project.

The reduction in review time combined with the improvement in detection rate created both a productivity gain and a quality gain, a combination that is rare in engineering process improvements where quality and speed typically trade off against each other.
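The before-and-after figures above can be turned into relative changes directly. This sketch introduces no new data; it only reproduces the arithmetic from the reported averages.

```python
def pct_reduction(old, new):
    """Relative reduction from old to new, in percent."""
    return (old - new) / old * 100


# Averages reported above for the 34 projects.
review_time = pct_reduction(28, 11)
errors_at_assembly = pct_reduction(8.4, 1.7)
# Share of errors missed by review: 19% before, 4% after.
missed_share = pct_reduction(1 - 0.81, 1 - 0.96)

print(f"Review time:              -{review_time:.1f}%")
print(f"Errors reaching assembly: -{errors_at_assembly:.1f}%")
print(f"Share of errors missed:   -{missed_share:.1f}%")
```

Review time falls by about 61%, within the 60-70% range cited earlier. The roughly 80% drop in errors reaching assembly is close to the roughly 79% drop in the share of errors missed, so the two reported improvements are consistent with each other.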

What Role Does Drawing Comparison Play in Multi-Revision Projects?

Semiconductor equipment designs undergo an average of 4.7 revisions between initial release and final manufacturing approval. Each revision cycle involves changes driven by customer feedback, internal review findings, procurement-driven substitutions, and manufacturing engineering input. Managing these revisions is a significant source of both engineering time and errors.

DrawingDiff addresses this specifically by providing automated, comprehensive comparison between any two revisions of a design document. The capabilities include:

Geometry overlay comparison. Two versions of a drawing or 3D model are overlaid and every difference is identified, highlighted, and categorized. Moved components, resized features, modified routing paths, and added or removed elements are all detected and presented in a clear visual format.

Annotation and dimension comparison. Changes to dimensions, notes, surface finish symbols, weld callouts, and other annotations are identified separately from geometry changes. This is important because annotation changes often indicate specification changes that have implications beyond the local geometry.

BOM delta analysis. Changes to the BOM between revisions are extracted and presented as additions, deletions, quantity changes, and substitutions. Each BOM change is linked to the corresponding geometry change (if any), making it easy to verify that BOM updates are consistent with design changes.
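BOM delta classification can be sketched by keying each BOM line on its find (item) number. Treating a changed part number at the same find number as a substitution is an assumption about how the BOM is structured, not a universal rule, and linking each delta to a geometry change is not shown. The part numbers are hypothetical.

```python
def bom_delta(old, new):
    """Classify BOM differences between two revisions.

    Each BOM maps a find number to (part_number, quantity). A different
    part number at the same find number is treated as a substitution
    (an assumed convention).
    """
    delta = {"added": [], "deleted": [], "quantity": [], "substituted": []}
    for find_no in sorted(old.keys() | new.keys()):
        if find_no not in old:
            delta["added"].append((find_no, new[find_no]))
        elif find_no not in new:
            delta["deleted"].append((find_no, old[find_no]))
        else:
            (old_pn, old_qty), (new_pn, new_qty) = old[find_no], new[find_no]
            if old_pn != new_pn:
                delta["substituted"].append((find_no, old_pn, new_pn))
            elif old_qty != new_qty:
                delta["quantity"].append((find_no, old_qty, new_qty))
    return delta


rev_b_bom = {10: ("VLV-100", 4), 20: ("PT-20", 2), 30: ("FIT-6", 12), 40: ("BRK-3", 2)}
rev_c_bom = {10: ("VLV-110", 4), 20: ("PT-20", 2), 30: ("FIT-6", 14), 50: ("BRK-5", 2)}
for change_type, items in bom_delta(rev_b_bom, rev_c_bom).items():
    print(change_type, items)
```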

Change validation against ECO. When an Engineering Change Order (ECO) specifies what changes should be made, DrawingDiff can verify that all specified changes have been implemented and that no unspecified changes were introduced. This is a critical quality check that most companies perform manually and incompletely.
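Once the changes in a revision are known (for example, from a revision diff), validating them against an ECO reduces to two set differences. The sketch assumes the ECO's scope can be expressed as the same item IDs the comparison produces; the IDs are hypothetical.

```python
def validate_against_eco(eco_items, changed_ids):
    """Compare the changes an ECO specifies with the changes actually found.

    Returns (not_implemented, unspecified): ECO items with no matching change,
    and changes the ECO does not mention (candidate unintended changes).
    """
    eco, changed = set(eco_items), set(changed_ids)
    return sorted(eco - changed), sorted(changed - eco)


eco = ["PT3.position", "PT3.tubing_route", "PT3.support_bracket"]
changed = ["PT3.position", "PT3.tubing_route", "DIM-22"]
missing, unintended = validate_against_eco(eco, changed)
print("not implemented:", missing)     # ['PT3.support_bracket']
print("not in ECO:     ", unintended)  # ['DIM-22']
```

The second list is the one that surfaces unintended changes like those described in the next paragraph.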

One multinational equipment company reported that after deploying automated drawing comparison, they discovered that 23% of their design revisions contained at least one unintended change, a modification that was not specified in the ECO and was likely introduced accidentally during the revision process. Before automated comparison, these unintended changes were detected only sporadically during manual review.

How Should Companies Implement AI-Assisted Design Review?

Start with revision comparison. This is the lowest-risk, highest-immediate-value application. Deploying automated drawing comparison requires no changes to the design process itself; it simply adds a verification step that catches errors already present. Most companies see measurable quality improvement within the first month.

Build your standards rule set incrementally. Do not attempt to encode all design standards at once. Start with the 20-30 highest-impact rules, typically the SEMI S2 requirements most commonly violated and the company-specific design standards most frequently checked during review. Expand the rule set based on data about which error types are most costly.

Calibrate AI sensitivity with your review team. Work with your reviewers to tune the balance between sensitivity (catching more issues) and specificity (reducing false positives). Too many false positives will cause reviewers to lose trust in the system and start ignoring findings. A false positive rate below 15% is the threshold where reviewers report the AI findings as genuinely helpful rather than annoying.
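One way to run this calibration is to replay past findings whose true/false status reviewers have already confirmed, then pick the lowest confidence threshold that keeps false positives under the target. Here "false positive rate" is taken to mean the share of reported findings that reviewers rejected, which is an interpretation, and the confidence scores are hypothetical. Lower thresholds report more findings, so the lowest acceptable threshold keeps sensitivity as high as possible.

```python
def pick_threshold(scored, max_fp_share=0.15):
    """Lowest confidence threshold whose reported findings are at most
    `max_fp_share` false positives, as (threshold, fp_share), else None.

    `scored` holds (confidence, is_real_error) pairs from past reviews.
    """
    for t in sorted({c for c, _ in scored}):
        reported = [real for c, real in scored if c >= t]
        fp_share = reported.count(False) / len(reported)
        if fp_share <= max_fp_share:
            return t, fp_share
    return None


history = [(0.95, True), (0.9, True), (0.85, True), (0.8, True), (0.75, False),
           (0.7, True), (0.65, True), (0.6, False), (0.5, False), (0.4, False)]
print(pick_threshold(history))
```

In this made-up history a threshold of 0.65 reports seven findings, one of them false (about 14%), while still keeping all six real errors.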

Measure detection rate improvement explicitly. Track the number and type of errors found during review, during assembly, and during commissioning. The ratio of downstream errors to review-caught errors is your key performance indicator. If this ratio is decreasing over time, your AI-assisted review is working.
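The KPI described here is a simple ratio per reporting period. The quarterly counts below are hypothetical, and the strict "every period lower than the last" test is a deliberately simple choice; with real, noisy data a smoothed trend would likely be more useful.

```python
def escape_ratio(caught_in_review, found_in_assembly, found_in_commissioning):
    """Downstream errors per error caught in review (lower is better)."""
    return (found_in_assembly + found_in_commissioning) / caught_in_review


def is_decreasing(values):
    """True if each period's value is lower than the one before it."""
    return all(b < a for a, b in zip(values, values[1:]))


# Hypothetical quarters: (caught in review, found in assembly, found in commissioning)
quarters = [(40, 8, 2), (45, 6, 1), (50, 3, 1)]
ratios = [escape_ratio(*q) for q in quarters]
print([round(r, 3) for r in ratios], "improving:", is_decreasing(ratios))
```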

Integrate review findings into the design knowledge base. Every error caught during review is a data point about what goes wrong in your design process. Aggregate these findings to identify systematic issues: are certain component types frequently mis-specified? Are certain design zones consistently problematic? Are certain types of customer requirements regularly misinterpreted? This data drives continuous improvement in both the AI review system and the upstream design process.
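A first pass at this aggregation can be a frequency count over whichever attribute the question is about. The field names and findings below are hypothetical; they assume each logged finding records at least a component type and a design zone.

```python
from collections import Counter


def top_patterns(findings, field, n=3):
    """Most frequent values of one attribute across logged review findings."""
    return Counter(f[field] for f in findings).most_common(n)


findings = [
    {"component_type": "valve", "zone": "gas panel", "cause": "mis-specified"},
    {"component_type": "fitting", "zone": "gas panel", "cause": "clearance"},
    {"component_type": "valve", "zone": "chamber lid", "cause": "mis-specified"},
    {"component_type": "valve", "zone": "gas panel", "cause": "customer requirement"},
    {"component_type": "sensor", "zone": "exhaust", "cause": "mis-specified"},
]
print(top_patterns(findings, "component_type"))
print(top_patterns(findings, "zone"))
```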

Design review is not going away. It remains essential as the final verification step before significant resources are committed to procurement and manufacturing. But the nature of review is changing. AI handles the systematic, exhaustive, fatigue-free checking of rules and specifications. Human reviewers focus on judgment, creativity, and the engineering intuition that no algorithm can replicate. Together, they form a review capability that is both faster and more effective than either could achieve alone.

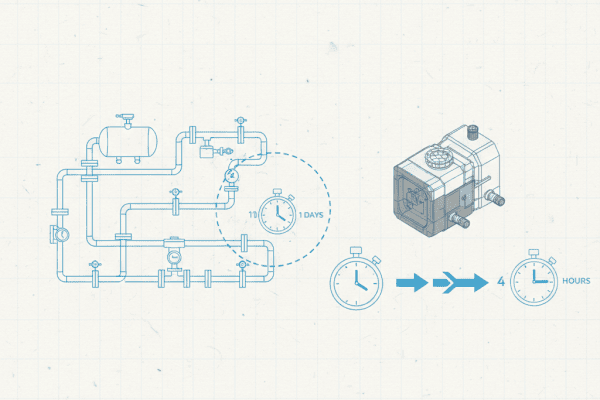

Still designing assemblies manually?

NeuroBox D converts your P&ID into a complete SolidWorks assembly — in hours, not days. See how it works with your own designs.

NeuroBox D generates native SolidWorks 3D assemblies from P&ID in 4 hours. Auto BOM, zero errors.

Book a Demo →See how NeuroBox reduces trial wafers by 80%

From Smart DOE to real-time VM/R2R — our AI runs on your equipment, not in the cloud.

Book a Demo →