- Why Is Edge Computing Suddenly Critical for Industrial AI?

- What Makes NVIDIA Jetson the Leading Edge AI Platform?

- How Are Semiconductor Companies Deploying Jetson-Based AI?

- What Does the Edge-Cloud Architecture Look Like?

- What Are the Total Cost of Ownership Implications?

- Where Is the Edge AI Market Headed?

Key Takeaway

The NVIDIA Jetson platform has emerged as the de facto standard for industrial edge AI, with 1.5 million+ developers and deployment in over 1,000 industrial applications. For semiconductor manufacturing, Jetson-based edge nodes deliver sub-10ms inference latency versus 100-500ms for cloud-based alternatives — a difference that enables real-time equipment control impossible with cloud architecture. The combination of NVIDIA hardware and domain-specific AI software is creating a new category of intelligent manufacturing infrastructure.

Why Is Edge Computing Suddenly Critical for Industrial AI?

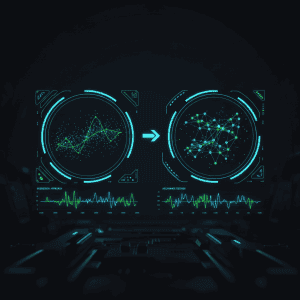

The first wave of industrial AI relied on cloud computing: collect data from factory equipment, send it to AWS or Azure, run ML models, and return predictions. For analytics and reporting use cases, this architecture works acceptably. But for real-time manufacturing control — the applications that deliver 80% of AI’s value in semiconductor fabs — cloud computing hits a fundamental physical limit: latency.

A round-trip from a semiconductor fab in Taiwan to AWS us-east-1 takes 150-300ms. Even with edge-located cloud regions, latency rarely drops below 50-100ms. For applications like run-to-run recipe adjustment, real-time fault detection, or closed-loop process control, the required response time is under 10ms. The physics of network latency makes cloud-based real-time control impossible.

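This is not a temporary limitation that faster networks will solve. The speed of light imposes a hard floor on round-trip latency over distance. Taipei and Northern Virginia are roughly 12,600 km apart along the great circle, so even a signal traveling in a vacuum would need about 84ms for the round trip. In optical fiber, where light travels at roughly two-thirds of its vacuum speed, the floor rises to about 125ms, before any routing, queuing, or processing delay is added. No amount of 5G, Wi-Fi 7, or network optimization can overcome physics.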
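The propagation floor can be estimated from great-circle distance alone. The sketch below is a back-of-the-envelope calculation under stated assumptions: the coordinates for Taipei and for Northern Virginia (near Ashburn) are approximate, and the fiber refractive index of 1.47 is a typical value for silica fiber, not a measured one.

```python
import math

EARTH_RADIUS_KM = 6371.0
SPEED_OF_LIGHT_KM_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47  # assumption: typical silica single-mode fiber


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_fiber_rtt_ms(distance_km, refractive_index=FIBER_REFRACTIVE_INDEX):
    """Lower bound on round-trip time over a perfectly straight fiber path."""
    speed_in_fiber = SPEED_OF_LIGHT_KM_S / refractive_index
    return 2 * distance_km / speed_in_fiber * 1000.0


# Approximate coordinates (assumed): Taipei and Northern Virginia near Ashburn.
TAIPEI = (25.03, 121.56)
NORTHERN_VIRGINIA = (39.04, -77.49)

d = great_circle_km(*TAIPEI, *NORTHERN_VIRGINIA)
print(f"great-circle distance: {d:,.0f} km")
print(f"minimum fiber RTT:     {min_fiber_rtt_ms(d):.0f} ms")
```

Real submarine cable routes are longer than the great circle and add switching hops, which is one reason measured round trips land in the 150-300ms range quoted above.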

The solution is to move AI computation to the edge — inside the fab, physically adjacent to the equipment it controls. And in the edge AI hardware market, one platform has established clear dominance: NVIDIA Jetson.

What Makes NVIDIA Jetson the Leading Edge AI Platform?

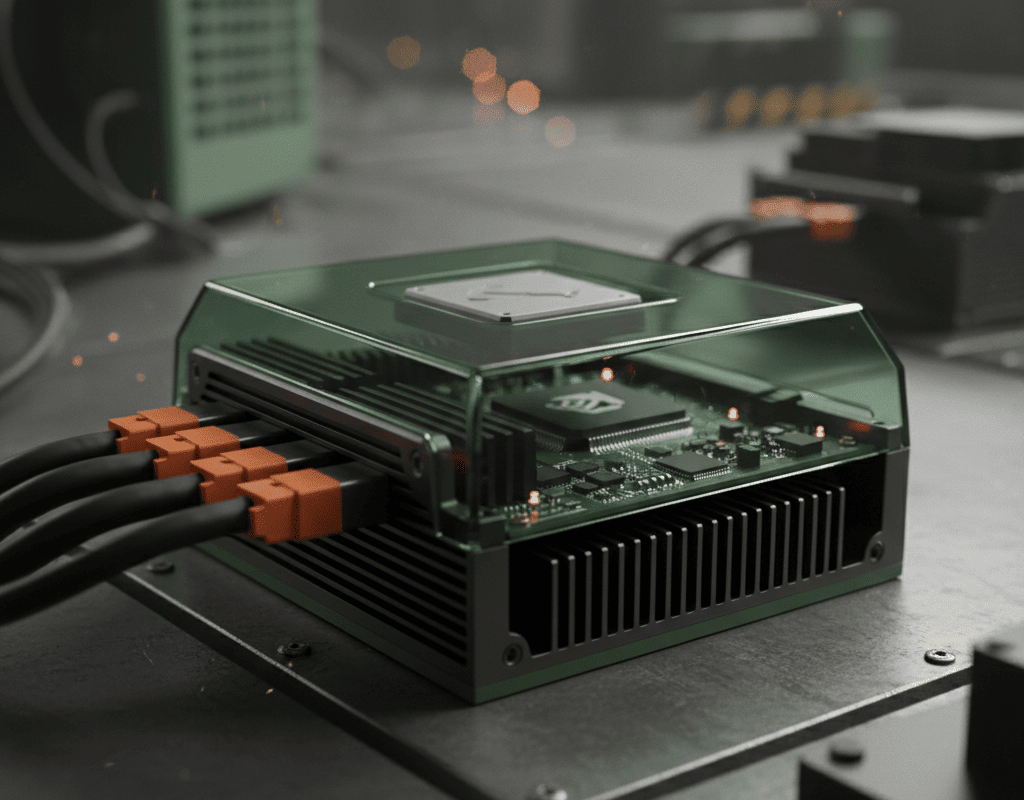

NVIDIA’s Jetson platform — spanning from the entry-level Jetson Orin Nano (40 TOPS, $249) to the Jetson AGX Orin (275 TOPS, $1,999) — has become the preferred hardware for industrial edge AI for several reasons:

Performance per watt. The Jetson AGX Orin delivers up to 275 trillion operations per second (TOPS, sparse INT8) while consuming only 15-60W. For comparison, a datacenter GPU such as the A100 draws 250-400W depending on form factor and requires datacenter infrastructure. In a semiconductor fab where power consumption is already a major concern (a modern 300mm fab consumes 50-100MW), edge nodes that deliver high AI performance at minimal power draw are essential.

Software ecosystem maturity. NVIDIA’s CUDA platform, JetPack SDK, and the broader NGC container catalog provide a complete software stack for industrial AI. Models trained on NVIDIA GPUs in the cloud can be exported (for example, to ONNX) and compiled with TensorRT on the Jetson itself without rewriting the model code; the compile step runs on the target because TensorRT engines are specific to the GPU and software versions they are built for. This cloud-to-edge pipeline dramatically reduces deployment friction.

Developer community scale. Over 1.5 million developers actively build on the Jetson platform, with 1,000+ companies offering Jetson-based products. This ecosystem effect means better tools, more pre-trained models, and faster problem-solving. For a semiconductor company evaluating edge AI platforms, the talent availability question alone favors Jetson.

Industrial-grade reliability. Standard Jetson AGX Orin modules are rated for -25°C to 80°C, and the Jetson AGX Orin Industrial variant extends this to -40°C to 85°C; the industrial modules have also undergone extensive vibration and shock testing. The Jetson AGX Orin Industrial module is specifically designed for mission-critical applications with a 10+ year supply commitment, an important consideration for semiconductor equipment with 15-20 year operational lifespans.

How Are Semiconductor Companies Deploying Jetson-Based AI?

The deployment patterns for Jetson in semiconductor manufacturing fall into four primary use cases:

Use Case 1: Real-Time Equipment Monitoring and FDC. A Jetson edge node connected to equipment via SECS/GEM processes hundreds of sensor channels simultaneously, running fault detection models that identify anomalies in milliseconds. Compared to server-based FDC systems (which introduce 1-5 second latency), edge-deployed FDC catches faults 10-100x faster — the difference between catching a drift on the current wafer versus losing the next 5 wafers.

A typical deployment: one Jetson AGX Orin per 5-10 tools, running 3-5 concurrent FDC models per tool, processing 50,000+ sensor data points per second with end-to-end latency under 8ms. Total hardware cost per tool: $200-$400. Total value per tool: $50,000-$200,000 annually in prevented scrap and downtime.
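Production FDC models are usually multivariate, but the core idea of flagging a reading that leaves its normal operating envelope can be illustrated with per-channel control limits learned from known-good runs. The sketch below is a minimal univariate version under stated assumptions: the channel names, the baseline readings, and the 3-sigma limit are hypothetical, and a real node would receive values from the equipment interface rather than from a dictionary.

```python
from statistics import mean, stdev


def fit_limits(baseline, k=3.0):
    """Learn per-channel (low, high) limits as mean +/- k standard deviations.

    baseline maps channel name -> list of readings from known-good wafers.
    """
    limits = {}
    for channel, values in baseline.items():
        mu, sigma = mean(values), stdev(values)
        limits[channel] = (mu - k * sigma, mu + k * sigma)
    return limits


def detect_faults(sample, limits):
    """Return the channels whose current reading falls outside its limits."""
    faults = []
    for channel, value in sample.items():
        low, high = limits[channel]
        if not low <= value <= high:
            faults.append(channel)
    return faults


# Hypothetical baseline for two sensor channels.
baseline = {
    "chamber_pressure_mtorr": [50.1, 49.8, 50.0, 50.2, 49.9],
    "rf_power_w": [300.0, 301.5, 299.2, 300.4, 299.9],
}
limits = fit_limits(baseline)
print(detect_faults({"chamber_pressure_mtorr": 50.05, "rf_power_w": 312.0}, limits))
```

Fitting the limits offline and keeping only a comparison per channel at run time is what makes this kind of check cheap enough to evaluate on every sample.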

Use Case 2: Visual Inspection and Defect Classification. Computer vision models running on Jetson classify wafer defects from inline inspection images in real time. The Jetson’s GPU acceleration handles deep learning inference on high-resolution images (4K+) at 30-60 frames per second, fast enough for inline inspection without creating a bottleneck.

MST’s NeuroBox E5200V leverages exactly this architecture: a Jetson-powered edge node running trained classification models that categorize defect types with 95%+ accuracy, flagging critical defects for immediate engineering review while allowing benign defects to pass without manual inspection.
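The triage step that follows classification can be sketched as a routing rule on the model’s output. Everything here is an illustrative assumption: the defect class names, which classes count as critical, and the 0.95 confidence threshold. It does not describe the NeuroBox E5200V’s actual logic.

```python
from dataclasses import dataclass

# Hypothetical class taxonomy; a real fab defines its own.
CRITICAL_CLASSES = {"scratch", "particle_cluster", "pattern_bridge"}
CONFIDENCE_THRESHOLD = 0.95  # assumed operating point


@dataclass
class Prediction:
    defect_id: str
    label: str
    confidence: float


def route(pred: Prediction) -> str:
    """Decide what happens to one classified defect."""
    if pred.label in CRITICAL_CLASSES:
        return "engineering_review"  # critical: always escalate
    if pred.confidence < CONFIDENCE_THRESHOLD:
        return "manual_inspection"  # uncertain: keep a human in the loop
    return "pass"  # confidently benign: skip manual inspection


for p in [
    Prediction("d1", "scratch", 0.99),
    Prediction("d2", "nuisance", 0.98),
    Prediction("d3", "nuisance", 0.70),
]:
    print(p.defect_id, route(p))
```

Escalating critical classes regardless of confidence is a deliberate asymmetry in this sketch: a missed critical defect costs far more than an extra engineering review.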

Use Case 3: Virtual Metrology at the Edge. VM models that predict wafer quality from equipment sensor data are ideal for edge deployment. The model needs sub-second inference to provide predictions before the next wafer starts processing. A Jetson node running an optimized TensorRT model delivers predictions in 2-5ms — orders of magnitude faster than needed, leaving headroom for model complexity growth.
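The latency budget for virtual metrology can be checked with a few lines of code. The sketch below uses a hypothetical linear model (feature names and coefficients are invented for illustration) as a stand-in for a trained model; on a Jetson the predict call would typically invoke a TensorRT engine, but the budget check around it is the same.

```python
import time

# Hypothetical pre-trained linear VM model:
#   thickness_nm = BIAS + sum(WEIGHTS[f] * features[f])
BIAS = 100.0
WEIGHTS = {"rf_power_w": 0.05, "pressure_mtorr": -0.4, "time_s": 0.8}
BUDGET_S = 1.0  # "sub-second" requirement: predict before the next wafer starts


def predict_thickness(features):
    """Predict film thickness (nm) from summarized sensor features."""
    return BIAS + sum(WEIGHTS[name] * features[name] for name in WEIGHTS)


def timed_prediction(features):
    """Return (prediction, latency in seconds) measured with a monotonic clock."""
    start = time.perf_counter()
    y = predict_thickness(features)
    return y, time.perf_counter() - start


y, latency = timed_prediction({"rf_power_w": 300.0, "pressure_mtorr": 50.0, "time_s": 60.0})
print(f"predicted thickness {y:.1f} nm in {latency * 1e3:.3f} ms")
print("within budget" if latency < BUDGET_S else "over budget")
```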

Use Case 4: Autonomous Equipment Control. The most advanced use case: Jetson nodes that make real-time recipe adjustments based on predicted process outcomes. This closed-loop control requires deterministic inference latency (consistently under 10ms) and integration with equipment control systems. A dedicated Jetson node running a fixed, pre-built TensorRT engine, optionally on the real-time (PREEMPT_RT) Linux kernel that JetPack supports, provides far more predictable execution than a shared, general-purpose server.
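One widely used control law for run-to-run recipe adjustment is the exponentially weighted moving average (EWMA) controller. The sketch below is an illustration under stated assumptions, not the controller any particular product runs: it models the process as y = a + b * u with a known gain b, re-estimates the drifting offset a after every wafer, and solves for the next recipe setting. The gain, EWMA weight, target, and drift values are hypothetical.

```python
class EwmaR2RController:
    """EWMA run-to-run controller for a process modeled as y = a + b * u.

    The gain b is assumed known; the offset a is allowed to drift and is
    re-estimated after every wafer from the measured output.
    """

    def __init__(self, target, gain, initial_offset, weight=0.3):
        self.target = target
        self.gain = gain
        self.offset = initial_offset
        self.weight = weight  # EWMA smoothing factor, 0 < weight <= 1

    def next_recipe(self):
        """Recipe setting that puts the model's predicted output on target."""
        return (self.target - self.offset) / self.gain

    def update(self, recipe, measured):
        """Blend the offset implied by the latest wafer into the estimate."""
        observed_offset = measured - self.gain * recipe
        self.offset = self.weight * observed_offset + (1 - self.weight) * self.offset


def simulate(n_wafers=10, drift_per_wafer=0.5):
    """Run the controller against a simulated tool whose offset drifts each wafer."""
    ctrl = EwmaR2RController(target=100.0, gain=2.0, initial_offset=10.0)
    true_offset = 10.0
    outputs = []
    for _ in range(n_wafers):
        u = ctrl.next_recipe()
        y = true_offset + 2.0 * u  # simulated tool; its true gain matches the model
        ctrl.update(u, y)
        outputs.append(y)
        true_offset += drift_per_wafer
    return outputs


for i, y in enumerate(simulate()):
    print(f"wafer {i}: output {y:.2f} (target 100.00)")
```

With a steady drift, a single EWMA settles at a constant bias of drift divided by weight (about 1.67 units here) rather than returning exactly to target; double-EWMA variants are commonly used when persistent drift is expected.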

What Does the Edge-Cloud Architecture Look Like?

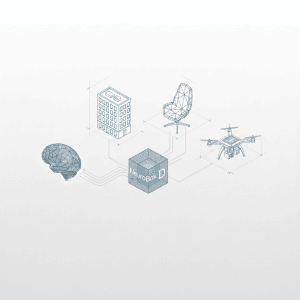

The optimal architecture for semiconductor AI is not pure edge or pure cloud — it is a hierarchical system that puts the right computation at the right location:

Layer 1: Tool Edge (Jetson nodes). One edge node per 5-10 tools, running real-time inference for FDC, VM, and R2R control. These nodes process raw sensor data, execute sub-10ms decisions, and stream summarized data (features, predictions, alerts) to the fab-level aggregator. Hardware: Jetson AGX Orin or Orin NX. Power: 15-40W per node.

Layer 2: Fab-Level Aggregator (Server GPU). A small server cluster (2-4 nodes with NVIDIA L40S or A30 GPUs) aggregates data from all edge nodes, runs cross-tool analytics, performs model training, and hosts dashboards and reporting tools. This layer runs computations that benefit from seeing data across all tools simultaneously — correlation analysis, fab-wide optimization, and model retraining.

Layer 3: Cloud Analytics (optional). For multi-fab organizations, a cloud layer aggregates data from multiple fab-level systems, runs cross-fab benchmarking, and enables transfer learning across sites. This layer has no real-time requirements — batch analytics with hours-to-days latency is sufficient.
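Layer 1’s job of streaming summarized data rather than raw samples can be sketched as a reduction of each sensor window into a small record. The feature set (mean, standard deviation, min, max), the field names, the tool ID, and the JSON encoding are illustrative assumptions, not a defined message format.

```python
import json
from statistics import mean, pstdev


def summarize_window(tool_id, channel, samples, prediction=None, alert=False):
    """Reduce one window of raw sensor samples to a compact JSON record."""
    record = {
        "tool_id": tool_id,
        "channel": channel,
        "n": len(samples),
        "mean": mean(samples),
        "std": pstdev(samples),
        "min": min(samples),
        "max": max(samples),
        "prediction": prediction,  # e.g. a VM estimate; None if no model ran
        "alert": alert,  # True if an FDC model flagged this window
    }
    return json.dumps(record)


# Hypothetical window of 1,000 raw pressure readings from one tool.
raw = [50.0 + 0.01 * (i % 7) for i in range(1000)]
msg = summarize_window("ETCH-07", "chamber_pressure_mtorr", raw, prediction=143.0)
print(len(msg), "bytes sent instead of", len(json.dumps(raw)), "bytes of raw data")
```

Because only the record leaves the node, the raw samples can stay inside the fab network while the aggregator still receives enough to run cross-tool analytics.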

This three-layer architecture provides the best of both worlds: real-time control at the edge, cross-tool intelligence at the fab level, and strategic analytics in the cloud. The Jetson ecosystem makes the edge layer cost-effective and reliable, while NVIDIA’s software tools ensure seamless model deployment across all three layers.

What Are the Total Cost of Ownership Implications?

Edge AI deployment fundamentally changes the cost structure of semiconductor manufacturing AI:

Hardware costs drop dramatically. A Jetson AGX Orin ($1,999) replaces a server that would cost $15,000-$30,000 for equivalent inference capability. For a fab deploying AI across 200 tools at one node per 5-10 tools (20-40 nodes), the edge approach costs roughly $40,000-$80,000 in hardware versus $300,000-$1,200,000 for a server-based approach. The savings grow further when you factor in eliminated cloud computing charges, which typically run $100,000-$500,000 per year for a large-scale deployment.
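The hardware comparison can be reproduced from the unit prices in this section. The sketch below assumes, as the text does, one $1,999 Jetson AGX Orin per 5-10 tools and a one-for-one replacement of $15,000-$30,000 servers; it omits enclosures, networking, installation, and support, which a real budget would include.

```python
import math

JETSON_AGX_ORIN_PRICE = 1_999  # USD, figure quoted in the text
SERVER_PRICE_RANGE = (15_000, 30_000)  # USD per equivalent server, from the text


def nodes_needed(tools, tools_per_node):
    """Edge nodes required; a partially filled node still has to be bought."""
    return math.ceil(tools / tools_per_node)


def hardware_cost(tools, tools_per_node):
    """Return (nodes, edge cost, server cost low, server cost high) in USD.

    Assumes each Jetson replaces exactly one server, as the text states.
    """
    nodes = nodes_needed(tools, tools_per_node)
    edge = nodes * JETSON_AGX_ORIN_PRICE
    server_low, server_high = (nodes * price for price in SERVER_PRICE_RANGE)
    return nodes, edge, server_low, server_high


for per_node in (10, 5):
    nodes, edge, low, high = hardware_cost(200, per_node)
    print(f"{per_node} tools/node: {nodes} nodes, edge ${edge:,} vs "
          f"servers ${low:,}-${high:,} (${edge / 200:,.0f} per tool)")
```

The ceiling in `nodes_needed` is also why the per-tool figure is an average: the 201st tool on a fleet of full 10-tool nodes triggers a whole new $1,999 node.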

Network infrastructure simplifies. Edge nodes communicate with equipment over the existing fab network — no additional network infrastructure required. Cloud-based approaches require high-bandwidth, low-latency connections from the fab to the cloud, often necessitating dedicated links that cost $50,000-$200,000 per year.

Data sovereignty is preserved. Raw equipment data never leaves the fab network. Only summarized features and model parameters are transmitted to external systems. This architecture helps satisfy strict data security requirements, a critical consideration for fabs in regulated jurisdictions or those processing defense-related devices.

Scaling is linear and predictable. Adding AI to new tools means adding edge nodes at roughly one $1,999 node per 5-10 tools, an incremental cost of about $200-$400 per tool. Costs grow in small per-node steps rather than the large step-function increases that come from needing bigger servers, more cloud instances, or network upgrades. This near-linear scaling makes budgeting straightforward and removes most of the infrastructure capacity planning that plagues server-based approaches.

Where Is the Edge AI Market Headed?

NVIDIA’s edge AI roadmap continues to push performance boundaries. The next-generation Jetson Thor platform, expected in 2025, will deliver up to 800 TOPS in an edge-deployable form factor — enough to run large language models locally. This opens the possibility of conversational AI interfaces for equipment operators, natural language queries against manufacturing data, and AI agents that can reason about complex production scenarios without cloud connectivity.

For semiconductor manufacturing, the convergence of powerful edge hardware, mature software ecosystems, and proven deployment patterns creates a clear direction: the intelligent fab of the future will run on a mesh of edge AI nodes that provide real-time, autonomous equipment control. The cloud will serve analytics and training, but the real-time decisions that drive 80% of AI value will happen at the edge.

Companies building their AI infrastructure today should architect for this edge-first future. The hardware is available, the software is mature, and the economics are compelling. Every month spent on cloud-dependent AI architectures is a month of delayed value realization and accumulating technical debt.
