- Why Does Semiconductor Data Demand a Different Security Model?
- How Does Edge AI Architecture Eliminate These Risks?
- What Performance Gains Come from Local Processing?
- How Do Compliance Frameworks Favor Edge Deployment?
- What Does the Total Cost of Ownership Look Like?
- Why Is the Industry Moving Toward Edge-First AI?

Key Takeaway

Edge AI keeps sensitive semiconductor manufacturing data inside the fab, which removes cloud-transfer risk and simplifies compliance with ITAR, EAR, and China’s Cybersecurity Law (CSL). MST’s NeuroBox processes all Virtual Metrology (VM), Run-to-Run (R2R), and Fault Detection and Classification (FDC) workloads locally, with inference latency under 5 ms and no process data leaving the facility network.

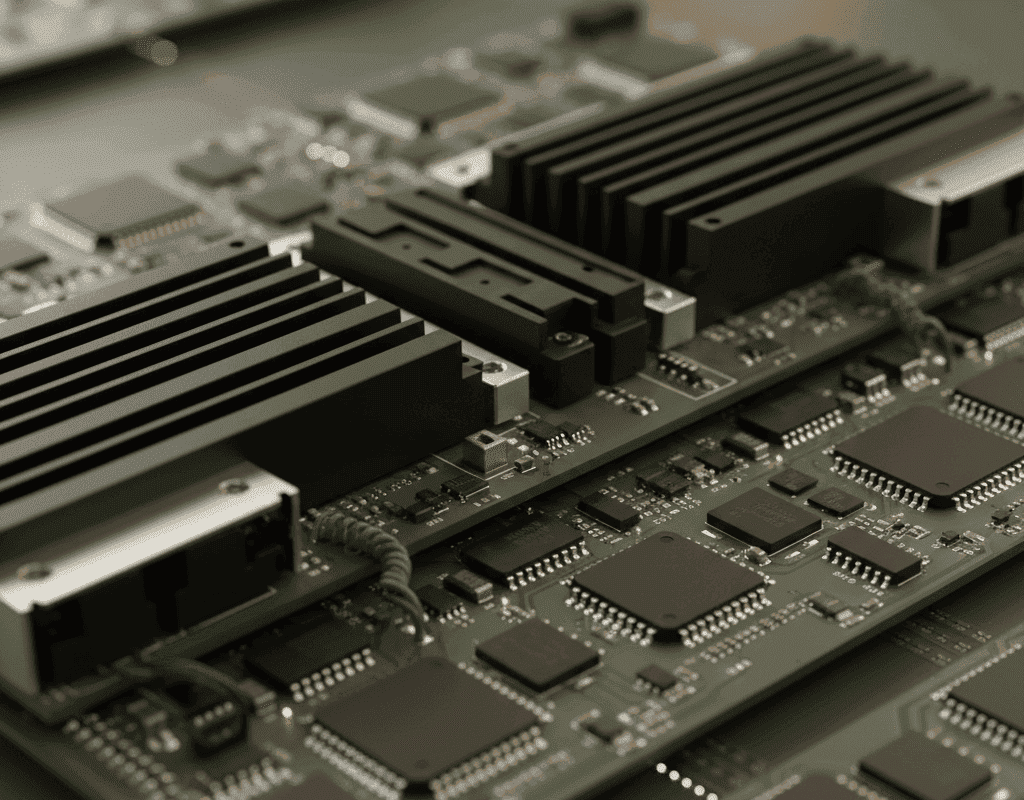

Semiconductor fabs generate between 5 and 30 terabytes of process data every single day. Each wafer pass through a CVD chamber, etch tool, or lithography scanner creates thousands of sensor readings — temperature profiles, RF power curves, gas flow signatures, and endpoint detection traces. This data is the competitive crown jewel of every chipmaker. Losing control of it is not a theoretical risk; it is an existential one.
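To put that volume in network terms, the short calculation below converts a daily data volume into the average line rate needed to ship it off-site within the same day. It is a back-of-the-envelope sketch that assumes decimal terabytes and perfectly even traffic; the function name is illustrative only.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_gbit_per_s(terabytes_per_day: float) -> float:
    """Average line rate (Gbit/s) needed to move one day's data within that day."""
    bits_per_day = terabytes_per_day * 1e12 * 8  # decimal TB -> bits
    return bits_per_day / SECONDS_PER_DAY / 1e9


for tb in (5, 30):
    print(f"{tb} TB/day ~ {sustained_gbit_per_s(tb):.2f} Gbit/s sustained")
# prints:
# 5 TB/day ~ 0.46 Gbit/s sustained
# 30 TB/day ~ 2.78 Gbit/s sustained
```

Real traffic is bursty, so peak demand would sit above these averages.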

Yet the prevailing AI deployment model in manufacturing still assumes that production data should flow to a centralized cloud for training and inference. In semiconductor fabrication, that assumption is fundamentally broken.

Why Does Semiconductor Data Demand a Different Security Model?

Unlike retail analytics or social media logs, semiconductor process data is regulated under multiple frameworks. The U.S. International Traffic in Arms Regulations (ITAR) restrict the transfer of certain advanced manufacturing process parameters. The Export Administration Regulations (EAR) impose controls on semiconductor technology sharing. China’s Cybersecurity Law and Cross-Border Data Transfer regulations, updated significantly in 2025, require that critical manufacturing data remain within sovereign boundaries.

A 2025 Deloitte survey of 200 semiconductor executives found that 78% ranked data sovereignty as a top-three concern when evaluating AI vendors. The reason is straightforward: a single unauthorized data transfer can trigger regulatory penalties exceeding $10 million and, more critically, compromise years of proprietary process IP.

Cloud-based AI systems create multiple vulnerability surfaces. Data in transit — even when encrypted — passes through third-party networks. Data at rest sits on shared infrastructure managed by cloud providers. API endpoints become attack vectors. Every hop between the fab and the cloud is a potential leak.

How Does Edge AI Architecture Eliminate These Risks?

Edge AI inverts the paradigm. Instead of sending data to the model, you bring the model to the data. MST’s NeuroBox series deploys AI inference and model training directly on ruggedized hardware installed inside the fab — typically within the subfab or in the equipment bay.

The architecture is straightforward: NeuroBox connects to equipment via SECS/GEM or EtherCAT, ingests real-time sensor streams, runs inference models for Virtual Metrology, Fault Detection and Classification, and Run-to-Run control, and outputs control commands — all without a single byte leaving the facility network. The entire data lifecycle, from collection to model training to prediction, occurs on-premises.
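The loop described above can be sketched in a few lines. This is a toy illustration, not MST’s implementation or the NeuroBox API: the class and function names, the z-score fault check, the EWMA controller, and the simulated process (`y = 2.0 * u + 5.0`) are all assumptions chosen to keep the example self-contained. It shows the three workloads running in one local process: an FDC gate, a VM prediction, and an R2R correction.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class EwmaR2R:
    """EWMA run-to-run controller for a process modeled as y = gain * u + offset."""
    target: float
    gain: float
    weight: float = 0.3   # how strongly each new run updates the offset estimate
    offset: float = 0.0   # running estimate of the process offset

    def recipe(self) -> float:
        """Recipe setting expected to hit the target under the current estimate."""
        return (self.target - self.offset) / self.gain

    def update(self, u: float, y: float) -> None:
        """Blend the offset observed on this run into the running estimate."""
        observed_offset = y - self.gain * u
        self.offset = self.weight * observed_offset + (1 - self.weight) * self.offset


def fdc_alarm(baseline: list[float], reading: float, z_limit: float = 3.0) -> bool:
    """Flag a reading more than z_limit standard deviations from the baseline mean."""
    return abs(reading - mean(baseline)) > z_limit * stdev(baseline)


def virtual_metrology(u: float) -> float:
    """Stand-in for a trained VM model; the simulated process hides an offset of 5."""
    return 2.0 * u + 5.0


def run_local_loop(runs: int = 20) -> float:
    """Run FDC, VM, and R2R in sequence and return the output after the last update."""
    controller = EwmaR2R(target=100.0, gain=2.0)
    baseline = [1.00, 1.02, 0.98, 1.01, 0.99]  # made-up healthy gas-flow readings
    for _ in range(runs):
        u = controller.recipe()
        if fdc_alarm(baseline, reading=1.01):  # FDC gate before acting on the run
            raise RuntimeError("fault detected, holding recipe")
        y = virtual_metrology(u)               # predicted film thickness
        controller.update(u, y)                # R2R correction for the next run
    return virtual_metrology(controller.recipe())


print(f"output after 20 runs: {run_local_loop():.2f} (target 100.00)")
```

Nothing in the loop calls out to a network service, which is the point of the architecture: the sensor reading, the prediction, and the recipe correction all stay in the same process on the same device.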

This is not merely a deployment preference. It is a security architecture decision. With edge processing, the attack surface shrinks to the physical device itself, which sits behind the fab’s existing perimeter security, access control systems, and network segmentation.

What Performance Gains Come from Local Processing?

Security is the primary driver, but performance follows closely. Cloud round-trip latency for AI inference typically ranges from 50 to 200 milliseconds, depending on geographic distance and network congestion. For control loops that must act within a single process step, such as in-run fault detection or wafer-to-wafer Run-to-Run adjustment, that latency is too slow.

NeuroBox delivers inference latency under 5 milliseconds, fast enough to adjust parameters within a single process step. In a CVD chamber running at 400°C, a 200 ms delay in detecting a gas flow anomaly can mean the difference between a recoverable drift and a scrapped lot worth $50,000 or more.
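One way to read those latency figures is in sensor samples: how many readings arrive before the system can react. The sketch below assumes a hypothetical 1 kHz sampling rate, which is not a figure from MST; substitute the real rate of the sensor in question.

```python
def samples_during_delay(delay_ms: float, sample_rate_hz: float) -> int:
    """Number of sensor samples that arrive while a detection is still in flight."""
    return round(delay_ms / 1000 * sample_rate_hz)


SAMPLE_RATE_HZ = 1000  # assumed for illustration only

for label, delay_ms in (("cloud, worst case", 200), ("edge", 5)):
    n = samples_during_delay(delay_ms, SAMPLE_RATE_HZ)
    print(f"{label}: {n} samples pass before a response")
# prints:
# cloud, worst case: 200 samples pass before a response
# edge: 5 samples pass before a response
```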

Field data from MST deployments across 12 production lines shows that edge-deployed FDC models achieve a 23% improvement in early fault detection compared to cloud-connected alternatives, primarily because the models process every sensor reading in real time rather than sampling at reduced frequency to manage bandwidth costs.
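The sampling effect is easy to reproduce on synthetic data. In the sketch below, a three-sample excursion is visible in the full-rate trace but falls between the points kept by a 10:1 decimation. The trace, threshold, and decimation factor are invented for illustration, and a pipeline that averages or takes the maximum before decimating would behave differently.

```python
def excursions(samples: list[float], limit: float) -> list[int]:
    """Indices of samples that exceed the alarm limit."""
    return [i for i, value in enumerate(samples) if value > limit]


def build_trace(length: int = 1000, start: int = 503, width: int = 3) -> list[float]:
    """Flat synthetic sensor trace with one short excursion above the limit."""
    trace = [1.0] * length
    for i in range(start, start + width):
        trace[i] = 1.9
    return trace


trace = build_trace()
full_rate = excursions(trace, limit=1.5)
decimated = excursions(trace[::10], limit=1.5)  # keep every 10th sample only

print("full rate:", full_rate)   # prints: full rate: [503, 504, 505]
print("decimated:", decimated)   # prints: decimated: []
```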

How Do Compliance Frameworks Favor Edge Deployment?

Regulatory trends are accelerating in one direction: stricter data localization requirements. The EU Chips Act, which entered into force in 2023, encourages data sovereignty for semiconductor manufacturing within the European Union. South Korea’s Personal Information Protection Act (PIPA) has been interpreted to cover manufacturing process data that could reveal trade secrets. Taiwan’s National Security Act amendments in 2024 explicitly restricted the cross-border transfer of advanced semiconductor process data.

For multinational fabs operating across jurisdictions, edge AI provides a compliance-by-architecture approach. Each facility runs its own NeuroBox instances with locally trained models. There is no cross-border data flow to audit, no data processing agreements to negotiate with cloud vendors, and no dependency on foreign infrastructure.

MST’s architecture supports federated learning where needed: model improvements from one facility can be shared as parameter updates (weights and gradients) without transmitting raw process data. This preserves the learning benefits of multi-site deployment while maintaining strict data isolation.
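A common way to combine parameter updates across sites is federated averaging, where each site’s weights count in proportion to the number of samples it trained on. The sketch below shows that aggregation step only. Whether MST uses this exact scheme is not stated, so treat the function and the example numbers as assumptions.

```python
def federated_average(
    site_weights: list[list[float]], site_samples: list[int]
) -> list[float]:
    """Sample-weighted mean of per-site model weights (the FedAvg aggregation step)."""
    total = sum(site_samples)
    dim = len(site_weights[0])
    return [
        sum(w[i] * n for w, n in zip(site_weights, site_samples)) / total
        for i in range(dim)
    ]


# Two hypothetical fabs share weight vectors, never raw sensor traces.
fab_a = [0.25, 1.0]   # trained on 100 local samples
fab_b = [0.5, 2.0]    # trained on 300 local samples

print(federated_average([fab_a, fab_b], [100, 300]))  # prints: [0.4375, 1.75]
```

Only the weight vectors and sample counts cross the site boundary in this scheme; the process data that produced them stays where it was collected.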

What Does the Total Cost of Ownership Look Like?

The financial case for edge AI strengthens at scale. Cloud AI costs for a typical 300mm fab — including compute, storage, and data transfer — range from $400,000 to $1.2 million annually, according to McKinsey’s 2025 analysis of semiconductor IT spending. These costs scale linearly with data volume and model complexity.

NeuroBox hardware, by contrast, represents a fixed capital expense with a 5-year operational lifecycle. The total cost of ownership over five years is typically 40-60% lower than equivalent cloud deployments, with the savings gap widening as data volumes grow. There are no ingress/egress charges, no reserved instance negotiations, and no surprise bills when a new process tool doubles sensor data output.
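The figures above imply a simple five-year comparison. The sketch below applies the quoted 40% and 60% savings to the quoted annual cloud range. It assumes flat annual cloud spend with no growth or discounting, which the source does not specify, so read the output as an order-of-magnitude guide.

```python
YEARS = 5
CLOUD_ANNUAL_USD = (400_000, 1_200_000)  # low and high annual cloud cost quoted above
SAVINGS_PERCENT = (40, 60)               # quoted five-year TCO reduction


def edge_tco(cloud_total_usd: int, savings_percent: int) -> int:
    """Edge TCO implied by a cloud total and a percentage saving (integer math)."""
    return cloud_total_usd * (100 - savings_percent) // 100


for annual in CLOUD_ANNUAL_USD:
    cloud_total = annual * YEARS
    for pct in SAVINGS_PERCENT:
        edge_total = edge_tco(cloud_total, pct)
        print(f"cloud ${cloud_total:,} over 5 years, {pct}% lower -> edge ${edge_total:,}")
# prints:
# cloud $2,000,000 over 5 years, 40% lower -> edge $1,200,000
# cloud $2,000,000 over 5 years, 60% lower -> edge $800,000
# cloud $6,000,000 over 5 years, 40% lower -> edge $3,600,000
# cloud $6,000,000 over 5 years, 60% lower -> edge $2,400,000
```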

Maintenance is also simplified. NeuroBox runs embedded Linux, and model updates are delivered through a secure channel isolated from the public internet. Fab IT teams manage the device with standard industrial protocols and do not need cloud-native DevOps expertise.

Why Is the Industry Moving Toward Edge-First AI?

The shift is already measurable. Gartner projected that by 2026, 65% of new AI deployments in semiconductor manufacturing would be edge-first or edge-only, up from 28% in 2023. The drivers are converging: tighter regulations, higher data volumes, lower latency requirements, and increasing sophistication of on-device AI accelerators.

MST has been building for this reality since its founding. Every product in the NeuroBox line — from the E5200 for equipment commissioning to the E3200 for production-line control — is designed as an edge-native system. The AI models run where the data lives, the decisions happen where the equipment operates, and sensitive process IP never leaves the building.

For semiconductor companies evaluating AI partners, the question is no longer whether to adopt AI. It is whether your AI architecture protects the data that defines your competitive advantage. Edge AI is not just a deployment option — it is the only architecture that aligns security, performance, compliance, and cost in a single solution.
