- Why Does Deployment Architecture Matter So Much in Semiconductor AI?

- What Are the Performance Implications of Each Architecture?

- How Do Security Requirements Shape the Decision?

- When Does Cloud AI Make Sense for Semiconductor Companies?

- What Does a Hybrid Architecture Look Like?

- How Should You Evaluate Vendors on Deployment Flexibility?

Key Takeaway

For real-time process control in semiconductor fabs, on-premise AI delivers sub-10ms inference latency and keeps sensitive recipe and yield data within the facility perimeter — both non-negotiable requirements for most fabs. Cloud AI excels at large-scale model training and cross-fab analytics. Platforms like NeuroBox deploy 100% on-premise for production inference while optionally supporting cloud-based model training for organizations that permit it, offering the security of edge deployment with the power of cloud-scale learning.

Why Does Deployment Architecture Matter So Much in Semiconductor AI?

In most industries, the cloud vs on-premise debate is primarily about cost and convenience. In semiconductor manufacturing, it becomes a question of data security, regulatory compliance, and physics — specifically, the physics of latency.

A Run-to-Run (R2R) control system must calculate recipe adjustments between wafer runs. In high-throughput environments, this means inference must complete in under 100 milliseconds. A cloud round-trip, even to a nearby region, typically adds 20-80ms of network latency before the model runs at all. That figure excludes congestion, DNS resolution, and TLS handshake overhead. At the upper end, the network alone consumes most of the budget, and any spike can push a control decision past the point where it is still useful. For real-time control loops, that latency and its unpredictability are unacceptable.
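To make the arithmetic concrete, the sketch below adds up the latency components of one control decision and compares the total against the 100ms budget. The 20-80ms round-trip and the 10ms upper bound for local inference come from this article. The 1ms ingestion figure, and the assumption that the cloud model computes as fast as the local one, are illustrative. The `LatencyPath` class is hypothetical.

```python
from dataclasses import dataclass

BUDGET_MS = 100.0  # R2R decision budget between wafer runs (from the text)


@dataclass
class LatencyPath:
    """One hypothetical route from sensor data to a control decision."""
    name: str
    ingestion_ms: float    # SECS/GEM data arriving at the inference host
    network_rtt_ms: float  # 0 for on-premise; 20-80 ms for cloud per the text
    inference_ms: float    # model compute time (assumed equal on both sides)

    def total_ms(self) -> float:
        return self.ingestion_ms + self.network_rtt_ms + self.inference_ms


paths = [
    LatencyPath("on-prem (worst case)", ingestion_ms=1.0, network_rtt_ms=0.0, inference_ms=10.0),
    LatencyPath("cloud (best case)", ingestion_ms=1.0, network_rtt_ms=20.0, inference_ms=10.0),
    LatencyPath("cloud (worst case)", ingestion_ms=1.0, network_rtt_ms=80.0, inference_ms=10.0),
]

for p in paths:
    headroom = BUDGET_MS - p.total_ms()
    print(f"{p.name}: {p.total_ms():.0f} ms, headroom {headroom:.0f} ms")
```

Even the worst cloud case technically fits, but it leaves only 9ms of headroom before any congestion, DNS, or TLS cost is counted. For this reason, tail behavior, not the average, decides whether cloud inference is viable for control.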

Then there is the data question. Semiconductor process recipes represent billions of dollars in R&D investment. Yield data reveals competitive positioning. Equipment sensor patterns can expose proprietary process innovations. According to a 2024 SEMI survey, 83% of semiconductor companies cite data security as the primary barrier to cloud AI adoption in manufacturing environments.

What Are the Performance Implications of Each Architecture?

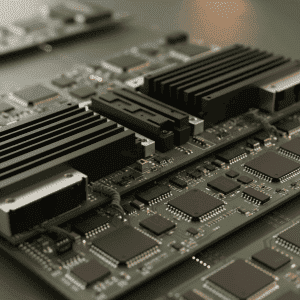

On-Premise Performance: Local inference on modern edge hardware (NVIDIA Jetson, Intel Xeon with OpenVINO, or dedicated FPGA accelerators) delivers inference latency of 1-10ms for typical process control models. Data ingestion from SECS/GEM streams happens over the local network with sub-millisecond latency. There is no dependency on internet connectivity, so the system continues operating even during network outages.

Cloud Performance: Cloud platforms offer virtually unlimited compute for model training, which matters for complex deep learning architectures that require GPU clusters. Inference latency, including the network round-trip, ranges from 30 to 150ms depending on region proximity and model complexity. Batch processing and historical analytics are cloud strengths, since storage and compute scale elastically.

The performance difference is clearest in real-time applications. Virtual metrology (VM) models predicting critical dimensions in situ, fault detection and classification (FDC) systems monitoring chamber health in real time, and R2R controllers adjusting parameters between runs all require the low latency and high reliability of on-premise deployment.

How Do Security Requirements Shape the Decision?

Semiconductor data security is governed by a combination of customer requirements, regulatory frameworks, and competitive strategy:

Customer Mandates: Major IDMs (Integrated Device Manufacturers) and foundries often contractually prohibit their suppliers and partners from transmitting process data outside the facility. Equipment OEMs serving these customers must demonstrate that AI systems process data locally.

Regional Regulations: China’s Data Security Law and Personal Information Protection Law impose strict requirements on cross-border data transfer. The EU’s GDPR and AI Act add further compliance layers. U.S. CHIPS Act funding includes provisions about data handling. For multinational fabs, on-premise deployment is the simplest path to multi-jurisdictional compliance.

IP Protection: Process recipes, equipment configurations, and yield data are core intellectual property. A single data breach could expose competitive advantages worth hundreds of millions of dollars. On-premise deployment reduces the attack surface by eliminating cloud API endpoints, internet-facing storage, and third-party cloud provider access.

NeuroBox addresses this directly by deploying all production systems on-premise with air-gap capability. The platform operates entirely within the fab’s network perimeter — no cloud connection required for inference, monitoring, or control actions. Model updates can be loaded via secure local transfer when air-gap operation is required.
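Loading model updates through secure local transfer usually includes an integrity check before the new weights are activated. The following is a minimal Python sketch. It assumes that the exporting side records a SHA-256 digest in a release manifest, and that this manifest reaches the fab through a trusted channel. The file name and the functions `sha256_of` and `verify_model_artifact` are hypothetical and are not part of NeuroBox.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash the file in chunks so large weight files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model_artifact(path: Path, expected_sha256: str) -> bool:
    """Return True only if the transferred file matches the digest recorded at export."""
    actual = sha256_of(path)
    # compare_digest performs a constant-time comparison of the two hex strings
    return hmac.compare_digest(actual, expected_sha256.lower())


# Usage with a stand-in weights file (hypothetical name and contents).
with tempfile.TemporaryDirectory() as tmp:
    weights = Path(tmp) / "vm_model.bin"
    weights.write_bytes(b"example weights")
    good = verify_model_artifact(weights, hashlib.sha256(b"example weights").hexdigest())
    bad = verify_model_artifact(weights, "0" * 64)

print(good, bad)  # True False
```

A matching digest only shows that the file was not altered relative to the manifest. If the manifest itself could be tampered with, it should also be signed, and the signature verified before any digest is trusted.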

When Does Cloud AI Make Sense for Semiconductor Companies?

Despite the strong case for on-premise production deployment, cloud AI has legitimate roles in semiconductor manufacturing:

Model Training: Training complex deep learning models (transformers, large CNNs) can require GPU clusters that are expensive to maintain on-premise. A fab might train models in a secure cloud environment using anonymized or synthetic data, then deploy the trained model weights on-premise. This hybrid approach captures cloud compute advantages without exposing production data.

Cross-Fab Analytics: Organizations with multiple fabs can aggregate anonymized performance metrics in the cloud to identify best practices, benchmark equipment performance, and train transfer learning models. This is particularly valuable for equipment OEMs who support tools across multiple customer sites.

Non-Critical Applications: Supply chain optimization, spare parts forecasting, energy management analytics, and other non-real-time applications often have relaxed latency and security requirements that make cloud deployment practical and cost-effective.

Development and Experimentation: Data science teams benefit from cloud-based notebook environments and elastic compute for model experimentation. Using cloud resources for R&D — with synthetic or anonymized data — accelerates innovation without compromising production security.
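For cross-fab analytics, the anonymization step happens before anything leaves the site. The sketch below assumes a site-held secret key that pseudonymizes tool IDs with HMAC-SHA256, plus a hypothetical per-run `uptime_pct` metric. It emits only per-tool averages and counts, and it drops recipe fields entirely. All names here are illustrative.

```python
import hashlib
import hmac
from collections import defaultdict
from statistics import mean

SITE_KEY = b"kept-on-premise-only"  # hypothetical secret; never uploaded


def pseudonymize(tool_id: str) -> str:
    """Keyed hash so the cloud side cannot recover real tool IDs without the site key."""
    return hmac.new(SITE_KEY, tool_id.encode(), hashlib.sha256).hexdigest()[:16]


def aggregate_for_upload(records):
    """Reduce raw per-run records to per-tool mean uptime and sample count.

    Every other field (recipes, sensor traces) is discarded rather than forwarded.
    """
    by_tool = defaultdict(list)
    for r in records:
        by_tool[r["tool_id"]].append(r["uptime_pct"])
    return [
        {"tool": pseudonymize(tool), "mean_uptime_pct": round(mean(vals), 2), "n": len(vals)}
        for tool, vals in sorted(by_tool.items())
    ]


local_records = [
    {"tool_id": "ETCH-07", "uptime_pct": 96.0, "recipe": "proprietary"},
    {"tool_id": "ETCH-07", "uptime_pct": 94.0, "recipe": "proprietary"},
    {"tool_id": "CVD-02", "uptime_pct": 91.5, "recipe": "proprietary"},
]
payload = aggregate_for_upload(local_records)
print(payload)
```

Keyed pseudonymization is weaker than true anonymization. A tool with very few runs, or one with an unusual metric value, may still be identifiable, so small groups may need to be suppressed before upload.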

What Does a Hybrid Architecture Look Like?

The most sophisticated semiconductor AI deployments use a hybrid architecture that captures the best of both worlds:

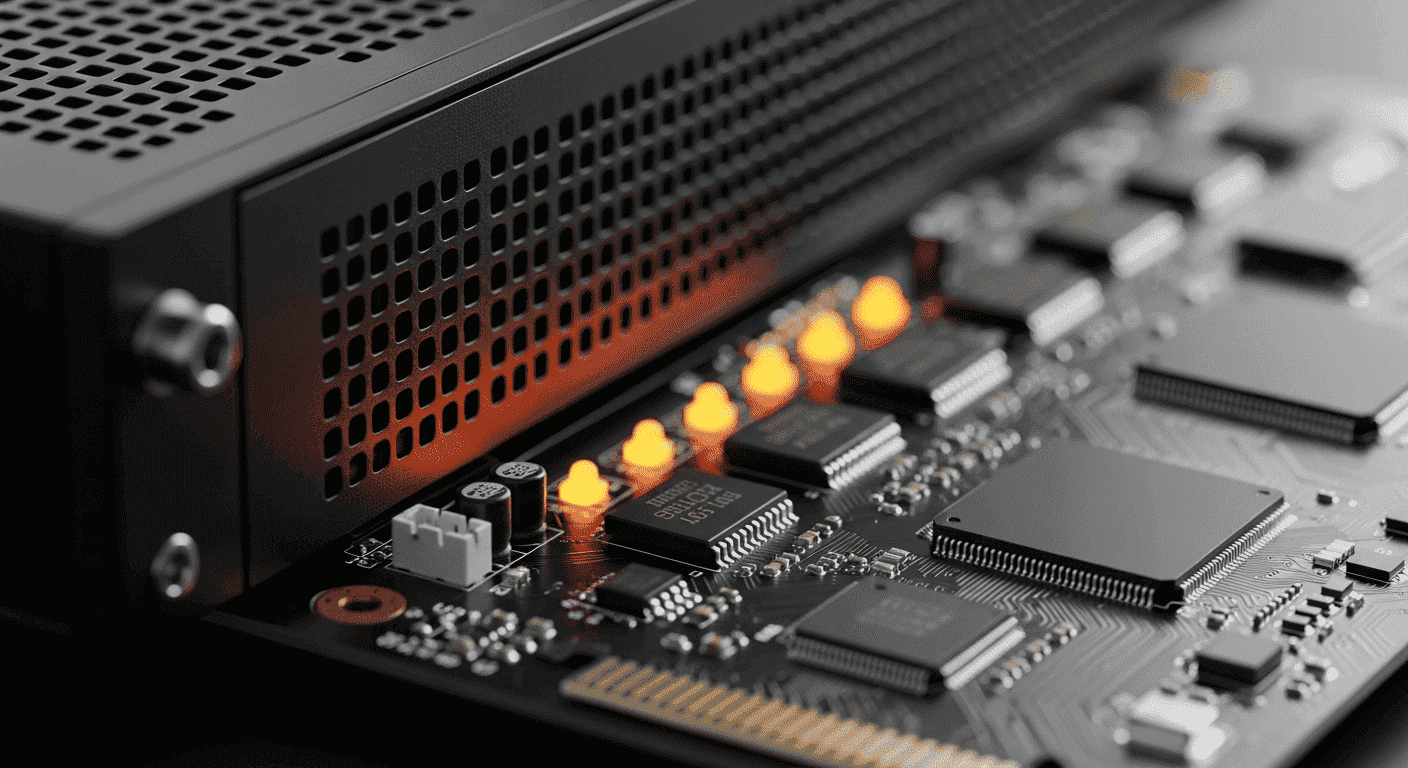

Edge Layer (On-Premise): Real-time inference, data ingestion, control actions, and local data storage. This is where NeuroBox E3200 operates — directly connected to equipment via SECS/GEM, running VM, R2R, and FDC models with sub-10ms latency.

Plant Layer (On-Premise or Private Cloud): Model management, performance monitoring, historical analytics, and model retraining on local GPU resources. The NeuroBox management console runs at this layer, providing dashboards, model lifecycle management, and integration with MES/ERP systems.

Enterprise Layer (Cloud or Private Cloud): Cross-fab benchmarking, supply chain analytics, executive dashboards, and non-sensitive reporting. Only aggregated, anonymized metrics flow to this layer.

This three-tier architecture ensures that sensitive process data never leaves the facility while still enabling enterprise-scale analytics and management. The key architectural principle is that data classification drives deployment tier — real-time production data stays on-premise, aggregated business metrics can go to the cloud.
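The principle that data classification drives deployment tier can be enforced as a simple policy check on every outbound transfer. This sketch is illustrative: the class and tier names mirror the three layers described above, and the policy table is hypothetical. It assumes the plant layer is hosted on-premise. If that layer runs in a private cloud outside the facility, process data should be capped at the edge tier instead.

```python
from enum import Enum, IntEnum


class Tier(IntEnum):
    # Ordered from closest to the tool to furthest from it.
    EDGE = 1        # on-premise, connected to equipment
    PLANT = 2       # assumed on-premise in this sketch
    ENTERPRISE = 3  # cloud or private cloud


class DataClass(Enum):
    PROCESS = "process"        # sensor traces, recipes, yield data
    AGGREGATED = "aggregated"  # anonymized, aggregated business metrics


# Hypothetical policy: the furthest tier each class of data may reach.
MAX_TIER = {
    DataClass.PROCESS: Tier.PLANT,
    DataClass.AGGREGATED: Tier.ENTERPRISE,
}


def may_send(data_class: DataClass, destination: Tier) -> bool:
    """Permit a transfer only if the destination is no further out than the class allows."""
    return destination <= MAX_TIER[data_class]


print(may_send(DataClass.PROCESS, Tier.PLANT))          # True
print(may_send(DataClass.PROCESS, Tier.ENTERPRISE))     # False
print(may_send(DataClass.AGGREGATED, Tier.ENTERPRISE))  # True
```

Because the lookup is default-deny (an unmapped class raises a `KeyError` instead of being forwarded), a new type of data cannot reach the cloud until someone explicitly classifies it.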

How Should You Evaluate Vendors on Deployment Flexibility?

When comparing semiconductor AI platforms, ask these deployment-specific questions:

Can the platform run fully on-premise with zero cloud dependency? Some vendors require cloud connectivity for licensing validation, model updates, or telemetry. NeuroBox operates independently on-premise with no mandatory cloud connection.

What is the inference latency in production configuration? Request benchmark data from actual fab deployments, not lab tests. Sub-10ms is the target for real-time control applications.

How are model updates handled in air-gapped environments? Secure USB transfer, one-way data diodes, or manual deployment procedures should be supported for the most security-sensitive installations.

What data leaves the facility, if any? Demand a complete data flow diagram showing every external connection. Any cloud telemetry, even anonymized, should be optional and auditable.
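Two of these questions, production latency and external data flows, can be checked against your own acceptance data instead of vendor claims alone. The sketch below makes several assumptions: latency samples are collected on the production line, observed outbound connections come from firewall or flow logs, and the allowlist is transcribed by hand from the vendor's data flow diagram. The sample values, host names, and helper functions are hypothetical.

```python
import math
from typing import NamedTuple


def percentile_nearest_rank(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("no latency samples")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # multiply first to avoid float drift
    return ordered[max(rank, 1) - 1]


class Connection(NamedTuple):
    dest_host: str
    dest_port: int


def undeclared(observed, declared):
    """Outbound connections seen on the network that the data flow diagram does not list."""
    return sorted(set(observed) - set(declared))


# Hypothetical acceptance data: 100 inference timings (ms) from the production line.
latencies_ms = [4.0] * 98 + [12.0] * 2
p50 = percentile_nearest_rank(latencies_ms, 50)
p99 = percentile_nearest_rank(latencies_ms, 99)
print(f"p50={p50} ms, p99={p99} ms, p99 within 10 ms target: {p99 <= 10.0}")

# Hypothetical allowlist from the vendor's diagram vs. connections seen in logs.
declared = [Connection("mes.fab.local", 443)]
observed = [Connection("mes.fab.local", 443), Connection("telemetry.vendor.example", 443)]
print(undeclared(observed, declared))
```

In this example the median looks excellent, but the tail misses the sub-10ms target. This is why percentile figures from actual deployments are worth requesting, not just averages.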

The deployment architecture decision will shape your AI capabilities for years. On-premise remains the foundation for semiconductor production AI, with cloud serving specific, well-controlled supplementary roles. Choose a platform that makes on-premise the default, not an afterthought.

Discover how MST deploys AI across semiconductor design, manufacturing, and beyond.