- Why Is Equipment Commissioning So Expensive in Semiconductor Manufacturing?

- What Is Transfer Learning and How Does It Apply to Equipment AI?

- How Does the Wafer Count Drop from 15 to 2?

- Why Is This a Strategic Asset for Equipment Makers?

- What Technical Challenges Must Be Overcome?

- How Does This Reshape the Equipment Business Model?

Key Takeaway

Transfer learning enables semiconductor equipment makers to reduce AI model commissioning from 15+ test wafers to as few as 2, cutting test-wafer consumption by roughly 85%. It also turns every deployment into accumulated AI intellectual property that makes subsequent installations faster and more accurate.

Why Is Equipment Commissioning So Expensive in Semiconductor Manufacturing?

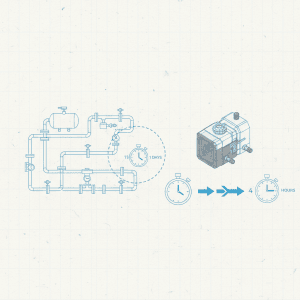

Every time a semiconductor equipment maker installs a new tool at a customer fab, the commissioning process begins. Engineers must run test wafers — often called qualification wafers or qual wafers — to verify the equipment meets process specifications. For AI-enabled equipment with machine learning models, this qualification extends to training or calibrating the onboard models to the specific fab environment.

The traditional approach requires 15-25 test wafers per process recipe to build a statistically valid training dataset. At advanced nodes, each 300mm test wafer costs $500-2,000 depending on the layer and process step. A single equipment commissioning consuming 20 wafers at $1,000 each represents $20,000 in material costs alone — before accounting for engineer time, fab downtime, and metrology resources.

For an equipment maker installing 50-100 tools per year globally, the aggregate commissioning cost reaches $1-2 million annually in test wafers alone. More critically, each commissioning consumes 3-5 days of productive fab time during which the tool generates no revenue for the customer, a cost that directly affects customer satisfaction and repeat orders.
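The test-wafer arithmetic above can be reproduced in a few lines. This is a minimal sketch: `commissioning_cost` is a hypothetical helper, and it counts only wafer material, not engineer time, fab downtime, or metrology.

```python
def commissioning_cost(wafers_per_tool: int, cost_per_wafer: float, tools_per_year: int) -> dict:
    """Test-wafer material cost per tool and per year (excludes labor, downtime, metrology)."""
    per_tool = wafers_per_tool * cost_per_wafer
    return {"per_tool": per_tool, "per_year": per_tool * tools_per_year}

# Scenario from the text: 20 wafers at $1,000 each, 50 or 100 installations per year
for tools in (50, 100):
    cost = commissioning_cost(20, 1_000, tools)
    print(f"{tools} tools: ${cost['per_tool']:,.0f} per tool, ${cost['per_year']:,.0f} per year")
```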

What Is Transfer Learning and How Does It Apply to Equipment AI?

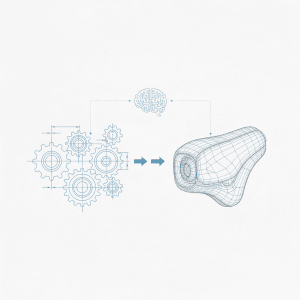

Transfer learning is a machine learning technique where a model trained on one task is adapted to a related but different task using minimal additional data. In computer vision, this is routine — models pre-trained on millions of images can be fine-tuned for a specific application with just hundreds of examples. In semiconductor equipment AI, the principle applies with even greater leverage.

Consider a Virtual Metrology model for a CVD tool. The fundamental physics — how temperature, pressure, and gas flows affect film deposition — is consistent across all installations of the same tool type. What varies between installations is the specific calibration: slightly different chamber geometry tolerances, local gas supply purity, facility environmental conditions, and incoming wafer characteristics.

Transfer learning exploits this structure. A base model is trained on comprehensive data from multiple previous installations (the source domain), learning the general process physics. When deploying to a new installation (the target domain), only the site-specific calibration layer needs updating — and this requires far fewer data points.

In practice, the transfer works in three stages:

Pre-Training: Train a deep model on aggregated data from 5-20 previous installations of the same equipment type. This model captures shared process dynamics, sensor correlations, and equipment behavior patterns. The pre-training happens once and improves with each new installation added to the training pool.

Feature Extraction: Freeze the lower layers of the pre-trained model (which capture general physics) and replace only the final prediction layers (which encode site-specific calibration).

Fine-Tuning: Train the new prediction layers using 2-5 test wafers from the new installation. Because the model already understands the general process, it only needs to learn the specific offset — a dramatically simpler task that requires 85-90% fewer data points.
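The three stages can be illustrated end to end on synthetic data. This is a toy sketch under stated assumptions, not MST's implementation: NumPy stands in for a deep-learning framework, a one-hidden-layer network stands in for the deep model, every simulated installation shares the same hypothetical physics (`W_PHYSICS`) but has its own gain and offset, and "2 wafers" is modeled as 2 × 9 = 18 measurement points. The new head is re-fit in closed form with a ridge penalty that pulls it toward the pre-trained head, which is one simple way (among several) to use the source model as a prior.

```python
import numpy as np

rng = np.random.default_rng(0)
N_FEATURES, N_HIDDEN = 4, 8                   # e.g. temperature, pressure, two gas flows
W_PHYSICS = rng.normal(size=(N_FEATURES, 3))  # hypothetical physics shared by all sites

def simulate_site(n_points, gain, offset):
    """Synthetic installation: shared physics plus a site-specific gain and offset."""
    X = rng.normal(size=(n_points, N_FEATURES))
    physics = np.tanh(X @ W_PHYSICS).sum(axis=1)
    return X, gain * physics + offset + 0.05 * rng.normal(size=n_points)

def hidden(X, W1, b1):
    """Lower layer: shared process features, frozen after pre-training."""
    return np.tanh(X @ W1 + b1)

# Stage 1, pre-training: pool data from 10 simulated source installations.
sources = [simulate_site(400, rng.uniform(0.8, 1.2), rng.uniform(-0.3, 0.3)) for _ in range(10)]
X_src = np.vstack([X for X, _ in sources])
y_src = np.concatenate([y for _, y in sources])
W1 = rng.normal(scale=0.5, size=(N_FEATURES, N_HIDDEN))
b1 = np.zeros(N_HIDDEN)
w2 = rng.normal(scale=0.5, size=N_HIDDEN)
b2 = 0.0
lr, n = 0.05, len(y_src)
for _ in range(3000):                          # full-batch gradient descent on squared error
    H = hidden(X_src, W1, b1)
    err = H @ w2 + b2 - y_src
    g_H = np.outer(err, w2) * (1 - H ** 2)     # backpropagate through tanh (uses old w2)
    w2 -= lr * H.T @ err / n
    b2 -= lr * err.mean()
    W1 -= lr * X_src.T @ g_H / n
    b1 -= lr * g_H.mean(axis=0)

# Stages 2-3: freeze W1, b1 and re-fit only the head (w2, b2) for the new site.
X_new, y_new = simulate_site(18, gain=1.5, offset=0.8)     # "2 wafers x 9 sites"
X_test, y_test = simulate_site(500, gain=1.5, offset=0.8)  # held-out data, same site

def design(X):
    """Frozen hidden features plus a bias column."""
    return np.hstack([hidden(X, W1, b1), np.ones((len(X), 1))])

theta_src = np.append(w2, b2)   # pre-trained head, used as the prior
lam = 1.0                       # how strongly the new head is pulled toward the prior
A = design(X_new)
theta_new = np.linalg.solve(A.T @ A + lam * np.eye(A.shape[1]), A.T @ y_new + lam * theta_src)

mse_pretrained = np.mean((design(X_test) @ theta_src - y_test) ** 2)
mse_finetuned = np.mean((design(X_test) @ theta_new - y_test) ** 2)
print(f"pre-trained head MSE: {mse_pretrained:.3f}, fine-tuned head MSE: {mse_finetuned:.3f}")
```

Only 9 head parameters (8 weights plus a bias) are re-estimated, so 18 points are enough to absorb the new site's gain and offset, which the unadapted pre-trained head cannot do.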

How Does the Wafer Count Drop from 15 to 2?

The mathematics behind the reduction is straightforward. A from-scratch model for a CVD Virtual Metrology application typically has 50-200 learnable parameters mapping sensor features to metrology predictions. Training this model from zero requires enough data points to avoid overfitting — the statistical rule of thumb is 5-10 observations per parameter, yielding 250-2,000 required data points, which translates to 15-25 measured wafers (each providing 10-100 data points depending on measurement density).
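The rule of thumb converts to wafer counts by dividing required points by points measured per wafer. A minimal sketch: `wafers_needed` is a hypothetical helper, and the densities of 17 and 80 points per wafer are illustrative values chosen within the 10-100 range quoted above.

```python
import math

def wafers_needed(n_params: int, obs_per_param: int, points_per_wafer: int) -> int:
    """Wafers required to collect n_params * obs_per_param measurement points (rounded up)."""
    required_points = n_params * obs_per_param
    return math.ceil(required_points / points_per_wafer)

# From scratch, low end: 50 parameters x 5 obs = 250 points at 17 points per wafer
print(wafers_needed(50, 5, 17))    # 15
# From scratch, high end: 200 parameters x 10 obs = 2,000 points at 80 points per wafer
print(wafers_needed(200, 10, 80))  # 25
```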

With transfer learning, the pre-trained model already has correct values for 90-95% of its parameters. Only the final calibration layer — typically 5-15 parameters — needs updating. This requires only 25-150 new data points, achievable with 2-5 wafers. The statistical validity is maintained because the prior knowledge from previous installations acts as a strong regularizer, preventing overfitting even with minimal new data.
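One standard way to make "prior knowledge acts as a regularizer" concrete is maximum a posteriori estimation with a Gaussian prior centred on the source-domain calibration parameters. That is equivalent to ridge regression shrunk toward the pre-trained values instead of toward zero. This is an illustrative formulation, not necessarily the one used in MST NeuroBox. Here H is the n × p matrix of frozen features for the n new measurement points, y the measured values, θ_src the pre-trained calibration parameters (p = 5-15), and λ > 0 the prior strength:

```latex
\hat{\theta}
  = \arg\min_{\theta}\; \lVert y - H\theta \rVert_2^2 + \lambda \lVert \theta - \theta_{\text{src}} \rVert_2^2
  = \left(H^\top H + \lambda I\right)^{-1}\left(H^\top y + \lambda\,\theta_{\text{src}}\right),
\qquad n = p \times (5\text{ to }10) = 25\text{ to }150 \text{ for } p = 5\text{ to }15 .
```

Setting the gradient to zero gives the closed form. As λ → 0 it reduces to ordinary least squares on the new data alone; as λ grows the estimate stays close to θ_src, which is what keeps a 25-150 point fit from overfitting.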

Real-world validation across MST NeuroBox deployments shows consistent results: models fine-tuned with just 2-3 wafers achieve prediction accuracy within 5% of models trained from scratch with 15-20 wafers. For non-critical applications, a single wafer can provide sufficient calibration, though 2-3 wafers are recommended for robust confidence intervals.

Why Is This a Strategic Asset for Equipment Makers?

Transfer learning creates a compounding competitive advantage that many traditional equipment makers have yet to exploit:

AI Asset Accumulation: Every installation adds data to the pre-training pool. After 10 installations, the base model is good. After 50, it is excellent. After 200, it captures virtually every operating condition the equipment will encounter. This is a moat — competitors starting from scratch cannot replicate 200 installations worth of learned knowledge overnight.

Faster Time-to-Production: Reducing commissioning from 5 days to 1 day is a powerful sales argument. Fab managers care deeply about tool utilization, and every day saved in commissioning is a day of productive output gained. At a tool revenue of $50,000 per day, saving 4 days of commissioning is worth $200,000 to the customer — a tangible, quantifiable value proposition.
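The customer-value figure is simple arithmetic. A hypothetical helper makes the assumption explicit: the value of a productive tool-day is treated as constant across the saved days.

```python
def commissioning_value(days_before: float, days_after: float, output_per_day: float) -> float:
    """Customer value of shorter commissioning: days saved x value of one productive tool-day."""
    return (days_before - days_after) * output_per_day

print(f"${commissioning_value(5, 1, 50_000):,.0f}")  # $200,000
```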

Service Revenue Model: The pre-trained model library becomes a licensable asset. Equipment makers can offer tiered AI packages: basic (no transfer learning, longer commissioning), standard (transfer learning from 20-installation base), premium (transfer learning from 100+ installations plus continuous model updates). This creates recurring software revenue on top of hardware sales.

Customer Lock-In: Once a fab has 10-20 tools running transfer-learned models from one vendor, switching to a competitor means losing all accumulated process knowledge. The models become part of the fab's operational infrastructure, creating stickiness that hardware specifications alone cannot achieve.

What Technical Challenges Must Be Overcome?

Transfer learning in semiconductor manufacturing is not without complications:

Domain Shift: When the target installation operates under conditions significantly different from any source installation (a new process chemistry, an extreme operating regime, or a novel substrate), the pre-trained model's features may not transfer effectively. Detecting domain shift automatically and falling back to from-scratch training when needed is essential for production reliability.
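Automatic domain-shift detection can be approached in several ways. One simple option, sketched below, scores each new sensor-feature vector by its Mahalanobis distance from the pooled source data and falls back to from-scratch training when too many points fall outside the source envelope. The 99th-percentile threshold, the 20% outlier limit, the synthetic data, and the function names are illustrative assumptions.

```python
import numpy as np

def mahalanobis(X, mean, cov_inv):
    """Mahalanobis distance of each row of X from the source mean."""
    diff = X - mean
    return np.sqrt(np.einsum("ij,jk,ik->i", diff, cov_inv, diff))

def fit_source_profile(X_src, quantile=0.99):
    """Summarize source-domain sensor features and derive a distance threshold."""
    mean = X_src.mean(axis=0)
    cov_inv = np.linalg.inv(np.cov(X_src, rowvar=False))
    threshold = np.quantile(mahalanobis(X_src, mean, cov_inv), quantile)
    return mean, cov_inv, threshold

def choose_strategy(X_target, profile, max_outlier_frac=0.2):
    """Transfer if most target points resemble source data, otherwise train from scratch."""
    mean, cov_inv, threshold = profile
    outlier_frac = float(np.mean(mahalanobis(X_target, mean, cov_inv) > threshold))
    return ("transfer" if outlier_frac <= max_outlier_frac else "from_scratch"), outlier_frac

rng = np.random.default_rng(2)
profile = fit_source_profile(rng.normal(size=(2000, 4)))
print(choose_strategy(rng.normal(size=(18, 4)), profile))           # familiar regime
print(choose_strategy(rng.normal(loc=3.0, size=(18, 4)), profile))  # shifted regime
```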

Data Privacy Across Customers: The pre-training pool contains data from multiple customer fabs. Even though the model weights do not directly expose raw data, customers are justifiably cautious. Federated learning approaches — where each installation contributes model updates rather than raw data — address this concern while preserving transfer learning benefits.
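The federated pattern can be sketched with federated averaging (FedAvg), one widely used scheme. Each installation runs a few local training steps starting from the shared model and returns only its updated weights, which the vendor averages, weighted by sample count. The linear model, synthetic data, and helper names below are illustrative assumptions.

```python
import numpy as np

def local_update(global_w, X, y, lr=0.1, epochs=50):
    """Runs on site: gradient descent on local data; only the weights leave the fab."""
    w = global_w.copy()
    for _ in range(epochs):
        w -= lr * X.T @ (X @ w - y) / len(y)
    return w

def federated_average(site_weights, site_counts):
    """Vendor side: average parameter vectors weighted by each site's sample count."""
    counts = np.asarray(site_counts, dtype=float)
    stacked = np.stack([np.asarray(w, dtype=float) for w in site_weights])
    return (counts[:, None] * stacked).sum(axis=0) / counts.sum()

# Synthetic example: three fabs whose raw data never leaves the site
rng = np.random.default_rng(1)
true_w = np.array([0.8, -0.5, 0.3, 1.2])
sites = []
for n in (60, 100, 140):
    X = rng.normal(size=(n, 4))
    sites.append((X, X @ true_w + 0.1 * rng.normal(size=n)))

global_w = np.zeros(4)
for _ in range(10):  # communication rounds
    updates = [local_update(global_w, X, y) for X, y in sites]
    global_w = federated_average(updates, [len(y) for _, y in sites])
print(np.round(global_w, 2))
```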

Model Versioning: As the pre-trained base model improves with new installations, existing deployments must decide whether to update. Updating brings accuracy gains but requires re-validation. A robust model lifecycle management system — tracking versions, compatibility, and upgrade paths — is necessary infrastructure.
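A lifecycle registry can start small and still capture the key rule: a deployment may move only to a newer base model with a compatible feature schema, and it must be re-validated before returning to production. The data model and the schema-matching compatibility rule below are hypothetical illustrations.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BaseModelVersion:
    version: tuple               # (major, minor)
    n_source_installations: int
    feature_schema: str          # hypothetical ID of the sensor-feature layout the model expects

@dataclass
class Deployment:
    tool_id: str
    base_version: tuple
    feature_schema: str
    validated: bool = True

def upgrade_candidates(dep, registry):
    """Newer base models whose feature schema matches the deployment, oldest first."""
    return [m.version for m in sorted(registry, key=lambda m: m.version)
            if m.version > dep.base_version and m.feature_schema == dep.feature_schema]

def apply_upgrade(dep, new_version):
    """Switch the base model; the deployment is blocked until re-validated."""
    dep.base_version = new_version
    dep.validated = False

registry = [
    BaseModelVersion((1, 0), 20, "cvd-v1"),
    BaseModelVersion((1, 1), 50, "cvd-v1"),
    BaseModelVersion((2, 0), 120, "cvd-v2"),
]
tool = Deployment("tool-A", (1, 0), "cvd-v1")
print(upgrade_candidates(tool, registry))  # [(1, 1)]
apply_upgrade(tool, (1, 1))
print(tool.validated)                      # False
```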

Process Changes: When a customer changes a recipe or process chemistry, the fine-tuned model may need recalibration. Ideally, the system detects the change automatically (via sensor signature shifts) and requests minimal additional wafers for re-tuning rather than full retraining.
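Sensor-signature shift detection can be sketched with a two-sided tabular CUSUM chart, a standard statistical process control technique, applied to one standardized sensor summary per run. The reference value k = 0.5 and decision interval h = 5 (in standard deviations) are common textbook defaults, and the 2-wafer re-tuning request is an illustrative policy, not a documented product behavior.

```python
def cusum_alarm(values, target_mean, target_std, k=0.5, h=5.0):
    """Two-sided tabular CUSUM on standardized values; index of first alarm, or None."""
    s_hi = s_lo = 0.0
    for i, x in enumerate(values):
        z = (x - target_mean) / target_std
        s_hi = max(0.0, s_hi + z - k)   # accumulates upward drift
        s_lo = max(0.0, s_lo - z - k)   # accumulates downward drift
        if s_hi > h or s_lo > h:
            return i
    return None

def recalibration_plan(values, target_mean, target_std, wafers_if_shift=2):
    """Request a small fine-tuning batch instead of full retraining when a shift is detected."""
    idx = cusum_alarm(values, target_mean, target_std)
    if idx is None:
        return {"action": "none", "wafers": 0}
    return {"action": "fine_tune", "wafers": wafers_if_shift, "detected_at": idx}

# A sensor summary that steps up by 2 standard deviations after run 10
print(recalibration_plan([0.0] * 10 + [2.0] * 10, target_mean=0.0, target_std=1.0))
```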

How Does This Reshape the Equipment Business Model?

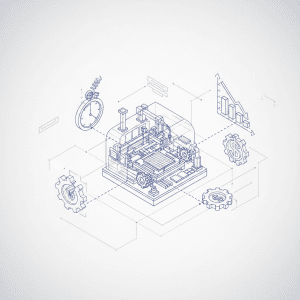

Transfer learning is a catalyst for the broader transformation of semiconductor equipment from hardware products to intelligent platforms:

Hardware + AI Software Bundling: Equipment makers sell the tool with embedded AI that improves over time. Initial pricing includes the hardware and base AI capability; annual subscriptions cover model updates, expanded features, and access to the growing pre-training library.

Performance Guarantees: With transfer learning, equipment makers can confidently guarantee process performance metrics at installation — backed by data from hundreds of previous deployments. This shifts the commercial conversation from equipment specifications to outcome guarantees.

Predictive Fleet Management: The same transfer learning infrastructure enables equipment makers to monitor their entire installed base, predict maintenance needs, and optimize spare parts inventory. Each tool becomes a node in an intelligent network, with insights flowing bidirectionally between the vendor and the field.

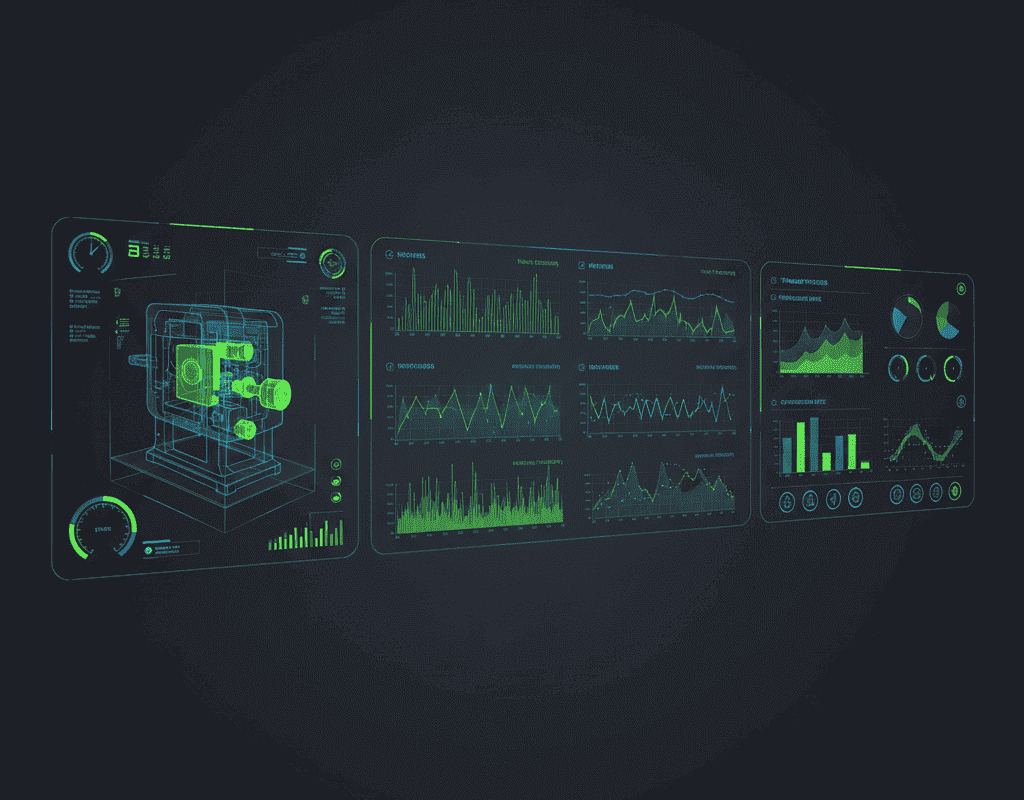

MST NeuroBox E5200 implements this vision with built-in transfer learning that draws on MST's accumulated deployment data across Asia. Each new installation benefits from every previous one, creating a flywheel in which the product gets better with every sale, a dynamic that hardware-only competitors cannot replicate.

For equipment makers evaluating their AI strategy, transfer learning is not optional. It is the mechanism that transforms isolated AI experiments into a scalable, defensible business asset. The companies that begin accumulating this AI intellectual property today will have an insurmountable advantage in 3-5 years, when every fab expects intelligent, self-commissioning equipment as the baseline.
