- →Why Do Generic Design Templates Fail in Equipment Manufacturing?

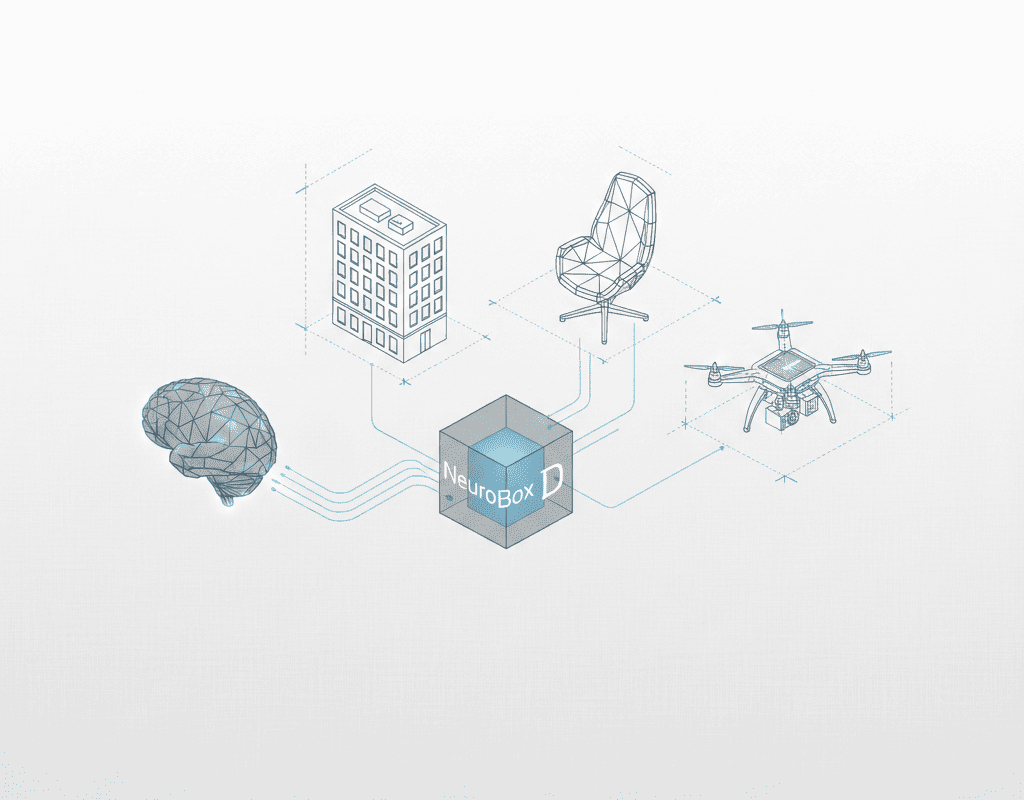

- →How Does NeuroBox D Extract Design Patterns From Historical Assemblies?

- →What Is the Knowledge Graph Architecture Behind NeuroBox D?

- →How Does the AI Handle Conflicting Design Rules and Edge Cases?

- →How Does Continuous Learning Improve Design Quality Over Time?

Key Takeaway

NeuroBox D does not apply generic design templates. Instead, it learns each company's unique design standards, component preferences, and layout patterns from its historical assembly data. By training on 50-200 existing SolidWorks assemblies, the AI internalizes the implicit design rules that experienced engineers follow instinctively, then applies them consistently to every new design. This company-specific learning is what separates NeuroBox D from traditional parametric CAD automation.

Why Do Generic Design Templates Fail in Equipment Manufacturing?

The semiconductor equipment industry has experimented with design automation for decades. Parametric CAD templates, design tables in SolidWorks, and macro-based automation tools have all been deployed with varying degrees of success. Yet most equipment companies still rely heavily on manual 3D modeling. Why?

The answer lies in the gap between generic and company-specific design knowledge. Every equipment OEM develops its own design language over years of engineering practice. This language includes:

- Preferred component brands and specific part numbers for each application

- Standard frame dimensions and mounting configurations

- Tube routing conventions (which side, what elevation, how bends are oriented)

- Labeling and color-coding standards

- Clearance rules that exceed industry minimums based on field service experience

- Assembly structure conventions (how the SolidWorks feature tree is organized)

A 2024 survey by ASME found that 73% of mechanical design engineers reported that their company's design standards include significant undocumented knowledge: rules that exist only in the heads of senior engineers. Traditional automation tools cannot capture this knowledge because they rely on explicitly programmed rules. If the rule is not documented, it cannot be automated.

NeuroBox D takes a fundamentally different approach. Instead of requiring engineers to document every rule, it learns the rules from existing designs.

How Does NeuroBox D Extract Design Patterns From Historical Assemblies?

The learning process begins with data ingestion. Engineers provide NeuroBox D with a set of historical SolidWorks assemblies, typically 50-200 assemblies that represent the company's current design practices. Because these assemblies have been manufactured, installed, and validated in the field, they represent the ground truth of what good design looks like at that company.

NeuroBox D's learning engine analyzes these assemblies across multiple dimensions:

Component Selection Patterns. The AI catalogs which specific components are used for each function. For example, it learns that this company always uses Swagelok 6LVV-DPHFR4-P-C series diaphragm valves for high-purity gas isolation, Parker Veriflo R26 series regulators for pressure reduction above 2000 psi, and Mott HyPulse sintered metal filters for 0.003 micron particulate filtration. These preferences are captured as conditional probability distributions — given a specific function, pressure rating, gas service, and connection size, the system predicts the most likely component with 92-96% accuracy after training on 100+ assemblies.
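As a rough illustration of a conditional preference learned from history, the sketch below counts how often each part was chosen in a given design context and reports the most frequent one with its empirical probability. This is only a frequency-table stand-in, not NeuroBox D's actual model; the records, the context fields, and the part labels (`valve_A`, `regulator_X`) are hypothetical placeholders.

```python
from collections import Counter, defaultdict

# Hypothetical training records: (function, gas service, connection size) -> part used.
# The part labels are placeholders, not real catalog numbers.
history = [
    (("gas isolation", "N2", "1/4 in"), "valve_A"),
    (("gas isolation", "N2", "1/4 in"), "valve_A"),
    (("gas isolation", "N2", "1/4 in"), "valve_B"),
    (("pressure regulation", "N2", "1/4 in"), "regulator_X"),
]

def fit_preferences(records):
    """Count how often each part was chosen in each design context."""
    counts = defaultdict(Counter)
    for context, part in records:
        counts[context][part] += 1
    return counts

def predict(counts, context):
    """Return (most frequent part, empirical P(part | context)), or None if unseen."""
    seen = counts.get(context)
    if not seen:
        return None
    part, n = seen.most_common(1)[0]
    return part, n / sum(seen.values())

prefs = fit_preferences(history)
best_part, prob = predict(prefs, ("gas isolation", "N2", "1/4 in"))
```

A context that never appears in the history returns `None`, which is one simple way to signal that the request falls outside the training data.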

Spatial Layout Conventions. The AI maps the 3D positions of components in each assembly and identifies recurring spatial relationships. It learns that this company positions regulators at 1200 mm from the floor, places MFCs at the same elevation in horizontal rows, routes primary process tubing on the left side of the panel, and maintains 75 mm minimum spacing between parallel gas lines (rather than the 50 mm industry minimum). These patterns are encoded as spatial probability fields: for any given component type, the system can predict where it should be placed in 3D space relative to the frame and adjacent components.
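A heavily simplified, one-dimensional version of this idea can be sketched by fitting a mean and spread of mounting elevation per component type and scoring how far a proposed placement deviates from it. The sample elevations and the function names below are assumptions made for illustration; a real spatial model would work in 3D and relative to neighboring components.

```python
from statistics import mean, pstdev

# Hypothetical elevations (mm above floor) observed in past assemblies.
observed_mm = {
    "regulator": [1195.0, 1200.0, 1205.0, 1200.0],
    "mfc": [900.0, 900.0, 900.0],
}

def fit_elevations(samples):
    """Summarize each component type as (mean elevation, population std dev)."""
    return {kind: (mean(values), pstdev(values)) for kind, values in samples.items()}

def deviation_score(model, kind, elevation_mm):
    """Number of standard deviations between a proposed elevation and the learned mean."""
    mu, sigma = model[kind]
    offset = abs(elevation_mm - mu)
    if sigma == 0:
        # No variation in the history: any offset at all is unusual.
        return 0.0 if offset == 0 else float("inf")
    return offset / sigma

model = fit_elevations(observed_mm)
score = deviation_score(model, "regulator", 1250.0)
```

A large score suggests the proposed position departs from the company's habit and may deserve a second look.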

Routing Conventions. Tube routing follows company-specific conventions that are rarely documented. NeuroBox D learns these by analyzing the actual tube paths in historical assemblies. It discovers that this company always routes tubes with a minimum 10-degree downward slope toward the process chamber (for drainage), uses 90-degree bends at specific grid points (for visual consistency), and maintains a 25 mm offset between parallel tubes in horizontal runs. The system captures over 40 routing parameters from the training data.
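One of the routing parameters mentioned above, the minimum downward slope, can be checked with basic geometry. The sketch below assumes straight segments given as `(x, y, z)` points in millimetres with `z` pointing up; the function names are hypothetical, and the 10-degree value is taken from the example in the text.

```python
import math

MIN_SLOPE_DEG = 10.0  # value from the routing example in the text

def slope_deg(start, end):
    """Downward slope of a straight segment in degrees (positive means falling)."""
    run = math.hypot(end[0] - start[0], end[1] - start[1])  # horizontal distance
    drop = start[2] - end[2]                                # vertical fall
    return math.degrees(math.atan2(drop, run))

def meets_slope_rule(start, end, min_deg=MIN_SLOPE_DEG):
    """True if the segment falls at least min_deg toward its end point."""
    return slope_deg(start, end) >= min_deg

steep_enough = meets_slope_rule((0.0, 0.0, 100.0), (100.0, 0.0, 80.0))
too_shallow = meets_slope_rule((0.0, 0.0, 100.0), (100.0, 0.0, 90.0))
```

The same pattern extends to other measurable conventions, such as the offset between parallel runs.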

Assembly Structure Standards. Even the organization of the SolidWorks feature tree follows company conventions. NeuroBox D learns how assemblies are structured: subassembly groupings, naming conventions, configuration management approaches, and display state definitions. The output assemblies match the company's CAD standards without manual restructuring.

What Is the Knowledge Graph Architecture Behind NeuroBox D?

The learned design knowledge is stored in a proprietary knowledge graph — a structured representation that connects components, functions, spatial relationships, and design rules in a queryable network.

The knowledge graph contains four primary entity types:

Function Nodes: Abstract functions like “gas isolation,” “pressure regulation,” “flow measurement,” and “particulate filtration.” Each function node links to the components that can fulfill it, ranked by the company's historical preference.

Component Nodes: Specific parts with full technical specifications, 3D models, and connection interfaces. Each component node links to the functions it can serve, the components it commonly connects to, and the spatial positions it typically occupies.

Constraint Nodes: Design rules extracted from the training data — clearance requirements, orientation rules, compatibility restrictions, and performance targets. Constraints are classified as hard constraints (physically required, like minimum bend radius) or soft constraints (preferred but negotiable, like standard mounting heights).

Context Nodes: Environmental factors that influence design decisions — gas type, pressure class, purity requirement, panel size, and installation location. Context nodes enable the AI to generate designs appropriate for different applications using the same underlying knowledge base.

The graph currently contains over 2.3 million relationships across its standard knowledge base, with each customer deployment adding 50,000-200,000 company-specific relationships during the learning phase.
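The four entity types and their links can be pictured with a small in-memory graph. The sketch below is an assumption-laden illustration, not the proprietary schema: the class names, the relation labels (`fulfilled_by`, `subject_to`), and the weights are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass(frozen=True)
class Node:
    kind: str  # "function", "component", "constraint", or "context"
    name: str

@dataclass
class KnowledgeGraph:
    # Maps (source node, relation label) to a list of (target node, weight).
    edges: dict = field(default_factory=dict)

    def link(self, source, relation, target, weight=1.0):
        self.edges.setdefault((source, relation), []).append((target, weight))

    def ranked(self, source, relation):
        """Targets for a relation, strongest preference first."""
        found = self.edges.get((source, relation), [])
        return sorted(found, key=lambda edge: edge[1], reverse=True)

kg = KnowledgeGraph()
isolation = Node("function", "gas isolation")
valve_a = Node("component", "valve_A")  # placeholder part label
valve_b = Node("component", "valve_B")  # placeholder part label
kg.link(isolation, "fulfilled_by", valve_b, weight=0.1)
kg.link(isolation, "fulfilled_by", valve_a, weight=0.9)
kg.link(valve_a, "subject_to", Node("constraint", "minimum bend radius"))

preferred = kg.ranked(isolation, "fulfilled_by")
```

Querying a function node returns its candidate components in preference order, which mirrors the ranking described for function nodes.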

How Does the AI Handle Conflicting Design Rules and Edge Cases?

Real-world design involves trade-offs. A layout that minimizes tube length may compromise service accessibility. A component placement that optimizes flow performance may violate thermal clearance requirements. Experienced engineers navigate these conflicts intuitively — a skill developed over years of practice.

NeuroBox D handles design conflicts through a weighted constraint satisfaction framework. Each constraint carries a priority weight learned from the training data. When constraints conflict, the system resolves the conflict according to the priority hierarchy that the company's own designs implicitly define.

For example, if the training data shows that the company consistently prioritizes service accessibility over tube length minimization (evidenced by longer tube runs that provide better access), NeuroBox D learns this preference and applies it to new designs. The priority weights are transparent and adjustable: engineering managers can review the learned priorities and modify them if the company's standards have changed.
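A minimal sketch of weighted constraint resolution follows: hard constraints filter out infeasible candidates, then soft constraints contribute weighted penalties and the lowest total wins. The candidate layouts, thresholds, and weights are invented for illustration; only the idea that accessibility outweighs tube length comes from the example above.

```python
def choose_layout(candidates, hard, soft):
    """Pick the feasible candidate with the lowest weighted soft-constraint penalty."""
    feasible = [c for c in candidates if all(check(c) for check in hard)]
    if not feasible:
        return None
    return min(feasible, key=lambda c: sum(w * penalty(c) for w, penalty in soft))

# Hypothetical candidate layouts for the same panel.
candidates = [
    {"name": "A", "tube_length_mm": 800, "access_mm": 100, "bend_radius_mm": 25},
    {"name": "B", "tube_length_mm": 1100, "access_mm": 160, "bend_radius_mm": 25},
    {"name": "C", "tube_length_mm": 600, "access_mm": 170, "bend_radius_mm": 10},
]

# Hard constraint: physically required minimum bend radius (assumed 20 mm).
hard = [lambda c: c["bend_radius_mm"] >= 20]

# Soft constraints as (weight, penalty). Accessibility carries the larger weight.
soft = [
    (3.0, lambda c: max(0, 150 - c["access_mm"]) / 150),  # shortfall below 150 mm access
    (1.0, lambda c: c["tube_length_mm"] / 1000),          # longer tube costs more
]

best = choose_layout(candidates, hard, soft)
```

Layout C is rejected outright by the hard constraint, and B beats A despite its longer tube run because the accessibility weight dominates. Changing the weights changes the winner, which is the lever the text describes managers adjusting.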

Edge cases (unusual component combinations, non-standard frame sizes, or novel gas services) are handled through a confidence scoring mechanism. When the AI encounters a design requirement that falls outside its training distribution, it assigns the affected area a low confidence score. Any area scoring below the company's acceptance threshold (typically 85%) is flagged for review and decision-making by a human engineer, while the remainder of the design proceeds automatically.

In practice, early deployments show that 85-95% of a typical design is generated with high confidence, leaving 5-15% for human review. This ratio improves as more designs are completed and fed back into the training data.
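The review split can be sketched as a simple threshold test over per-region confidence scores. The region names and scores below are hypothetical; the 0.85 default reflects the typical threshold quoted above.

```python
def triage(region_scores, threshold=0.85):
    """Split design regions into auto-accepted and flagged-for-review lists."""
    auto = sorted(r for r, s in region_scores.items() if s >= threshold)
    review = sorted(r for r, s in region_scores.items() if s < threshold)
    return auto, review

# Hypothetical confidence scores for regions of one generated design.
scores = {
    "inlet manifold": 0.97,
    "regulator bank": 0.91,
    "purge line": 0.88,
    "novel gas stick": 0.62,
}

auto, review = triage(scores)
auto_share = len(auto) / len(scores)
```

Here three of four regions proceed automatically and one is routed to an engineer.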

How Does Continuous Learning Improve Design Quality Over Time?

NeuroBox D's learning process does not stop after initial deployment. Every design that the system generates and an engineer approves becomes new training data. Every modification that an engineer makes to an AI-generated design is captured as a correction signal: evidence that the current model deviated from the engineer's intent.

This feedback loop operates at three levels:

Level 1: Immediate Corrections. When an engineer moves a component, changes a routing path, or swaps a part in an AI-generated design, the system records the delta between the original and modified versions. These corrections are weighted heavily in the next model update because they represent direct expert feedback.

Level 2: Design Review Outcomes. When a design passes formal review without changes, this is a strong positive signal. When a design requires rework, the specific changes requested are captured and analyzed. Over 6-12 months, these signals refine the model's understanding of what constitutes an approvable design at this company.

Level 3: Field Feedback Integration. When field service teams report issues with specific designs — difficult-to-service component placements, tube routing that interferes with adjacent systems, or clearance issues discovered during installation — this feedback is mapped back to the design parameters that caused the problem. NeuroBox D then adjusts its constraint weights to avoid similar issues in future designs.
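The correction signal in Level 1 can be pictured as a per-component delta between the generated and the engineer-modified placement, which then nudges a learned target position. The sketch below is an assumption, not the product's update rule: the update rates per signal type are invented, with direct corrections given the largest rate to reflect that they are weighted heavily.

```python
# Assumed update rates per feedback level; direct corrections move the model most.
SIGNAL_RATE = {"correction": 0.5, "review_rework": 0.3, "field_report": 0.2}

def placement_delta(generated, modified):
    """Per-component (dx, dy, dz) for every component the engineer moved."""
    return {
        name: tuple(m - g for g, m in zip(generated[name], modified[name]))
        for name in generated
        if name in modified and generated[name] != modified[name]
    }

def update_target(current, observed, signal):
    """Move a learned target position part of the way toward the observed one."""
    rate = SIGNAL_RATE[signal]
    return tuple(c + rate * (o - c) for c, o in zip(current, observed))

# Hypothetical positions in mm: the engineer raised one regulator by 50 mm.
generated = {"reg1": (0.0, 0.0, 1200.0), "mfc1": (100.0, 0.0, 900.0)}
modified = {"reg1": (0.0, 0.0, 1250.0), "mfc1": (100.0, 0.0, 900.0)}

delta = placement_delta(generated, modified)
new_target = update_target(generated["reg1"], modified["reg1"], "correction")
```

Only the moved component appears in the delta, and the learned target shifts halfway toward the engineer's position under the assumed correction rate.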

Companies that have used NeuroBox D for 12 months or more report that the percentage of AI-generated designs requiring significant manual modification decreases from 30-40% in the first month to under 10% by month 12. The system gets better at designing for your company every week.

What Does This Mean for Design Teams and Engineering Leadership?

The implications of company-specific AI learning extend beyond productivity gains. When NeuroBox D learns your design standards, it effectively creates a digital twin of your engineering expertise — a system that can replicate the design decisions of your best engineers at scale, consistently, and without the knowledge loss that occurs when experienced engineers retire or change companies.

For engineering leadership, this addresses one of the most persistent challenges in equipment manufacturing: institutional knowledge preservation. The ASME survey found that 67% of engineering managers consider knowledge retention their top workforce concern, ahead of hiring and training. NeuroBox D transforms tribal knowledge into codified, reusable design intelligence.

For design engineers, the shift is equally significant. Rather than spending 80% of their time on repetitive assembly tasks, engineers become design reviewers and innovation drivers. They focus on novel challenges — new process requirements, advanced materials, next-generation architectures — while the AI handles the routine work of translating established patterns into 3D models.

The transition from manual design to AI-augmented design is not about replacing engineers. It is about amplifying their impact by ensuring that every new design benefits from the accumulated knowledge of every design that came before it. That is what company-specific AI learning delivers, and it is why NeuroBox D produces designs that look and feel like they were created by your own team — because, in a very real sense, they were.

Still designing assemblies manually?

NeuroBox D converts your P&ID into a complete SolidWorks assembly — in hours, not days. See how it works with your own designs.
