- Why Is P and ID Parsing the Critical First Step in AI Design Automation?
- How Do Computer Vision Models Identify P and ID Symbols?
- How Does the System Trace Connections Between Symbols?
- How Is the Extracted Data Structured for Downstream Use?
- What Are the Current Limitations and How Are They Being Addressed?
- What Does This Mean for Equipment Design Engineers?

Key Takeaway

Modern computer vision systems can parse P and ID diagrams with 97-99% symbol recognition accuracy and 94-96% connectivity extraction accuracy. The technology combines object detection models (for symbols), line tracing algorithms (for connections), and OCR (for annotations) to convert engineering diagrams into machine-readable graph representations that drive downstream AI design automation.

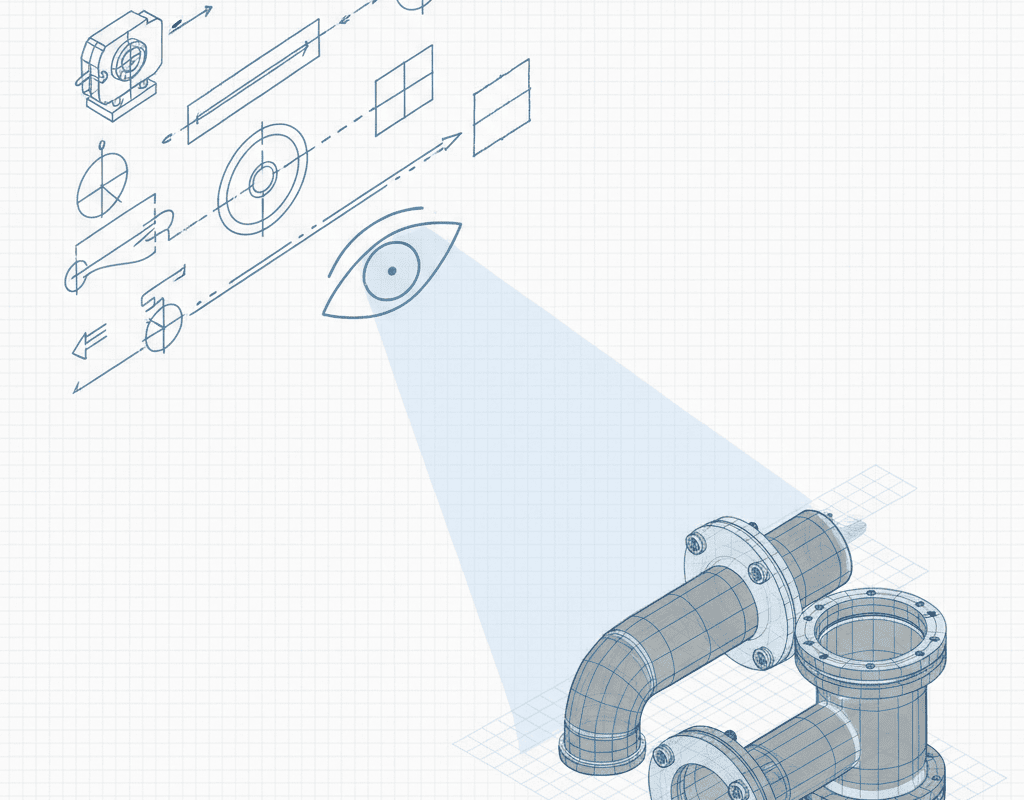

Why Is P and ID Parsing the Critical First Step in AI Design Automation?

Every automated equipment design workflow begins with the same fundamental challenge: the engineering intent captured in a P and ID must be converted into a machine-readable format that downstream systems can use. A P and ID is essentially a graph: nodes (symbols representing valves, instruments, vessels, and other components) connected by edges (lines representing pipes, tubes, electrical signals, and pneumatic connections). But this graph is encoded as a visual document, not as structured data.

For decades, this translation from visual document to actionable data was performed entirely by human engineers who read the P and ID and manually entered information into CAD systems, BOMs, and instrument databases. This manual translation is slow, error-prone (human transcription error rates average 2-5% per data element), and represents a fundamental bottleneck in equipment design automation.

AI-powered P and ID reading changes this equation by automating the extraction of structured data from engineering diagrams. The technology has matured significantly since 2022, driven by advances in object detection, graph neural networks, and domain-specific training data. Here is how it works at a technical level.

How Do Computer Vision Models Identify P and ID Symbols?

P and ID symbols follow standardized vocabularies defined by ISA 5.1 (the ANSI/ISA standard for instrumentation symbols and identification) and by various company-specific or customer-specific symbol sets. A typical semiconductor equipment P and ID uses 40-80 distinct symbol types, including gate valves, globe valves, ball valves, check valves, pneumatic actuators, solenoid actuators, mass flow controllers, pressure regulators, pressure transmitters, temperature sensors, flow switches, filters, and various vessel types.

Modern P and ID parsing systems use object detection models based on convolutional neural networks (CNNs), typically architectures derived from YOLO (You Only Look Once) or Faster R-CNN that are fine-tuned on P and ID training data. The detection pipeline operates in several stages:

Image preprocessing. The input P and ID (typically a PDF or image file) is processed to normalize resolution, correct skew, enhance contrast, and segment the drawing area from title blocks and revision tables. For multi-sheet P and IDs, each sheet is processed independently and then linked through off-page connectors.
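Skew correction is one preprocessing step that is easy to sketch. The minimal example below assumes a line-segment detector has already produced segment endpoints; the function name `estimate_skew_deg` and the 50-pixel length cutoff are illustrative choices, not part of any specific product. It relies on the observation that most long lines on a P and ID are drawn horizontal or vertical, so the median deviation of those lines from the nearest axis approximates the page rotation.

```python
import math
from statistics import median

def estimate_skew_deg(segments, min_length=50.0):
    """Estimate page skew in degrees from line segments (x1, y1, x2, y2).

    Assumes long lines on the drawing are nominally horizontal or vertical.
    Returns 0.0 when no segment is long enough to be trusted.
    """
    deviations = []
    for x1, y1, x2, y2 in segments:
        if math.hypot(x2 - x1, y2 - y1) < min_length:
            continue  # short strokes (text, arrowheads) give noisy angles
        angle = math.degrees(math.atan2(y2 - y1, x2 - x1))
        # Fold every angle into [-45, 45) so that a vertical line at 92 degrees
        # and a horizontal line at 2 degrees both report a +2 degree deviation.
        deviations.append((angle + 45.0) % 90.0 - 45.0)
    return median(deviations) if deviations else 0.0

# Hypothetical segments from a page scanned about 2 degrees off axis.
theta = math.radians(2.0)
segments = [
    (0, 0, 400 * math.cos(theta), 400 * math.sin(theta)),   # near-horizontal line
    (0, 0, -300 * math.sin(theta), 300 * math.cos(theta)),  # near-vertical line
    (10, 10, 14, 13),                                       # short text stroke, ignored
]
print(round(estimate_skew_deg(segments), 3))
```

The page is then rotated by the estimated angle in the correcting direction (for example with OpenCV's `cv2.getRotationMatrix2D` and `cv2.warpAffine`); because image coordinates usually have y pointing down, the sign convention is worth checking on a known test page.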

Symbol detection and classification. The object detection model scans the preprocessed image and identifies bounding boxes around each symbol, assigning a class label (valve type, instrument type, etc.) and a confidence score. State-of-the-art models achieve mean Average Precision (mAP) of 0.94-0.97 on standard ISA 5.1 symbol sets and 0.91-0.95 on company-specific symbol sets after fine-tuning with 200-500 annotated examples per symbol class.
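Detectors in the YOLO and Faster R-CNN families typically emit many overlapping candidate boxes, so their raw output is usually filtered by a confidence threshold and non-maximum suppression (NMS) before it becomes a symbol list. The sketch below shows that standard post-processing in plain Python; the thresholds and class names are illustrative assumptions, and a production pipeline would more likely call a framework routine such as `torchvision.ops.nms`.

```python
def iou(a, b):
    """Intersection-over-union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def filter_detections(detections, score_threshold=0.5, iou_threshold=0.5):
    """Confidence filtering plus greedy per-class NMS.

    detections: list of (box, class_label, score) tuples.
    Keeps the highest-scoring box and drops same-class boxes that overlap it.
    """
    candidates = sorted(
        (d for d in detections if d[2] >= score_threshold),
        key=lambda d: d[2],
        reverse=True,
    )
    kept = []
    for box, label, score in candidates:
        if all(k_label != label or iou(box, k_box) < iou_threshold
               for k_box, k_label, _ in kept):
            kept.append((box, label, score))
    return kept

raw = [
    ((0, 0, 10, 10), "gate_valve", 0.92),
    ((1, 1, 11, 11), "gate_valve", 0.81),    # duplicate of the box above
    ((1, 1, 11, 11), "check_valve", 0.70),   # different class, kept
    ((50, 50, 60, 60), "ball_valve", 0.30),  # below the confidence threshold
]
for det in filter_detections(raw):
    print(det)
```

Per-class NMS can leave two labels on the same region (here a gate valve and a check valve); class-agnostic NMS is the usual alternative when each symbol location should carry exactly one class.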

Orientation and variant detection. Beyond identifying a symbol as a valve, the system must determine its orientation (horizontal or vertical), actuation type (manual, pneumatic, solenoid, motor-operated), and failure mode (normally open, normally closed) based on visual modifiers to the base symbol. This is handled by secondary classification networks that analyze the detected symbol region at higher resolution.
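One way to organize this secondary stage is to re-crop each detection with extra margin, so that modifiers drawn just outside a tight valve-body box (actuator heads, fail-position marks) are visible, and then run one small classifier per attribute. In the sketch below the margin value and the `heads` classifiers are hypothetical stand-ins; real heads would be trained networks rather than the placeholder rules shown.

```python
from typing import Callable, Dict, List, Tuple

Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2) in pixel coordinates
Image = List[List[int]]          # grayscale page as rows of pixel values

def expand_box(box: Box, margin: float, width: int, height: int) -> Box:
    """Grow a box by a fraction of its size on every side, clamped to the page."""
    x1, y1, x2, y2 = box
    dx, dy = int((x2 - x1) * margin), int((y2 - y1) * margin)
    return (max(0, x1 - dx), max(0, y1 - dy), min(width, x2 + dx), min(height, y2 + dy))

def crop(image: Image, box: Box) -> Image:
    """Cut the pixel rows and columns covered by a box out of the page."""
    x1, y1, x2, y2 = box
    return [row[x1:x2] for row in image[y1:y2]]

def classify_variants(image: Image, box: Box,
                      heads: Dict[str, Callable[[Image], str]],
                      margin: float = 0.5) -> Dict[str, str]:
    """Run every attribute head (orientation, actuation, fail mode, ...) on one enlarged crop."""
    height, width = len(image), len(image[0])
    region = crop(image, expand_box(box, margin, width, height))
    return {attribute: head(region) for attribute, head in heads.items()}

# Hypothetical heads: placeholder rules standing in for trained classifiers.
heads = {
    "orientation": lambda r: "horizontal" if len(r[0]) >= len(r) else "vertical",
    "actuation": lambda r: "pneumatic",
}
page = [[255] * 100 for _ in range(100)]
print(classify_variants(page, (40, 45, 60, 55), heads))
```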

Instrument tag extraction. Each instrument symbol has an associated tag number (e.g., PT-101, FIC-203) and often additional text annotations (range, material, size). OCR models specialized for engineering drawing fonts extract these text elements and associate them with the nearest symbol through spatial proximity analysis.
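Both halves of this step, reading a tag and attaching it to a symbol, can be sketched with the standard library. The example assumes tags are written as letters, a hyphen, then a loop number (as in PT-101 or FIC-203), and follows the ISA 5.1 reading in which the first letter names the measured variable and the succeeding letters the functions. It treats every letter after the first as a function letter, so modifiers such as the D in PDT are not separated out, and the 40-pixel association radius is an arbitrary illustrative value.

```python
import math
import re

# Assumed drawing convention: identification letters, hyphen, loop number.
TAG_PATTERN = re.compile(r"^([A-Z]{1,5})-(\d{1,5}[A-Z]?)$")

def parse_tag(text):
    """Split an ISA-style tag into measured variable, function letters, and loop."""
    match = TAG_PATTERN.match(text.strip().upper())
    if match is None:
        return None
    letters, loop = match.groups()
    return {"variable": letters[0], "functions": letters[1:], "loop": loop}

def associate_text(symbol_centers, text_items, max_dist=40.0):
    """Attach each OCR string to the nearest symbol center within max_dist pixels."""
    assigned = {}
    for text, (tx, ty) in text_items:
        best_id, best_d = None, math.inf
        for symbol_id, (sx, sy) in symbol_centers.items():
            d = math.hypot(tx - sx, ty - sy)
            if d <= max_dist and d < best_d:
                best_id, best_d = symbol_id, d
        if best_id is not None:
            assigned.setdefault(best_id, []).append(text)
    return assigned

symbols = {"sym_1": (100, 100), "sym_2": (200, 100)}
texts = [("PT-101", (205, 130)), ("NOTE 3", (500, 500))]
print(associate_text(symbols, texts))  # NOTE 3 is too far from any symbol
print(parse_tag("FIC-203"))
```

Greedy nearest-neighbor assignment can misattribute labels in crowded regions; solving all text-to-symbol pairs jointly (for example with `scipy.optimize.linear_sum_assignment` on a distance matrix) is a common refinement.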

The resulting output is a list of detected components, each with a symbol class, position coordinates, orientation, and associated text annotations. For a 12-sheet P and ID package with 600 symbols, this detection process takes 45-90 seconds on modern GPU hardware.

How Does the System Trace Connections Between Symbols?

Identifying symbols is only half the problem. The P and ID encodes connectivity through lines that represent physical connections: process piping, instrument signal lines, pneumatic supply lines, and electrical connections. Extracting this connectivity graph is arguably more challenging than symbol detection because lines in P and IDs frequently cross, branch, change direction, and run in close proximity to each other.

Connection tracing uses a combination of image processing and graph construction algorithms:

Line detection. After symbols are detected and masked (removed from the image), the remaining image contains primarily lines and text. Morphological operations and the Hough transform identify line segments, which are then classified by type based on line style and weight. Conventions vary by company, but a typical scheme uses solid lines for process piping and dashed lines for electrical signals; ISA 5.1 marks pneumatic signals with short double slashes across the line, while some company standards use dash-dot lines instead. Heavier lines usually indicate main process flows and lighter lines utility connections.
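Once a segment is found, its style can be read from the pattern of ink and gaps along it. The sketch below assumes pixels have already been sampled along the segment into a 0/1 list (1 = ink); the gap and ratio thresholds are illustrative, and mapping a style to a line type (process, signal, pneumatic) is left to a per-company convention table because that mapping varies between drawing standards.

```python
from itertools import groupby

def runs(samples):
    """Collapse a 0/1 ink profile into (value, run_length) pairs."""
    return [(value, len(list(group))) for value, group in groupby(samples)]

def classify_line_style(samples, min_gap=2, dot_ratio=3):
    """Label an ink profile as 'solid', 'dashed', 'dash-dot', or 'blank'.

    Gaps shorter than min_gap pixels are treated as scanning noise. A pattern is
    'dash-dot' when its longest interior dash is at least dot_ratio times its
    shortest one; the first and last dashes are skipped because the segment
    ends may cut them short.
    """
    pattern = runs(samples)
    while pattern and pattern[0][0] == 0:
        pattern.pop(0)  # drop background before the first ink
    while pattern and pattern[-1][0] == 0:
        pattern.pop()   # drop background after the last ink
    if not pattern:
        return "blank"
    if not any(value == 0 and length >= min_gap for value, length in pattern):
        return "solid"
    dashes = [length for value, length in pattern if value == 1]
    interior = dashes[1:-1] if len(dashes) >= 3 else dashes
    if min(interior) * dot_ratio <= max(interior):
        return "dash-dot"
    return "dashed"

print(classify_line_style([1] * 20 + [0] + [1] * 20))                     # 1-px dropout
print(classify_line_style(([1] * 8 + [0] * 4) * 4))                       # even dashes
print(classify_line_style(([1] * 12 + [0] * 3 + [1] * 2 + [0] * 3) * 3))  # long dash, dot
```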

Junction resolution. Where lines cross or branch, the system must determine whether the intersection represents a physical connection (a tee or branch) or merely a visual crossing (where two independent lines overlap on the drawing). This is resolved by combining junction symbol detection (tee symbols and connection dots) with geometric analysis of line angles at the intersection point.
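The decision logic can be written as a small rule set over the arms (line directions) that meet at an intersection. This simplified sketch assumes one common drafting convention: a connection dot always means a physical junction, a three-armed tee is a branch, and two straight lines passing through each other without a dot are a crossing. Anything else is flagged for review rather than guessed, and the 10-degree angular tolerance is an assumed value.

```python
def _is_opposite(a, b, tol):
    """True if directions a and b (degrees) point roughly 180 degrees apart."""
    return abs((a - b) % 360 - 180) <= tol

def classify_junction(arm_angles, has_dot, tol=10.0):
    """Classify an intersection from the directions of the line arms leaving it."""
    if has_dot:
        return "connection"    # drafted junction dot: the lines are joined
    arms = sorted(angle % 360 for angle in arm_angles)
    if len(arms) == 2:
        return "continuation"  # one line running straight or turning a corner
    if len(arms) == 3:
        return "connection"    # tee: one line terminates on another
    if (len(arms) == 4
            and _is_opposite(arms[0], arms[2], tol)
            and _is_opposite(arms[1], arms[3], tol)):
        return "crossing"      # two straight lines pass through without joining
    return "ambiguous"         # leave for human review

print(classify_junction([0, 90, 180, 270], has_dot=False))
print(classify_junction([0, 90, 180, 270], has_dot=True))
print(classify_junction([0, 90, 180], has_dot=False))
```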

Path tracing. Starting from each symbol connection point, the algorithm traces along detected line segments, following the path through junctions and corners until it reaches another symbol connection point. The result is a set of edges connecting pairs of symbols, forming the connectivity graph.
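Path tracing amounts to a graph search over the cleaned line network. The sketch below assumes junction resolution has already run, so crossings do not share a point while real tees do; `ports` maps each symbol connection point to a symbol ID (all names are hypothetical). A breadth-first search from each port stops when it reaches another symbol's port, which yields the symbol-to-symbol edges.

```python
from collections import defaultdict, deque

def trace_connections(segments, ports):
    """Find symbol-to-symbol connections in a resolved line network.

    segments: (point, point) pairs; a point shared by segments is a real junction.
    ports: maps each symbol connection point to its symbol ID.
    Returns a set of frozenset({symbol_a, symbol_b}) edges.
    """
    adjacency = defaultdict(set)
    for a, b in segments:
        adjacency[a].add(b)
        adjacency[b].add(a)
    edges = set()
    for start, symbol in ports.items():
        seen = {start}
        queue = deque([start])
        while queue:
            point = queue.popleft()
            for nxt in adjacency[point]:
                if nxt in seen:
                    continue
                seen.add(nxt)
                if nxt in ports:
                    if ports[nxt] != symbol:
                        edges.add(frozenset((symbol, ports[nxt])))
                    continue  # stop at a symbol; do not trace through it
                queue.append(nxt)
    return edges

segments = [
    ("V1.out", "c1"), ("c1", "c2"), ("c2", "PT1.in"),           # line with two corners
    ("MFC1.out", "t1"), ("t1", "F1.in"), ("t1", "V2.in"),       # tee junction
]
ports = {"V1.out": "V1", "PT1.in": "PT1", "MFC1.out": "MFC1", "F1.in": "F1", "V2.in": "V2"}
print(sorted(tuple(sorted(edge)) for edge in trace_connections(segments, ports)))
```

In this simple form a tee produces pairwise edges among all the symbols it joins; a schema that needs to preserve branch topology would instead keep the tee itself as a node.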

Line specification extraction. Process lines on P and IDs carry specification annotations (line size, material, insulation class) that define the physical characteristics of the piping or tubing. OCR and spatial analysis extract these annotations and associate them with the corresponding line segments.
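Associating a line specification with its line is a nearest-geometry problem, but distance has to be measured to the segment rather than to a single point, because a callout can sit anywhere along a long run. The sketch assumes OCR has already returned each annotation with a position; the spec strings, segment IDs, and 25-pixel cutoff are hypothetical.

```python
import math

def point_segment_distance(p, a, b):
    """Shortest distance from point p to the segment a-b (all (x, y) tuples)."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0:
        return math.hypot(px - ax, py - ay)  # degenerate segment
    # Project p onto the segment's line, then clamp to the segment's ends.
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / length_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))

def attach_line_specs(spec_texts, segments, max_dist=25.0):
    """Attach each spec text to its nearest segment within max_dist.

    A later text overwrites an earlier one assigned to the same segment.
    """
    attached = {}
    for text, position in spec_texts:
        distances = {seg_id: point_segment_distance(position, *ends)
                     for seg_id, ends in segments.items()}
        if not distances:
            continue
        nearest = min(distances, key=distances.get)
        if distances[nearest] <= max_dist:
            attached[nearest] = text
    return attached

segments = {"L1": ((0, 0), (100, 0)), "L2": ((0, 50), (100, 50))}
specs = [('1/4" SS316L', (40, -8)), ('3/8" SS316L', (60, 58)), ("NOTE 1", (300, 300))]
print(attach_line_specs(specs, segments))
```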

Current systems achieve 94-96% accuracy on connectivity extraction for semiconductor equipment P and IDs. The remaining 4-6% error rate is concentrated in areas of high visual complexity: dense regions where multiple lines run in parallel with minimal spacing, and intersections where crossing and connecting lines are difficult to distinguish visually even for experienced human readers.
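Connectivity accuracy can be defined in more than one way; one common approach is to compare extracted edges against a hand-labeled ground truth and report precision and recall. The sketch below assumes undirected edges between symbol IDs. Its example also shows why junction errors are costly: by missing filter F1 and joining MFC1 directly to V2, the extraction gains one false edge and loses two true ones.

```python
def connectivity_scores(predicted_edges, true_edges):
    """Edge-level precision, recall, and F1 for undirected symbol-to-symbol edges."""
    predicted = {frozenset(edge) for edge in predicted_edges}
    truth = {frozenset(edge) for edge in true_edges}
    correct = len(predicted & truth)
    precision = correct / len(predicted) if predicted else 0.0
    recall = correct / len(truth) if truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

predicted = [("V1", "PT1"), ("PT1", "MFC1"), ("MFC1", "V2")]
truth = [("PT1", "V1"), ("PT1", "MFC1"), ("MFC1", "F1"), ("F1", "V2")]
print(connectivity_scores(predicted, truth))
```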

How Is the Extracted Data Structured for Downstream Use?

The raw output of symbol detection and connectivity tracing is converted into a structured graph representation that serves as the input for downstream design automation. This graph follows a standardized schema:

Nodes represent components, each with properties including symbol class, instrument tag, specifications (size, material, pressure rating, actuation type), and P and ID sheet location.

Edges represent connections, each with properties including line type (process, signal, pneumatic), line specification (size, material, insulation), and flow direction (when indicated by arrows or implied by process logic).

Metadata includes sheet-level information (revision number, drawing scale, title block data) and package-level information (cross-sheet connector mapping, legend definitions).
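A minimal version of this schema can be expressed with Python dataclasses. The field names below are an assumed encoding of the node, edge, and metadata properties listed above, not a published format; the edge records only whether a flow direction is known, rather than guessing one.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

@dataclass
class Node:
    node_id: str
    symbol_class: str                                     # e.g. "gate_valve"
    tag: Optional[str] = None                             # instrument tag such as "PT-101"
    specs: Dict[str, str] = field(default_factory=dict)   # size, material, rating, actuation
    sheet: Optional[str] = None                           # sheet on which the symbol appears

@dataclass
class Edge:
    source: str
    target: str
    line_type: str                                        # "process", "signal", or "pneumatic"
    spec: Dict[str, str] = field(default_factory=dict)    # size, material, insulation
    flow_known: bool = False                              # True if flow runs source -> target

@dataclass
class PidGraph:
    nodes: Dict[str, Node] = field(default_factory=dict)
    edges: List[Edge] = field(default_factory=list)
    metadata: Dict[str, object] = field(default_factory=dict)  # revision, scale, connector map

    def add_node(self, node: Node) -> None:
        self.nodes[node.node_id] = node

    def add_edge(self, edge: Edge) -> None:
        # Reject dangling references so every edge points at known components.
        for end in (edge.source, edge.target):
            if end not in self.nodes:
                raise KeyError(f"unknown node {end!r}")
        self.edges.append(edge)

    def neighbors(self, node_id: str) -> List[str]:
        return [e.target if e.source == node_id else e.source
                for e in self.edges if node_id in (e.source, e.target)]

graph = PidGraph(metadata={"revision": "B"})
graph.add_node(Node("V1", "gate_valve", specs={"size": "1/4 in"}, sheet="1"))
graph.add_node(Node("PT1", "pressure_transmitter", tag="PT-101", sheet="1"))
graph.add_edge(Edge("V1", "PT1", "process", flow_known=True))
print(graph.neighbors("PT1"))
```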

This graph representation is functionally equivalent to the mental model that an experienced process engineer holds when reading a P and ID, but encoded in a format that software systems can process. It enables automated operations that would be extremely tedious to perform manually: checking that every connection is complete, verifying that every safety interlock is present, ensuring that instrument specifications match process conditions, and generating component lists with full specifications.
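Once the data is a graph, checks like these become short functions. The sketch below covers two of them, incomplete connections and duplicate instrument tags, using plain dictionaries so it stands alone. The expected number of connections per symbol class is a hypothetical lookup table that a real deployment would derive from its own symbol library.

```python
from collections import Counter

def check_graph(nodes, edges, expected_connections):
    """Return human-readable issues found in an extracted P and ID graph.

    nodes: dict node ID -> {"symbol_class": str, "tag": str or None}
    edges: list of (node ID, node ID) pairs
    expected_connections: hypothetical table, symbol class -> minimum edge count
    """
    issues = []
    degree = Counter()
    for a, b in edges:
        degree[a] += 1
        degree[b] += 1
    for node_id, attrs in nodes.items():
        expected = expected_connections.get(attrs["symbol_class"])
        if expected is not None and degree[node_id] < expected:
            issues.append(f"{node_id}: {degree[node_id]} of {expected} connections")
    tag_counts = Counter(attrs["tag"] for attrs in nodes.values() if attrs.get("tag"))
    for tag, count in sorted(tag_counts.items()):
        if count > 1:
            issues.append(f"duplicate tag {tag} ({count} symbols)")
    return issues

nodes = {
    "V1": {"symbol_class": "gate_valve", "tag": None},
    "PT1": {"symbol_class": "pressure_transmitter", "tag": "PT-101"},
    "PT2": {"symbol_class": "pressure_transmitter", "tag": "PT-101"},
}
edges = [("V1", "PT1")]
expected = {"gate_valve": 2, "pressure_transmitter": 1}
for issue in check_graph(nodes, edges, expected):
    print(issue)
```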

What Are the Current Limitations and How Are They Being Addressed?

Despite impressive accuracy, AI P and ID parsing has known limitations that practitioners should understand:

Non-standard symbols. Some equipment companies use custom symbols not found in ISA 5.1 or other standards. These require company-specific training data. NeuroBox D addresses this through a calibration phase where the system is fine-tuned on a company's historical P and ID library, typically requiring 100-300 annotated examples to achieve production-level accuracy on custom symbol sets.

Hand-drawn annotations. Customer markups, red-line revisions, and hand-drawn additions to printed P and IDs present challenges for symbol recognition and OCR. Current systems handle clean hand-drawn annotations with 80-85% accuracy but struggle with heavily marked-up documents. Best practice is to incorporate hand-drawn changes into the CAD-generated P and ID before AI parsing.

Implicit engineering intent. Some information on a P and ID is implied rather than explicitly drawn. For example, a process engineer may know that a certain valve is always paired with a specific type of actuator even though only the valve body symbol appears on the drawing. These implicit conventions require domain knowledge that must be encoded as rules supplementing the visual parsing.
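Such rules can be kept deliberately simple: fill in an attribute only when the drawing leaves it blank, and record which rule did so, so that a reviewer can audit the inference. In the sketch below both conventions are hypothetical examples of company practice, not statements from any standard; rules run in list order, so a later rule can build on an attribute set by an earlier one.

```python
def apply_rules(nodes, rules):
    """Fill in attributes the drawing leaves implicit, without overwriting drawn ones.

    Each inferred value is logged under "inferred" so a reviewer can see which
    rule produced it and reject it if the convention does not apply.
    """
    for node in nodes:
        for rule in rules:
            attribute = rule["sets"]
            if node.get(attribute) is None and rule["when"](node):
                node[attribute] = rule["value"]
                node.setdefault("inferred", []).append(rule["name"])
    return nodes

# Hypothetical company conventions, not taken from any standard.
RULES = [
    {"name": "diaphragm valves are pneumatic",
     "when": lambda n: n["symbol_class"] == "diaphragm_valve",
     "sets": "actuation", "value": "pneumatic"},
    {"name": "pneumatic valves fail closed",
     "when": lambda n: n.get("actuation") == "pneumatic",
     "sets": "fail_mode", "value": "normally_closed"},
]

valves = [
    {"id": "V1", "symbol_class": "diaphragm_valve", "actuation": None},
    {"id": "V2", "symbol_class": "diaphragm_valve", "actuation": "manual"},
]
for valve in apply_rules(valves, RULES):
    print(valve)
```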

Legacy document quality. Older P and IDs scanned from paper originals may have poor resolution, faded lines, or artifacts from photocopying. Image preprocessing can mitigate some of these issues, but severely degraded documents may require human intervention to resolve ambiguities.

Research and development in this field is advancing rapidly. Recent work on transformer-based architectures for document understanding shows promise for handling complex layout structures. Graph neural networks are being applied to improve connectivity extraction accuracy by learning typical P and ID topology patterns. And active learning approaches allow systems to improve continuously by learning from corrections made by human engineers during review.
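The selection step of such an active learning loop is often uncertainty sampling: send reviewers the items the model is least sure about, then add their corrections to the training set. The sketch below uses least-confidence sampling over per-item class probabilities; it is one common strategy among several, and the item IDs, labels, and review budget are hypothetical.

```python
def select_for_review(predictions, budget):
    """Return up to `budget` item IDs, least confident first.

    predictions: dict mapping item ID -> {class label: probability}.
    Confidence is the probability of the top class; lower means more useful to label.
    """
    ranked = sorted(predictions, key=lambda item: max(predictions[item].values()))
    return ranked[:budget]

def merge_corrections(training_set, corrections):
    """Append reviewer-confirmed labels as new (item ID, label) training examples."""
    return training_set + sorted(corrections.items())

predictions = {
    "S1": {"gate_valve": 0.98, "globe_valve": 0.02},
    "S2": {"gate_valve": 0.55, "globe_valve": 0.45},
    "S3": {"ball_valve": 0.70, "check_valve": 0.30},
}
queue = select_for_review(predictions, budget=2)
print(queue)
print(merge_corrections([("S0", "gate_valve")], {"S2": "globe_valve"}))
```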

What Does This Mean for Equipment Design Engineers?

For design engineers working in semiconductor equipment, AI P and ID parsing represents a fundamental shift in workflow. Instead of spending hours manually interpreting diagrams and transcribing information into CAD systems, the engineer receives a structured digital representation of the P and ID within minutes of uploading the document.

This changes the engineer's role from translator to reviewer. Rather than performing the mechanical work of reading every symbol and tracing every connection, the engineer reviews the AI-extracted data for accuracy, corrects any errors, and validates that implicit engineering intent has been correctly captured. This review-focused workflow is faster, less tedious, and produces more consistent results because the AI does not suffer from fatigue or attention lapses during the transcription process.

For companies evaluating AI P and ID parsing tools, the key questions are: What is the recognition accuracy on your specific symbol vocabulary? How does the system handle your company-specific symbol conventions? What is the process for correcting errors and feeding corrections back into the model? And how does the parsed output integrate with your downstream design tools, whether SolidWorks, CATIA, or an AI assembly generation platform like NeuroBox D?

The technology is ready for production use in semiconductor equipment design. The remaining accuracy gaps are addressable through company-specific fine-tuning, and the productivity gains from eliminating manual P and ID transcription are substantial and measurable.
