- Why hand sketches still dominate

- What sketch-to-CAD AI does

- Where it works well

- Where it struggles

- A real example

A senior mechanical engineer sketches a custom fixture for a wafer-handling robot on a notebook page: pencil, three views, dimensions in millimeters scribbled on the side. Total time: 4 minutes. The sketch goes to a CAD designer, who spends the next two hours converting it into a SOLIDWORKS assembly. The model comes back for review with one dimension wrong, and the correction adds another 30 minutes.

This sequence is common. Hand sketches dominate first-pass design because they are far faster than CAD. The bottleneck is the translation, not the design. AI is starting to compress the translation step, and this article walks through what a working hand-sketch-to-SOLIDWORKS AI pipeline actually does, what it gets right, and what it gets wrong.

Why hand sketches still dominate

It is tempting to assume that everyone designs in CAD now. They do not. In a 2024 survey of mechanical engineers at equipment OEMs, 81 percent reported that the first pass of a new fixture or sub-assembly was sketched by hand or on a tablet, then handed to a CAD designer for modeling. The reasons are well-known:

- CAD setup overhead. Opening SOLIDWORKS, picking a template, setting up an assembly with mate references — minutes of overhead before any geometry exists.

- Sketch speed. A trained mechanical engineer sketches at 20 to 100x the rate they model. The bottleneck of CAD is the user interface, not the design.

- Iteration cost. Erasing and redrawing on paper is essentially free. Iterating in CAD has feature-tree consequences.

- Communication. A sketch with arrows and notes is faster to discuss across a desk than a CAD screen.

The sketch wins as the design medium. The CAD model wins as the deliverable. The translation between them is dead time.

What sketch-to-CAD AI does

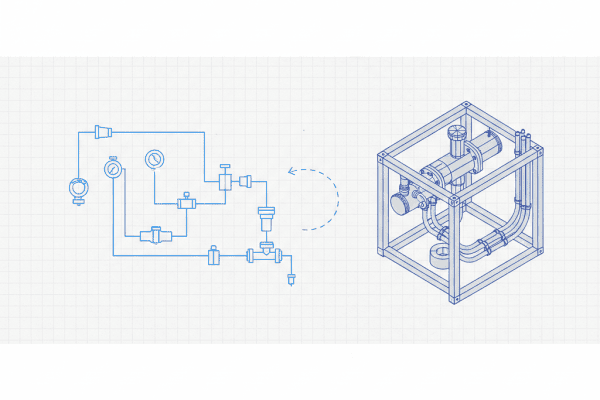

A modern hand-sketch-to-SOLIDWORKS pipeline performs these steps:

- Image acquisition. Photo of paper sketch or tablet drawing exported as image. Multi-view sketches accepted (front, top, side often on the same page).

- Sketch parsing. Detect view boundaries, identify orthographic projections, separate construction lines from object lines.

- OCR for dimensions and notes. Read dimension callouts, tolerance specs, material notes, surface finish symbols.

- Feature inference. From three orthographic views, infer the 3D feature decomposition: this region is an extrude, this is a revolve, this is a hole pattern.

- Parametric model generation. Produce SOLIDWORKS feature tree with sketches, features, and dimensions linked to the values from the hand sketch.

- Assembly inference (optional). If the sketch shows multiple parts, infer assembly structure and propose mate references.

The output is a native SOLIDWORKS .sldprt or .sldasm file, not a STEP export. This is critical (see the earlier article on STEP/IGES limitations): a native parametric file is what enables further design iteration.

```python
result = sketch_to_cad.convert(
    image='fixture_sketch_2026-04-15.jpg',
    output_format='solidworks_2024',
    options={
        'units': 'mm',
        'tolerance_default': '+/- 0.1',
        'material_default': 'AL6061-T6',
        'create_drawing': True
    }
)

print(f"Features extracted: {result.feature_count}")
print(f"Dimensions read: {result.dimension_count}")
print(f"Confidence: {result.overall_confidence}")
print(f"Manual review needed for: {result.flags}")
```

A realistic outcome on a clean three-view sketch with clear dimensions: feature_count around 8 to 25, dimension_count 12 to 40, overall_confidence 0.85 to 0.95, with 1 to 4 flags for manual review.
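One way to act on those numbers is a small triage step that decides how much review a conversion needs. The sketch below is illustrative only: `ConversionResult` is a hypothetical stand-in for the result object, and the thresholds are assumptions borrowed from the ranges quoted above, not limits defined by any tool.

```python
from dataclasses import dataclass, field


@dataclass
class ConversionResult:
    """Hypothetical stand-in for the conversion result object."""
    feature_count: int
    dimension_count: int
    overall_confidence: float
    flags: list = field(default_factory=list)


def triage(result, min_confidence=0.85, max_flags=4):
    """Return a review route for one conversion result.

    The default thresholds are assumptions taken from the realistic
    ranges quoted in the text; tune them to your own parts.
    """
    if result.overall_confidence < min_confidence:
        return "full_review"
    if len(result.flags) > max_flags:
        return "full_review"
    if result.flags:
        return "review_flagged_items"
    return "spot_check"


clean = ConversionResult(14, 22, 0.91, ["dimension 7 ambiguous"])
noisy = ConversionResult(9, 12, 0.72, [])
print(triage(clean))  # review_flagged_items
print(triage(noisy))  # full_review
```

Even a result routed to a spot check still deserves a look at the feature tree; the triage only decides where the engineer starts.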

Where it works well

The pipeline works reliably when:

- The sketch has three orthographic views (front, top, side) with consistent scale.

- Dimensions are written in standard ISO/ASME format with arrows and witness lines.

- Major features are basic operations (extrudes, revolves, simple cuts).

- The sketch is on plain or lined paper with reasonable contrast.

- Notes are in printed or block letters, not cursive.

- The geometry is not interpenetrating in ways that require complex Boolean reasoning.

Under these conditions, roughly 88 to 94 percent of features are correctly placed and dimensioned; the rest need adjustment by the engineer.
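The "consistent scale" condition can be checked mechanically before any 3D inference is attempted. The following is a minimal sketch, assuming the overall extents of each view have already been read from the sketch in millimeters; the function name and the tolerance are illustrative choices, not part of any specific tool.

```python
def check_view_consistency(front, top, side, tol_mm=0.5):
    """Compare overall extents shared between three orthographic views.

    front = (width, height), top = (width, depth), side = (depth, height),
    all in millimeters. Returns a list of human-readable mismatches.
    """
    checks = [
        ("width", "front", front[0], "top", top[0]),
        ("height", "front", front[1], "side", side[1]),
        ("depth", "top", top[1], "side", side[0]),
    ]
    problems = []
    for name, view_a, a, view_b, b in checks:
        if abs(a - b) > tol_mm:
            problems.append(f"{name}: {view_a}={a} mm vs {view_b}={b} mm")
    return problems


# Consistent views produce no findings.
print(check_view_consistency((120, 40), (120, 60), (60, 40)))
# The top view disagrees with the front view on width.
print(check_view_consistency((120, 40), (118, 60), (60, 40)))
```

A mismatch here is a reason to ask the engineer, not to average the two values.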

Where it struggles

The failure modes are specific:

- Perspective sketches. A single perspective view of a 3D object is genuinely ambiguous. The AI guesses; sometimes it guesses wrong.

- Partial views. A sketch showing only the front view assumes the engineer knows what the top and side look like. AI does not.

- Section views with conventions. Cross-hatching, hidden lines, broken-out sections all carry meaning that requires drafting convention knowledge.

- Hand-written tolerance stacks. “0.500 +0.002/-0.001” written in a hurry can be misread. OCR confidence drops.

- Conflicting dimensions. Hand sketches sometimes have over-defined or contradictory dimensions because the engineer was iterating. AI must flag these, not silently pick one.

- Sketches with construction notes. “DO NOT DEBURR THIS EDGE” written next to a feature is design intent that does not become a CAD geometric feature but must end up as a drawing note.

Most AI sketch-to-CAD failures are in these zones. They are tractable but not solved. A good tool flags ambiguity rather than silently choosing.
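Flagging conflicting dimensions can be as simple as checking that chained dimensions add up to the stated overall dimension. The sketch below assumes the dimensions have already been read and grouped into chains; the data layout and the tolerance are hypothetical.

```python
def find_dimension_conflicts(chains, tol_mm=0.05):
    """Flag chains whose segment dimensions do not add up to the overall.

    chains maps a label to (segments, overall), all in millimeters.
    Returns (label, segment_sum, overall) for every conflict, leaving
    the decision to the engineer instead of picking a value.
    """
    conflicts = []
    for label, (segments, overall) in chains.items():
        total = sum(segments)
        if abs(total - overall) > tol_mm:
            conflicts.append((label, total, overall))
    return conflicts


sketch_dims = {
    "base length": ([20.0, 45.0, 20.0], 85.0),  # consistent
    "base width": ([10.0, 30.0, 10.0], 55.0),   # segments sum to 50
}
for label, total, overall in find_dimension_conflicts(sketch_dims):
    print(f"FLAG {label}: segments sum to {total} mm, overall says {overall} mm")
```

The function only reports; it never resolves the conflict, which matches the requirement that ambiguity be surfaced rather than silently settled.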

A real example

A wafer-handling fixture sketch from one project: pencil on graph paper, three views, dimensions in mm, 14 features visible. Manual SOLIDWORKS modeling took the original CAD designer 1 hour 50 minutes including back-and-forth with the engineer about ambiguous dimensions.

With AI conversion:

- Photo capture and upload: 2 minutes.

- AI processing: 90 seconds.

- Engineer review of generated model: 12 minutes (3 dimensions adjusted, 1 fillet added that AI missed, 2 mate references corrected).

- Total: about 16 minutes.

This is roughly a 7x time reduction on a single sketch. Across a project with 30 to 50 such fixtures, the savings compound into weeks.
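To see how the per-sketch saving scales to a project, the arithmetic can be written out. This sketch takes the manual time from the example, takes the AI-assisted time conservatively as 17 minutes, and assumes a 40-hour working week; the week length is an assumption, not a figure from the project.

```python
def project_savings_hours(manual_min, ai_min, fixture_count):
    """Hours saved across a project, given per-fixture times in minutes."""
    return (manual_min - ai_min) * fixture_count / 60


MANUAL_MIN = 110      # 1 hour 50 minutes of manual modeling, from the example
AI_MIN = 17           # AI-assisted time, taken conservatively
HOURS_PER_WEEK = 40   # assumption: one designer's working week

for count in (30, 50):
    hours = project_savings_hours(MANUAL_MIN, AI_MIN, count)
    weeks = hours / HOURS_PER_WEEK
    print(f"{count} fixtures: {hours:.1f} h saved, about {weeks:.1f} weeks")
```

Under these assumptions the saving is on the order of one to two designer-weeks per project.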

Tablet sketching as a middle path

Many engineering teams now sketch on iPads or Surface tablets with apps like Concepts, Notability, or OneNote. The output is a vector or raster image that is cleaner than paper photos and is automatically backed up. AI processing of tablet sketches is more reliable than paper sketches because:

- No lighting or perspective distortion from photo capture.

- Consistent line quality.

- Optional layers separating geometry from notes.

- Time-stamped revisions automatically captured.

For teams adopting AI sketch-to-CAD seriously, moving from paper to tablet is the lowest-friction upgrade. The engineer still sketches; the medium is just digital from the start.

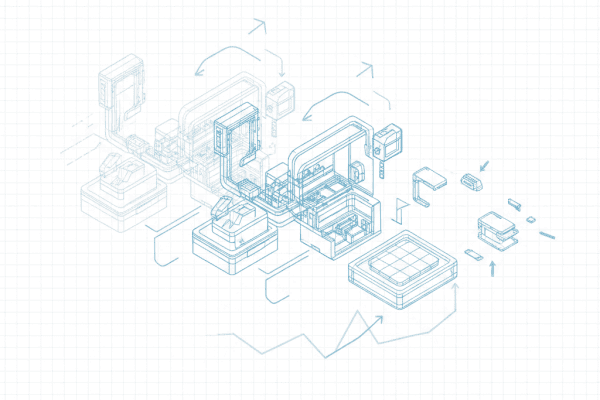

Where DrawingDiff fits

DrawingDiff’s sketch-to-CAD component pairs naturally with the other workflows in the same product: a sketch becomes a SOLIDWORKS assembly, the assembly is checked for compliance, the BOM is extracted, and revisions are diffed. The integration value is in keeping the same product handling the design from sketch to released drawing pack.

The drawing pack is the second deliverable

A SOLIDWORKS part is one deliverable; the production drawing pack is another. The fixture cannot go to manufacturing without a drawing showing dimensions, tolerances, surface finish, and material call-outs.

Good AI sketch-to-CAD pipelines generate the drawing alongside the part. The hand sketch already specifies the dimensions; the AI carries those into a SOLIDWORKS drawing with the same dimensional values, the same tolerance assumptions, and the same notes. The engineer reviews the drawing for layout (view selection, sheet space) but does not re-enter dimensions.

This matters because dimensioning a drawing manually takes longer than modeling the part. A 25-feature part takes 30 minutes to model and 60 minutes to dimension. If the AI can model and dimension together, the savings are larger than they look from the modeling step alone.

Sketch standards that improve AI accuracy

A simple internal practice measurably improves AI sketch-to-CAD accuracy: standardize how engineers sketch. Most teams have implicit conventions but no explicit ones. A short style guide changes outcomes meaningfully:

- Use three orthographic views consistently: front, top, side, in standard third-angle or first-angle projection.

- Keep all dimensions in one unit system per sketch. Mixing inches and millimeters confuses both humans and AI.

- Print dimension values, do not write in cursive.

- Use witness lines and arrowheads on dimensions.

- Indicate hole patterns with a clear callout (4X Ø6 THRU rather than four separate dimensions).

- Note material and finish in a consistent location, e.g., bottom-right corner.

- Mark uncertain dimensions with a question mark rather than guessing a value.

These conventions take 15 minutes to teach and pay back permanently. AI accuracy on sketches following these conventions is 5 to 10 percentage points higher than on free-form sketches. The biggest gain is in dimension recognition, which is where most manual rework happens.
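Several of these conventions are easy to check automatically on the text read from a sketch. The sketch below is a minimal illustration, assuming dimension strings have already been extracted by OCR; the regular expressions cover only the callout form shown above and are not a full drafting-standard grammar.

```python
import re

# Matches callouts such as "4X Ø6 THRU" or "2X Ø4.5" (illustrative pattern).
HOLE_CALLOUT = re.compile(
    r"^(?P<count>\d+)\s*[Xx]\s*[Øø⌀]\s*(?P<dia>\d+(?:\.\d+)?)\s*(?P<thru>THRU)?$"
)
# Matches an explicit trailing unit, e.g. "45 mm" or "1.5 in".
UNIT_SUFFIX = re.compile(r"(mm|in)\s*\??$", re.IGNORECASE)


def parse_hole_callout(text):
    """Parse a pattern callout into count, diameter, and through flag."""
    match = HOLE_CALLOUT.match(text.strip())
    if match is None:
        return None
    return {
        "count": int(match.group("count")),
        "diameter_mm": float(match.group("dia")),
        "through": match.group("thru") is not None,
    }


def lint_dimensions(texts):
    """Return style-guide warnings for a list of dimension strings."""
    warnings = []
    units = set()
    for text in texts:
        stripped = text.strip()
        if "?" in stripped:
            warnings.append(f"uncertain value, ask the engineer: {stripped}")
        unit = UNIT_SUFFIX.search(stripped)
        if unit:
            units.add(unit.group(1).lower())
    if len(units) > 1:
        warnings.append("mixed units on one sketch: " + ", ".join(sorted(units)))
    return warnings


print(parse_hole_callout("4X Ø6 THRU"))
print(lint_dimensions(["45 mm", "1.5 in", "12?"]))
```

A question mark is treated as a request for clarification, so the uncertain value is reported rather than converted into geometry.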

When AI gets the topology wrong

The failure mode that requires the most engineer attention is topological misinterpretation. The AI generates a model that is dimensionally correct but has the wrong feature decomposition — a pocket where there should be a step, a fillet where there should be a chamfer, or two features merged that should be separate.

The fix is usually quick (under a minute per error) but it requires the engineer to actually inspect the feature tree, not just the visual geometry. This is a workflow discipline issue more than an AI capability issue. The teams that get the most out of hand-sketch-to-SOLIDWORKS AI are the ones that treat feature tree review as part of standard QA, not as optional.

A practical heuristic: change one dimension and rebuild the part. If the result is not sensible, the topology is wrong. Fix it before committing the part to PDM.
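The heuristic can be turned into a repeatable check by perturbing each dimension in turn and recording which rebuilds fail. The sketch below is deliberately CAD-agnostic: `rebuild` is a hypothetical callable that would wrap a real CAD rebuild in practice, and `toy_rebuild` is a made-up stand-in used only to make the example runnable.

```python
def perturbation_check(dimensions, rebuild, scale=1.10):
    """Perturb each dimension in turn and list the ones that break the model.

    dimensions: dict of dimension name -> value in mm.
    rebuild: callable taking a dimensions dict and returning True when
        the model regenerates sensibly (hypothetical placeholder for a
        real CAD rebuild).
    """
    failures = []
    for name, value in dimensions.items():
        trial = dict(dimensions)
        trial[name] = value * scale
        if not rebuild(trial):
            failures.append(name)
    return failures


def toy_rebuild(dims):
    """Toy stand-in: a plate with a pocket placed at a fixed offset.

    The rebuild is sensible only while material remains on both sides
    of the pocket.
    """
    wall = dims["plate_width"] - dims["pocket_offset"] - dims["pocket_width"]
    return wall > 0 and dims["pocket_offset"] > 0


dims = {"plate_width": 100.0, "pocket_offset": 10.0, "pocket_width": 85.0}
print(perturbation_check(dims, toy_rebuild))  # ['pocket_width']
```

A dimension that appears in the failure list is a prompt to inspect how that feature was built, not proof of an error; some failures reflect genuine geometric limits.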

What this means for you

- Hand sketches are not going away. The right question is how to compress the sketch-to-CAD step, not how to eliminate the sketch.

- Tablet sketching is an underrated upgrade. It improves AI accuracy and team workflow at low cost.

- Treat AI sketch conversion output as a starting point — the engineer reviews and adjusts in the same SOLIDWORKS session.

- Pilot on standard fixture types you make often. The AI improves with examples from your specific design vocabulary, and a short sketch style guide raises hand-sketch-to-SOLIDWORKS accuracy meaningfully.
