- Why Is Traditional FDC Failing Semiconductor Manufacturers?

- How Does AI Transform Fault Detection Accuracy?

- What Is the Real Cost of False Alarms in a Semiconductor Fab?

- How Should Fabs Implement AI-Based FDC?

- What Results Can Fabs Expect from AI-Powered FDC?

Key Takeaway

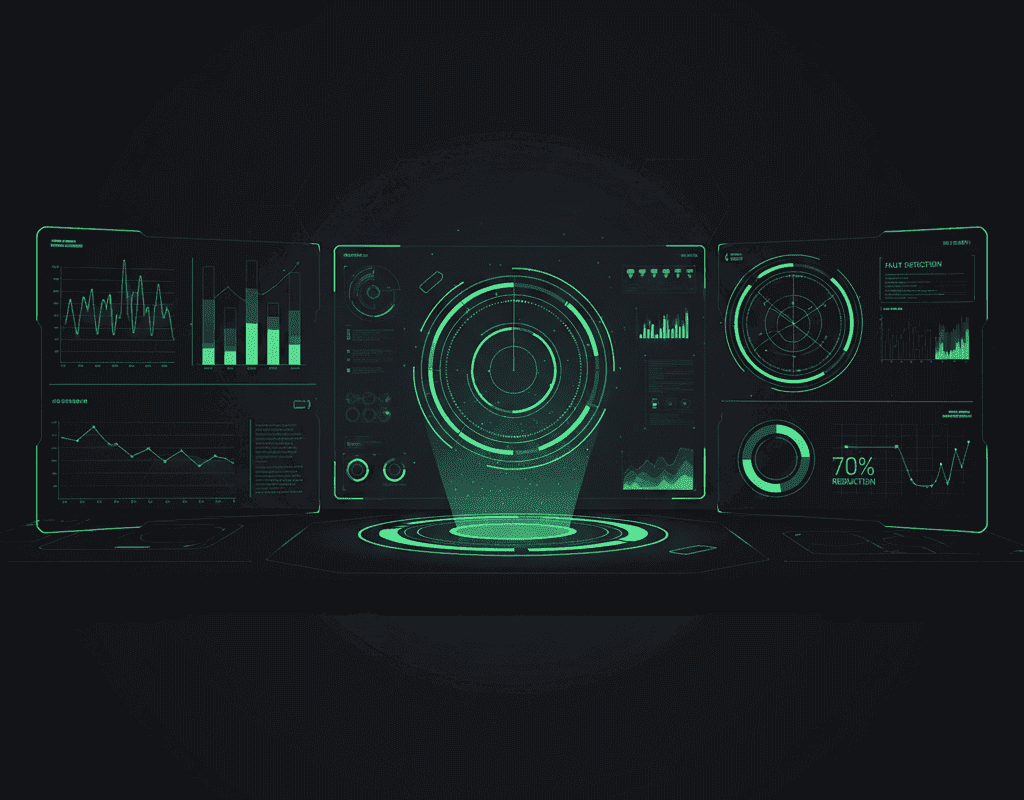

Traditional Fault Detection and Classification systems in semiconductor fabs suffer from 70-80% false alarm rates, causing engineer fatigue and missed real faults. AI-powered FDC leveraging deep learning pattern recognition cuts false alarms by 70% while improving true fault detection by 25-40% — preventing an estimated $5-15M in annual scrap costs per fab.

Why Is Traditional FDC Failing Semiconductor Manufacturers?

Fault Detection and Classification is the nervous system of a semiconductor fab. Every process tool generates thousands of sensor readings per wafer — chamber pressure, gas flows, RF power, temperature profiles, endpoint signals — and FDC systems must distinguish normal process variation from genuine equipment malfunctions in real time. When FDC works well, it catches defective wafers before they contaminate downstream processes. When it fails, the consequences are catastrophic: undetected faults can propagate through dozens of process steps, destroying hundreds of wafers worth $50,000-200,000 each.

The fundamental problem with traditional FDC is statistical. Conventional systems rely on univariate control limits — fixed thresholds on individual sensor parameters. A chamber pressure above X or a gas flow below Y triggers an alarm. This approach was adequate when semiconductor processes had 20-30 monitored parameters per step. Today, advanced process tools generate 500-2,000 parameters per run, creating an explosion of potential alarm conditions.
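
To see why parameter count drives alarm volume, consider a back-of-the-envelope calculation. The sketch below assumes each parameter has its own independent 3-sigma limit, which gives a 0.27% chance of a false trip per parameter on a healthy run. Both the independence and the 3-sigma choice are illustrative assumptions, not figures from any specific fab; real sensors are correlated, so actual rates will differ.

```python
def prob_any_false_alarm(n_params: int, per_param_rate: float = 0.0027) -> float:
    """Chance that at least one of n independent limits trips on a healthy run.

    per_param_rate defaults to the two-sided 3-sigma tail of a normal
    distribution (an assumption made for illustration).
    """
    return 1.0 - (1.0 - per_param_rate) ** n_params


for n in (30, 500, 2000):
    share = prob_any_false_alarm(n)
    print(f"{n:>5} parameters: {share:.1%} of healthy runs raise at least one alarm")
```

Under these assumptions, a few dozen parameters produce an occasional nuisance alarm, while a couple of thousand make a false trip close to certain on every run unless limits are widened, which in turn hides real faults.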

The result is alarm fatigue on a massive scale. Industry surveys indicate that 70-80% of FDC alarms in a typical fab are false positives. Engineers responsible for monitoring these alerts become desensitized, routinely dismissing alarms without investigation. A 2024 SEMI survey found that process engineers spend an average of 2.5 hours per shift evaluating and dismissing false alarms — time that could be spent on genuine process improvement.

Worse, the real faults hide within the noise. When everything is flagged, nothing is prioritized. Critical equipment degradation patterns that unfold gradually across multiple parameters go undetected because they do not trigger any single univariate threshold. The industry needs a fundamentally different approach.

How Does AI Transform Fault Detection Accuracy?

AI-powered FDC replaces univariate threshold monitoring with multivariate pattern recognition. Instead of asking “Is parameter X out of range?”, deep learning models ask “Does the entire pattern of sensor behavior resemble a known fault signature or an anomalous deviation from normal operation?”

This distinction is critical. Many genuine equipment faults manifest not as single-parameter excursions but as subtle shifts in the correlations between parameters. A chamber leak, for example, might cause pressure to increase by 0.5% (well within normal limits) while simultaneously causing a 0.3% shift in gas flow ratio and a 0.2% change in temperature uniformity. No individual parameter crosses its threshold, but the multivariate pattern is distinctly abnormal.
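
The leak scenario can be illustrated with a small two-parameter sketch. It assumes two standardized sensor readings (hypothetically, pressure and gas flow ratio) that normally move together with a correlation of 0.95, and compares per-parameter 3-sigma limits against a Mahalanobis-distance check in the style of a Hotelling T² chart. The data is simulated, the names are hypothetical, and the chi-square limit is a large-sample approximation.

```python
import math
import random

RHO = 0.95      # assumed correlation between the two healthy signals
ALPHA = 0.0027  # same false-trip rate as a two-sided 3-sigma limit

# Simulate healthy runs as correlated, standardized (pressure, flow_ratio) pairs.
rng = random.Random(0)
healthy = []
for _ in range(2000):
    g1, g2 = rng.gauss(0, 1), rng.gauss(0, 1)
    healthy.append((g1, RHO * g1 + math.sqrt(1 - RHO**2) * g2))

# Fit the mean and covariance of healthy behavior.
n = len(healthy)
mx = sum(x for x, _ in healthy) / n
my = sum(y for _, y in healthy) / n
sxx = sum((x - mx) ** 2 for x, _ in healthy) / (n - 1)
syy = sum((y - my) ** 2 for _, y in healthy) / (n - 1)
sxy = sum((x - mx) * (y - my) for x, y in healthy) / (n - 1)
det = sxx * syy - sxy**2


def univariate_alarm(point):
    """True if either reading is outside its own 3-sigma limits."""
    x, y = point
    return abs(x - mx) > 3 * math.sqrt(sxx) or abs(y - my) > 3 * math.sqrt(syy)


def mahalanobis_sq(point):
    """Squared Mahalanobis distance using the explicit 2x2 inverse covariance."""
    dx, dy = point[0] - mx, point[1] - my
    return (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det


# For 2 degrees of freedom the chi-square quantile has a closed form.
T2_LIMIT = -2.0 * math.log(ALPHA)


def multivariate_alarm(point):
    """True if the joint pattern is improbable under healthy behavior."""
    return mahalanobis_sq(point) > T2_LIMIT


normal_run = (1.5, 1.4)    # both readings high together, as usual
suspect_run = (1.5, -1.5)  # each within limits, but moving in opposite directions
for name, run in (("normal", normal_run), ("suspect", suspect_run)):
    print(name, univariate_alarm(run), multivariate_alarm(run))
```

The suspect run passes both individual limits yet breaks the usual relationship between the two signals, which is the kind of excursion the multivariate check is designed to catch.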

Deep learning models — particularly variational autoencoders (VAE) and temporal convolutional networks — excel at learning these complex multivariate relationships. Trained on thousands of normal process runs, the models build a high-dimensional representation of “healthy” equipment behavior. Deviations from this learned normal pattern are scored and classified with far greater precision than rule-based systems.
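
The "learn normal, then score deviations" idea can be shown with a deliberately simplified stand-in. The sketch below is not a VAE or a temporal convolutional network: it fits a one-dimensional linear bottleneck (the first principal direction, found by power iteration) to simulated healthy runs and uses reconstruction error as the anomaly score. The three sensors, their gains, and the 99.7th-percentile threshold are all assumptions chosen for illustration.

```python
import math
import random


def fit_linear_bottleneck(runs, iters=200):
    """Fit the mean and dominant principal direction of healthy runs."""
    n, d = len(runs), len(runs[0])
    mean = [sum(r[j] for r in runs) / n for j in range(d)]
    centered = [[r[j] - mean[j] for j in range(d)] for r in runs]
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(iters):  # power iteration converges to the dominant eigenvector
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return mean, v


def reconstruction_error(run, mean, direction):
    """Encode to one number, decode back, and measure what was lost."""
    c = [x - m for x, m in zip(run, mean)]
    code = sum(ci * vi for ci, vi in zip(c, direction))  # encode
    recon = [code * vi for vi in direction]              # decode
    return math.sqrt(sum((ci - ri) ** 2 for ci, ri in zip(c, recon)))


# Simulated healthy runs: three sensors driven by one shared process state.
rng = random.Random(1)
GAINS = (1.0, 0.8, 0.6)  # hypothetical sensor responses
healthy_runs = []
for _ in range(1000):
    state = rng.gauss(0, 1)
    healthy_runs.append([g * state + rng.gauss(0, 0.1) for g in GAINS])

mean, direction = fit_linear_bottleneck(healthy_runs)
train_errors = sorted(reconstruction_error(r, mean, direction) for r in healthy_runs)
threshold = train_errors[int(0.997 * len(train_errors))]

consistent_run = [1.0, 0.8, 0.6]    # sensors agree with the learned relationship
inconsistent_run = [1.0, 0.3, 1.1]  # sensors disagree with each other
for name, run in (("consistent", consistent_run), ("inconsistent", inconsistent_run)):
    print(name, reconstruction_error(run, mean, direction) > threshold)
```

A nonlinear model replaces the single direction with a learned encoder and decoder, but the scoring step, comparing a run against its own reconstruction, follows the same pattern.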

Production deployments of AI-based FDC demonstrate dramatic improvements in detection metrics. The share of alarms that are false drops from 70-80% to 15-25%, roughly a 70% relative reduction that transforms FDC from a nuisance into a trusted decision-support system. Simultaneously, true fault detection rates improve by 25-40%, catching the subtle multivariate faults that traditional systems miss entirely.

MST’s NeuroBox E3200 platform implements a hybrid FDC architecture that combines physics-informed models with data-driven deep learning. The physics layer encodes known equipment behavior relationships (e.g., ideal gas law relationships between pressure, temperature, and flow), while the deep learning layer captures empirical patterns that defy simple physical models. This hybrid approach achieves detection accuracy exceeding 95% with false alarm rates below 15% across deployed production environments.

What Is the Real Cost of False Alarms in a Semiconductor Fab?

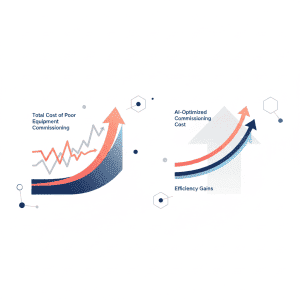

The financial impact of FDC false alarms extends far beyond wasted engineering time. Each false alarm triggers a cascade of operational disruptions that compound across the fab.

Direct labor cost: At an average investigation time of 15-30 minutes per alarm and 50-100 false alarms per day per process area, a typical fab dedicates 3-5 full-time-equivalent engineers to chasing phantom faults. Annual cost: $600K-1.2M.

Production interruption: Many FDC systems are configured to hold lots or stop equipment when alarms trigger. Each false-alarm-induced hold delays 2-8 wafer lots by 30-120 minutes. At $500-2,000 revenue per wafer-hour for advanced nodes, these delays cost $2-5M annually.

Missed real faults: This is the largest hidden cost. When engineers dismiss 80% of alarms, they inevitably dismiss some real ones. A single undetected chamber contamination event can scrap 50-200 wafers before offline metrology catches the defect. At $5,000-50,000 per wafer for advanced nodes, one missed fault event costs $250K-10M. Multiple events per year push the annual cost of missed faults to $5-15M.

Quality risk: Faults that FDC misses and that are caught late, or not at all, may result in defective die shipped to customers. The cost of field failures (customer returns, reliability issues, warranty claims) can exceed 100x the manufacturing cost of the affected wafers.

The total cost of FDC ineffectiveness in a single advanced fab ranges from $8M to $22M annually. Against this backdrop, AI-powered FDC investments of $500K-2M deliver ROI within months, not years.
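
The arithmetic behind these figures can be checked with a short roll-up. The sketch below simply adds the three quantified ranges from the breakdown above and computes a naive payback period. Quality risk is not quantified in the text, so it is left out, which is why the summed range lands slightly below the quoted total. The payback formula (investment divided by annual savings) is a simplifying assumption, and the $5-12M savings range is the one quoted later in this article.

```python
# Annual cost ranges in $M (low, high), copied from the breakdown above.
cost_components = {
    "direct labor": (0.6, 1.2),
    "production interruption": (2.0, 5.0),
    "missed real faults": (5.0, 15.0),
}
total_low = sum(low for low, _ in cost_components.values())
total_high = sum(high for _, high in cost_components.values())

# One missed fault event: wafers scrapped times cost per wafer, in dollars.
event_low = 50 * 5_000
event_high = 200 * 50_000


def payback_months(investment_musd: float, annual_savings_musd: float) -> float:
    """Naive payback period: months of savings needed to cover the investment."""
    return 12 * investment_musd / annual_savings_musd


slowest = payback_months(2.0, 5.0)   # largest investment, smallest savings
fastest = payback_months(0.5, 12.0)  # smallest investment, largest savings

print(f"Quantified annual cost: ${total_low:.1f}M to ${total_high:.1f}M")
print(f"Single missed event: ${event_low:,} to ${event_high:,}")
print(f"Payback: {fastest:.1f} to {slowest:.1f} months")
```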

How Should Fabs Implement AI-Based FDC?

Successful AI FDC deployment requires a structured approach that addresses data, models, integration, and organizational change management.

Step 1: Data Infrastructure Audit. AI models are only as good as their training data. Before deploying AI FDC, fabs must ensure comprehensive sensor data collection with consistent timestamps, proper tagging of equipment events (maintenance, qualification, recipe changes), and validated data pipelines from tool to analytics platform. Common issues include missing sensor channels, inconsistent sampling rates, and unlabeled maintenance events that corrupt training data.
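
A first-pass audit for two of the issues named in Step 1, missing sensor channels and inconsistent sampling rates, might look like the sketch below. The trace format (a dict mapping channel name to a list of timestamps in seconds), the channel names, and the tolerance are all hypothetical; a real audit would read from the fab's own data pipeline and also cross-check maintenance logs.

```python
def audit_run(trace, required_channels, expected_period_s, tolerance_s=0.01):
    """Return a list of human-readable data-quality issues for one run."""
    issues = []
    for channel in required_channels:
        timestamps = trace.get(channel)
        if not timestamps:
            issues.append(f"{channel}: missing channel")
            continue
        # Compare each gap between consecutive samples to the expected period.
        gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
        irregular = [g for g in gaps if abs(g - expected_period_s) > tolerance_s]
        if irregular:
            issues.append(f"{channel}: {len(irregular)} irregular sampling interval(s)")
    return issues


# Hypothetical run sampled at 10 Hz; rf_power has a dropout, gas_flow is absent.
trace = {
    "chamber_pressure": [0.0, 0.1, 0.2, 0.3],
    "rf_power": [0.0, 0.1, 0.35, 0.45],
}
required = ["chamber_pressure", "rf_power", "gas_flow"]
issues = audit_run(trace, required, expected_period_s=0.1)
for issue in issues:
    print(issue)
```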

Step 2: Model Development and Validation. Start with 2-3 high-impact process modules where false alarm rates are highest and fault costs are greatest. Develop baseline models using 3-6 months of historical data. Validate models against known fault events to ensure detection sensitivity. Run AI FDC in shadow mode alongside existing systems for 4-8 weeks to build confidence before switching over.
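
During shadow mode, both systems see the same runs, so their alarms can be scored against the same set of confirmed fault events. The sketch below computes the share of alarms that were false and the share of confirmed faults that were caught. The run IDs and alarm sets are invented purely to show the calculation and do not represent measured results.

```python
def alarm_metrics(alarmed_runs, confirmed_fault_runs):
    """Score one system's alarms against engineer-confirmed fault runs."""
    alarmed, faults = set(alarmed_runs), set(confirmed_fault_runs)
    true_alarms = alarmed & faults
    false_alarm_share = 1 - len(true_alarms) / len(alarmed) if alarmed else 0.0
    detection_rate = len(true_alarms) / len(faults) if faults else 0.0
    return {"false_alarm_share": false_alarm_share, "detection_rate": detection_rate}


# Invented example data: run IDs that alarmed in each system during shadow mode.
confirmed_faults = {3, 7, 12, 15}
legacy_alarms = {1, 2, 3, 4, 5, 6, 7, 8, 9, 10}
shadow_ai_alarms = {3, 7, 12, 20}

legacy = alarm_metrics(legacy_alarms, confirmed_faults)
shadow = alarm_metrics(shadow_ai_alarms, confirmed_faults)
print("legacy:", legacy)
print("shadow:", shadow)
```

Tracking both numbers matters: a model that simply alarms less often would lower the false alarm share while also missing more real faults.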

Step 3: Integration with Fab Systems. AI FDC must integrate with Manufacturing Execution Systems (MES), equipment automation, and engineering workflows. Alarm routing, escalation protocols, and lot disposition rules need to be updated to leverage the improved signal quality. MST’s platform provides pre-built integrations with major MES platforms and SECS/GEM equipment interfaces, reducing integration effort from months to weeks.

Step 4: Continuous Learning. AI FDC models must evolve as equipment ages, recipes change, and new fault modes emerge. Implement automated model retraining pipelines that incorporate confirmed fault events and engineer feedback. The best AI FDC systems improve with every interaction, creating a virtuous cycle of increasing accuracy.
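
One simple way to connect engineer feedback to retraining is a rolling monitor over alarm dispositions. The sketch below is an assumption about how such a trigger could work, not a description of any vendor's pipeline: the class name, window size, and 25% threshold are all hypothetical.

```python
from collections import deque


class FeedbackMonitor:
    """Flags a model for retraining when recent alarms are too often false."""

    def __init__(self, window=50, max_false_share=0.25):
        self.dispositions = deque(maxlen=window)  # True = engineer confirmed a real fault
        self.max_false_share = max_false_share

    def record(self, was_real_fault: bool) -> None:
        """Store the engineer's verdict on one alarm."""
        self.dispositions.append(was_real_fault)

    def needs_retraining(self) -> bool:
        """Only decide once the window is full, to avoid reacting to a few alarms."""
        if len(self.dispositions) < self.dispositions.maxlen:
            return False
        false_share = self.dispositions.count(False) / len(self.dispositions)
        return false_share > self.max_false_share


monitor = FeedbackMonitor(window=10)
for verdict in [True] * 8 + [False] * 2:
    monitor.record(verdict)
print(monitor.needs_retraining())  # window is full with 20% false alarms

for verdict in [False, False]:
    monitor.record(verdict)
print(monitor.needs_retraining())  # oldest verdicts drop out, false share rises to 40%
```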

What Results Can Fabs Expect from AI-Powered FDC?

Production deployments across multiple semiconductor fabs provide a clear picture of achievable outcomes.

Within the first 90 days of AI FDC deployment on a process module, fabs typically see false alarm rates drop by 50-60%. By month six, as models are refined with production feedback, false alarm reductions reach 70% or more. This translates to immediate productivity gains: engineers reclaim most of the roughly 2.5 hours per shift previously spent on false alarm investigation.

Detection of real faults improves progressively. Early deployment typically catches 15-20% more real faults than the legacy system. As the AI models accumulate production data and engineer-validated fault labels, detection improvement reaches 25-40% within 12 months. Critically, the AI system detects faults 10-30 minutes earlier than traditional systems by identifying precursor patterns before full fault manifestation.

The financial impact is substantial and measurable. A mid-size 300mm fab (50,000 wafer starts per month) deploying AI FDC across all critical process modules can expect annual savings of $5-12M from reduced scrap, improved engineering productivity, and higher effective throughput. One MST customer reported preventing three major contamination events in the first year of NeuroBox FDC deployment — events that would have historically cost $2-4M each in scrapped wafers.

Why Is AI FDC a Strategic Imperative for 2026 and Beyond?

Several industry trends make AI-powered FDC increasingly urgent. Process complexity continues to escalate — EUV lithography, gate-all-around transistors, and hybrid bonding introduce new failure modes that traditional FDC rules cannot anticipate. Equipment sensor density is growing 20-30% annually, overwhelming rule-based systems but feeding AI models with richer training data.

The semiconductor industry’s push toward zero-defect manufacturing for automotive and AI chip applications raises the bar further. Automotive quality requirements (below 1 defective part per million, DPPM) demand fault detection capabilities that univariate statistical limits alone cannot achieve. AI FDC is becoming a baseline requirement for fabs serving these markets.

The competitive implications are clear. Fabs that deploy AI FDC capture a compounding advantage: better detection leads to better yield, which generates more data, which improves models further. Those relying on traditional FDC face an accelerating disadvantage as process complexity increases.

MST’s NeuroBox platform provides a production-proven path to AI FDC deployment. With installations across leading semiconductor fabs in Asia, the platform has processed billions of wafer-level data points and demonstrated consistent 70%+ false alarm reduction across diverse process environments. For semiconductor manufacturers seeking to transform FDC from a source of frustration into a genuine competitive advantage, the technology is ready and the business case is overwhelming.
