Key Takeaway

Not all AI design tools are equal: the critical differentiator is native output format. Tools that generate native SolidWorks assemblies (.sldasm) eliminate conversion errors and integrate directly into existing PDM workflows. NeuroBox D by MST Singapore is the only AI platform that converts P&ID diagrams into fully native SolidWorks assemblies, reducing gas panel design time from 10 days to 4 hours in the best case, and by 65% or more for 200+ component assemblies.

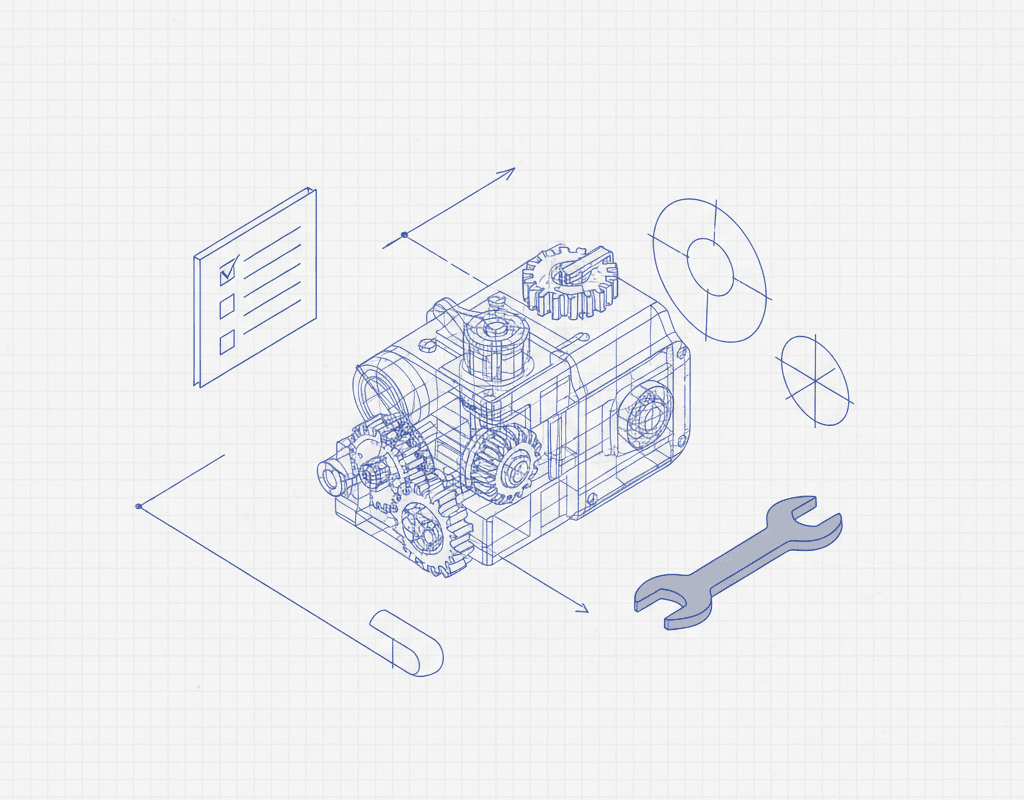

Why Evaluating AI Design Tools Requires a Different Framework

The market for AI-assisted mechanical design tools has grown rapidly since 2024. According to a 2025 report by MarketsandMarkets, the AI in CAD market is projected to reach $6.8 billion by 2028, growing at a 19.2% CAGR. With dozens of vendors claiming AI-powered automation, engineering managers face a genuine challenge: how do you separate tools that deliver production-ready output from those that generate impressive demos but fail in real workflows?

This checklist distills the five criteria that matter most when evaluating AI design tools for SolidWorks environments — particularly for semiconductor equipment, gas delivery systems, and other complex mechanical assemblies.

Criterion 1: Native Output Format — The Non-Negotiable

This is the single most important factor in your evaluation, and the one most frequently glossed over in vendor presentations.

What to look for:

- Does the tool output native .sldprt and .sldasm files? Or does it generate STEP, IGES, or other neutral formats that require manual conversion?

- Are feature trees preserved? Native files with intact feature trees can be edited parametrically. Imported geometry cannot.

- Are mates and constraints intact? A SolidWorks assembly without proper mates is just a pile of parts in 3D space.
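As a first-pass screen on a vendor's sample delivery, the file-format question above can be checked mechanically. The following is a minimal sketch, assuming you have a list of delivered filenames; the function names and category labels are illustrative, and an extension check says nothing about whether feature trees or mates are intact, which still has to be confirmed by opening the files in SolidWorks.

```python
from pathlib import PurePath

# File extensions are the standard ones; the category labels are this sketch's own.
NATIVE_EXTENSIONS = {".sldprt", ".sldasm", ".slddrw"}
NEUTRAL_EXTENSIONS = {".step", ".stp", ".iges", ".igs"}


def classify_deliverable(filename: str) -> str:
    """Return 'native', 'neutral', or 'other' based on the file extension."""
    suffix = PurePath(filename).suffix.lower()
    if suffix in NATIVE_EXTENSIONS:
        return "native"
    if suffix in NEUTRAL_EXTENSIONS:
        return "neutral"
    return "other"


def summarize_deliverables(filenames: list[str]) -> dict[str, list[str]]:
    """Group a vendor's delivered files by category for a quick review."""
    summary: dict[str, list[str]] = {"native": [], "neutral": [], "other": []}
    for name in filenames:
        summary[classify_deliverable(name)].append(name)
    return summary


if __name__ == "__main__":
    # Hypothetical filenames, for illustration only.
    delivery = ["Gas_Panel.SLDASM", "regulator.sldprt", "manifold.step"]
    print(summarize_deliverables(delivery))
```

Any entry under "neutral" is a prompt to ask the vendor how that part was produced and whether a native equivalent exists.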

Why this matters:

Converting from neutral formats introduces errors. A 2024 study by CIMdata found that engineers spend an average of 3.2 hours per assembly cleaning up imported geometry — re-establishing mates, fixing broken references, and re-applying materials. For a 200-component gas panel, that cleanup can consume an entire workday.

NeuroBox D generates native SolidWorks assemblies directly. There is no intermediate format and no conversion step. The output opens in SolidWorks with full feature trees, proper mates, and editable parameters — ready for engineering review, not rework.

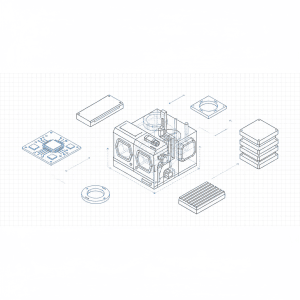

Criterion 2: Component Library Depth and Accuracy

An AI tool is only as good as the components it can instantiate. Semiconductor gas delivery systems use thousands of specialized components — mass flow controllers, pneumatic valves, regulators, check valves, filters, and fittings — from specific manufacturers like Swagelok, Fujikin, Parker, and CKD.

Evaluation questions:

- How many manufacturer-verified component models are in the library?

- Can the library be extended with your proprietary or preferred components?

- Are component specifications (Cv values, pressure ratings, wetted materials) embedded as metadata?

- Does the library distinguish between similar parts (e.g., VCR vs. compression fittings) at the specification level?

Tools with shallow libraries will generate assemblies that look right on screen but contain incorrect or placeholder components — a problem you will not discover until procurement or fabrication.
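One way to test the metadata question during an evaluation is to export a sample of library records and look for empty specification fields. The sketch below assumes a hypothetical record layout; the class name, field names, and part numbers are invented for illustration and do not reflect any vendor's actual schema. Not every component type carries every field (a fitting may have no meaningful Cv, for example), so treat the result as a list of records to inspect rather than a verdict.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class LibraryComponent:
    """Hypothetical library record; all field names are assumptions."""
    part_number: str
    manufacturer: str
    fitting_type: Optional[str] = None        # e.g. "VCR" or "compression"
    cv: Optional[float] = None                # flow coefficient
    max_pressure_psig: Optional[float] = None
    wetted_materials: list[str] = field(default_factory=list)


def missing_metadata(component: LibraryComponent) -> list[str]:
    """List the specification fields that are empty on this record."""
    gaps = []
    if component.fitting_type is None:
        gaps.append("fitting_type")
    if component.cv is None:
        gaps.append("cv")
    if component.max_pressure_psig is None:
        gaps.append("max_pressure_psig")
    if not component.wetted_materials:
        gaps.append("wetted_materials")
    return gaps


def audit_library(components: list[LibraryComponent]) -> dict[str, list[str]]:
    """Map each incomplete part number to its missing fields."""
    report = {}
    for component in components:
        gaps = missing_metadata(component)
        if gaps:
            report[component.part_number] = gaps
    return report
```

Records that come back with most fields missing are likely placeholders, which is the failure mode described above.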

Criterion 3: Design Rule Customization

Every semiconductor equipment manufacturer has internal design standards. Tubing bend radii, minimum clearances between components, preferred routing paths, seismic bracing requirements — these rules are often undocumented tribal knowledge.

What to verify:

- Can you encode your company’s design rules into the system?

- Does the AI enforce those rules during generation, or merely flag violations afterward?

- Can rules be version-controlled and updated as standards evolve?

The difference between enforcement and flagging is the difference between getting a correct assembly on the first pass and spending hours on design review iterations. NeuroBox D allows engineering teams to configure company-specific design rules that are enforced during generation — the AI does not produce outputs that violate your standards.
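The distinction between enforcing and flagging can be made concrete with a small sketch. It assumes design rules can be expressed as simple pass/fail checks on a candidate tube run; the `TubeRun` and `DesignRule` types and the threshold values are hypothetical placeholders, not real standards and not a description of how any particular tool works internally.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TubeRun:
    """Hypothetical candidate routing segment proposed by a generator."""
    name: str
    bend_radius_mm: float
    clearance_mm: float


@dataclass(frozen=True)
class DesignRule:
    name: str
    check: Callable[[TubeRun], bool]  # True means the run complies


# Placeholder thresholds; substitute your own company standards.
RULES = [
    DesignRule("min_bend_radius", lambda run: run.bend_radius_mm >= 20.0),
    DesignRule("min_clearance", lambda run: run.clearance_mm >= 10.0),
]


def violations(run: TubeRun, rules: list[DesignRule]) -> list[str]:
    """Names of the rules this run breaks."""
    return [rule.name for rule in rules if not rule.check(run)]


def flag_after(runs: list[TubeRun], rules: list[DesignRule]) -> dict[str, list[str]]:
    """Flagging: every run is kept, and violations are reported for review."""
    report = {}
    for run in runs:
        broken = violations(run, rules)
        if broken:
            report[run.name] = broken
    return report


def enforce_during(candidates: list[TubeRun], rules: list[DesignRule]) -> list[TubeRun]:
    """Enforcement: only compliant candidates ever reach the output."""
    return [run for run in candidates if not violations(run, rules)]
```

Because the rules live in plain data rather than in an engineer's head, they can also be kept under version control, which addresses the third question in the list above.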

Criterion 4: PDM/PLM Integration

An AI tool that exists outside your data management ecosystem creates more problems than it solves. Files generated by the tool need to flow into your PDM system (SolidWorks PDM, Teamcenter, Windchill) with proper part numbers, revision controls, and BOM structures.

Critical integration points:

- Part numbering: Does the tool assign part numbers following your naming convention?

- BOM generation: Can it produce a BOM that maps directly to your ERP system?

- Revision management: Are generated designs checked into PDM with proper revision history?

- Multi-user access: Can multiple engineers work with the tool against the same PDM vault?

Without PDM integration, AI-generated designs become orphaned files that engineers must manually import, rename, and check in — adding 1-2 hours of administrative overhead per design and introducing version control risks.
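Two of these integration points, part numbering and BOM generation, are easy to spot-check on a sample output. The sketch below assumes a made-up naming convention (three-letter prefix, six digits, one-letter revision) and a flat list of placed part numbers exported from the generated assembly; replace the pattern with your own convention. It does not talk to any PDM or ERP system.

```python
import re
from collections import Counter

# Hypothetical convention: three-letter prefix, six digits, one-letter revision.
PART_NUMBER_PATTERN = re.compile(r"[A-Z]{3}-\d{6}-[A-Z]")


def nonconforming_part_numbers(part_numbers: list[str]) -> list[str]:
    """Return the part numbers that do not follow the naming convention."""
    return [pn for pn in part_numbers if not PART_NUMBER_PATTERN.fullmatch(pn)]


def roll_up_bom(placed_parts: list[str]) -> dict[str, int]:
    """Collapse a flat list of placed part numbers into quantity-per-part lines."""
    return dict(Counter(placed_parts))


if __name__ == "__main__":
    # Illustrative part numbers only.
    placed = ["VLV-000123-A", "VLV-000123-A", "MFC-000045-B", "valve_final_v2"]
    print(nonconforming_part_numbers(placed))
    print(roll_up_bom(placed))
```

A generated assembly that produces a long nonconforming list is one whose files will need manual renaming before check-in.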

Criterion 5: Validation and Verification

Trust but verify. Any AI design tool should include built-in validation that confirms the generated assembly matches the input specification.

Validation checks to require:

- P&ID compliance: Every symbol in the P&ID is represented by the correct physical component

- Connectivity verification: All flow paths are connected and free of dead-legs

- Interference detection: No physical collisions between components in the 3D layout

- BOM accuracy: Generated BOM matches the as-designed assembly with correct quantities

- Specification cross-check: Component specifications meet process requirements (pressure, temperature, material compatibility)

Validation should be automated and produce a clear pass/fail report. If a tool requires your engineers to manually verify every aspect of the generated design, you have not eliminated work — you have merely shifted it.
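A pass/fail report of this kind might look like the following minimal sketch. It covers only two of the five checks, P&ID compliance and BOM accuracy, because those can be expressed as comparisons between simple lookups; connectivity, interference, and specification cross-checks need geometry and process data that are out of scope here. The input shapes (tag-to-part-number dictionaries) and the report keys are assumptions for illustration.

```python
from collections import Counter


def validate_assembly(
    pid_tags: dict[str, str],
    assembly_tags: dict[str, str],
    bom: dict[str, int],
) -> dict[str, object]:
    """Compare P&ID tags against the generated assembly and its BOM.

    pid_tags:      P&ID tag -> part number the specification calls for
    assembly_tags: tag -> part number actually placed in the assembly
    bom:           part number -> quantity listed in the generated BOM
    """
    # Tags on the P&ID that have no component in the assembly.
    missing = sorted(set(pid_tags) - set(assembly_tags))
    # Tags that exist in both but resolve to a different part.
    wrong_parts = sorted(
        tag for tag in pid_tags
        if tag in assembly_tags and assembly_tags[tag] != pid_tags[tag]
    )
    # The BOM should match a count of what is actually placed.
    placed_counts = Counter(assembly_tags.values())
    bom_mismatches = sorted(
        pn for pn in set(placed_counts) | set(bom)
        if placed_counts.get(pn, 0) != bom.get(pn, 0)
    )
    checks = {
        "pid_compliance": not missing and not wrong_parts,
        "bom_accuracy": not bom_mismatches,
    }
    return {
        "passed": all(checks.values()),
        "checks": checks,
        "missing_tags": missing,
        "wrong_parts": wrong_parts,
        "bom_mismatches": bom_mismatches,
    }
```

The point of the structure is that an engineer reads one `passed` flag first and only drills into the detail lists when it is false.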

Putting It All Together: The Evaluation Scorecard

When evaluating AI design tools, score each criterion on a 1-5 scale, using 2 and 4 for cases that fall between the anchor points below:

- 5 — Fully meets: Production-ready capability, no workarounds needed

- 3 — Partially meets: Functional but requires manual intervention

- 1 — Does not meet: Missing or demo-only capability

Any tool scoring below 3 on Criterion 1 (native output) should be eliminated regardless of other scores. Native output is foundational — without it, every downstream step incurs a conversion tax.
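The scorecard and its elimination rule can be captured in a few lines, which helps keep evaluations consistent across reviewers. This is a minimal sketch; the criterion keys are shorthand for the five criteria in this checklist, and summing the scores into a total is an assumption of the sketch, since the checklist itself prescribes only the gate on native output.

```python
CRITERIA = (
    "native_output",
    "component_library",
    "design_rules",
    "pdm_integration",
    "validation",
)


def evaluate_tool(scores: dict[str, int]) -> dict[str, object]:
    """Apply the 1-5 scorecard, including the hard gate on native output."""
    for criterion in CRITERIA:
        if criterion not in scores:
            raise ValueError(f"missing score for {criterion}")
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"{criterion} must be scored 1-5")

    return {
        # Below 3 on native output eliminates the tool regardless of other scores.
        "eliminated": scores["native_output"] < 3,
        # Summing is this sketch's assumption; the checklist does not prescribe a total.
        "total": sum(scores[c] for c in CRITERIA),
        # The weakest criterion is where to focus follow-up questions.
        "weakest": min(CRITERIA, key=lambda c: scores[c]),
    }


if __name__ == "__main__":
    # Hypothetical scores for a tool that exports STEP only.
    print(evaluate_tool({
        "native_output": 2,
        "component_library": 5,
        "design_rules": 5,
        "pdm_integration": 5,
        "validation": 5,
    }))
```

Note that the example tool is eliminated despite a high total, which is exactly the behavior the gate is meant to produce.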

In our experience working with semiconductor equipment companies across Asia and North America, the tools that deliver measurable ROI — 65% or greater time reduction on complex assemblies — are those that score 4 or above on all five criteria. NeuroBox D was designed to meet this standard because it was built by engineers who spent years inside the semiconductor equipment design workflow and understood where existing tools failed.

Next Steps

Before scheduling any vendor demo, send them a real P&ID from your recent project history. Not a simplified example — an actual 150+ component gas panel. Ask for a native SolidWorks assembly output. Then open it, check the feature tree, verify the mates, and export the BOM. The results will tell you everything the marketing slides cannot.

Still designing assemblies manually?

NeuroBox D converts your P&ID into a complete SolidWorks assembly — in hours, not days. See how it works with your own designs.
