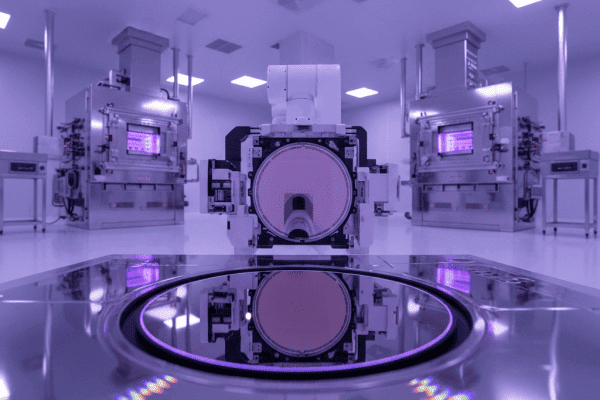

- 1. Cpk logic in the single-chamber era

- 2. Three common calculation errors in multi-chamber scenarios

- 3. Three correct approaches for multi-chamber

- 4. Where AI helps in multi-chamber Cpk monitoring

- 5. Real case: a CVD tool's Cpk rescue

- 6. Recommendations for engineering teams

Key Takeaways

In multi-chamber parallel configurations, naively pooling all chamber data to compute one Cpk seriously underestimates process capability. Typical example: a 4-chamber CVD tool where each chamber individually has Cpk = 1.67, but the pooled Cpk drops to about 1.20 because chamber-to-chamber variation adds extra variance. This article covers three correct ways to compute multi-chamber Cpk, how to treat chamber matching as a separate engineering criterion, and how AI helps catch the “one chamber drifting but still within spec” pattern that monthly human-prepared reports miss.

1. Cpk logic in the single-chamber era

The classical process capability index formula is simple: Cpk = min((USL − μ)/(3σ), (μ − LSL)/(3σ)). When a process runs on a single chamber, you plug in μ (process mean) and σ (process standard deviation) directly. Commonly quoted thresholds: Cpk ≥ 1.33 acceptable, ≥ 1.67 excellent, ≥ 2.0 world-class.
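
As a minimal sketch of the formula above, the function below computes Cpk from a mean, a standard deviation, and the two spec limits. The film-thickness numbers (target 1000 Å, spec ±20 Å, σ = 4 Å) are hypothetical and chosen only to show how an off-center mean lowers Cpk even when σ is unchanged.

```python
def cpk(mean: float, sigma: float, lsl: float, usl: float) -> float:
    """Cpk = min((USL - mean) / (3*sigma), (mean - LSL) / (3*sigma))."""
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    return min((usl - mean) / (3 * sigma), (mean - lsl) / (3 * sigma))


# Hypothetical example: target 1000 Å, spec ±20 Å, sigma 4 Å.
centered = cpk(mean=1000.0, sigma=4.0, lsl=980.0, usl=1020.0)
shifted = cpk(mean=1005.0, sigma=4.0, lsl=980.0, usl=1020.0)

print(f"centered: {centered:.2f}, shifted: {shifted:.2f}")
# prints "centered: 1.67, shifted: 1.25"
```

A 5 Å shift in the mean costs about 0.4 in Cpk here, which is why chamber-to-chamber mean offsets matter later in the article.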

This works fine for a single chamber or single tool. In modern fabs with multi-chamber parallel architectures, things get more complicated.

2. Three common calculation errors in multi-chamber scenarios

Modern process tools increasingly use multi-chamber parallel designs: 2–6 chambers per CVD tool and 4–8 per etch cluster are now common. In this context, Cpk is commonly miscomputed in three ways:

Error 1: Simply pooling all chamber data into one Cpk

The most common error. Concatenate thickness data from 4 chambers, compute μ and σ, plug into the formula. The problem: this σ includes both “between-chamber variance” and “within-chamber variance.” Cpk’s physical meaning should reflect only “intrinsic process variation” — not the extra variance introduced by imperfect chamber matching.

Typical numbers: within-chamber σ = 2.5 Å, between-chamber mean σ = 3.5 Å → pooled σ ≈ √(2.5² + 3.5²) ≈ 4.3 Å. With spec ±20 Å, single-chamber Cpk = 20/(3×2.5) = 2.67; pooled Cpk = 20/(3×4.3) = 1.55. A “world-class” process gets reported as merely “acceptable.”
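
The arithmetic in the paragraph above can be checked with a few lines of Python. This sketch assumes, as the text implicitly does, that the process is centered on target and that the within-chamber and between-chamber components are independent, so their variances add.

```python
import math

sigma_within = 2.5   # Å, within-chamber standard deviation
sigma_between = 3.5  # Å, standard deviation of the chamber means
half_spec = 20.0     # Å, spec is target ± 20 Å (process assumed centered)

# Independent variance components add; standard deviations do not.
sigma_pooled = math.sqrt(sigma_within**2 + sigma_between**2)

cpk_single = half_spec / (3 * sigma_within)
cpk_pooled = half_spec / (3 * sigma_pooled)

print(f"sigma_pooled       = {sigma_pooled:.2f} Å")  # 4.30
print(f"single-chamber Cpk = {cpk_single:.2f}")      # 2.67
print(f"pooled Cpk         = {cpk_pooled:.2f}")      # 1.55
```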

Error 2: Reporting Cpk from only the best chambers

The opposite mistake: cherry-pick the well-matched chambers, compute Cpk, and report it as the tool's overall value. This is common during qualification and audits. The result: Cpk looks great on paper, but customers doing incoming inspection on real multi-chamber output frequently see out-of-spec lots.

Error 3: Using overall σ as within σ

Some teams conflate Cpk and Ppk (the process performance index): they take the σ of all data (σ_overall) and plug it into the Cpk formula. Strictly, this yields Ppk, not Cpk, because Cpk is defined on the within-subgroup σ. Customers auditing your data package are likely to flag this as non-compliant.
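
To make the distinction concrete, here is a small sketch that computes both indices from the same data. The readings, chamber names, and spec limits are hypothetical. Each chamber is treated as one subgroup, and equal sample sizes are assumed so that the pooled within variance is simply the mean of the per-chamber variances.

```python
from statistics import mean, stdev, variance

LSL, USL = 980.0, 1020.0  # hypothetical spec: 1000 ± 20 Å

# Hypothetical thickness readings (Å); equal sample sizes per chamber.
chambers = {
    "CH1": [1003.0, 1005.0, 1001.0, 1004.0, 1002.0],
    "CH2": [997.0, 999.0, 995.0, 998.0, 996.0],
    "CH3": [1000.0, 1002.0, 998.0, 1001.0, 999.0],
}


def capability(mu, sigma):
    """Shared min(...) formula; the choice of sigma decides Cpk vs Ppk."""
    return min((USL - mu) / (3 * sigma), (mu - LSL) / (3 * sigma))


all_readings = [x for readings in chambers.values() for x in readings]
grand_mean = mean(all_readings)

# Overall sigma: all readings as one sample, so chamber offsets inflate it.
sigma_overall = stdev(all_readings)

# Within sigma: pooled per-chamber variance, chamber offsets removed.
sigma_within = mean([variance(r) for r in chambers.values()]) ** 0.5

ppk = capability(grand_mean, sigma_overall)
cpk = capability(grand_mean, sigma_within)

print(f"sigma_overall = {sigma_overall:.2f} Å -> Ppk = {ppk:.2f}")
print(f"sigma_within  = {sigma_within:.2f} Å -> Cpk = {cpk:.2f}")
```

The two numbers differ only because of the chamber mean offsets, so a report should say which σ was used.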

3. Three correct approaches for multi-chamber

Approach A: Per-chamber Cpk, report the minimum

The most conservative and common approach: compute Cpk per chamber and report min(Cpk₁, Cpk₂, …, Cpkₙ) as the tool’s Cpk. Pros: transparent, no statistical tricks. Cons: it does not report the systematic offsets between chambers.

Best for: customer acceptance, early process development, chamber count ≤ 4.
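
Approach A can be sketched in a few lines. The chamber names, readings, and spec limits below are hypothetical, and five readings per chamber is far too few for a real capability study; the small sample is only to keep the example readable.

```python
from statistics import mean, stdev

LSL, USL = 980.0, 1020.0  # hypothetical spec: 1000 ± 20 Å

# Hypothetical thickness readings (Å) per chamber.
chambers = {
    "CH1": [1001.0, 1004.0, 998.0, 1002.0, 1000.0],
    "CH2": [1006.0, 1009.0, 1003.0, 1007.0, 1005.0],
    "CH3": [996.0, 1000.0, 993.0, 998.0, 994.0],
}


def cpk(readings):
    """Cpk from one chamber's own mean and sample standard deviation."""
    mu, sigma = mean(readings), stdev(readings)
    return min((USL - mu) / (3 * sigma), (mu - LSL) / (3 * sigma))


per_chamber = {name: cpk(r) for name, r in chambers.items()}
worst = min(per_chamber, key=per_chamber.get)
tool_cpk = per_chamber[worst]

for name, value in per_chamber.items():
    print(f"{name}: Cpk = {value:.2f}")
print(f"Tool Cpk (Approach A) = {tool_cpk:.2f}, set by {worst}")
```

Reporting which chamber sets the minimum, not just the minimum itself, keeps the number actionable.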

Approach B: Within-chamber Cpk + explicit chamber matching report

Separate Cpk from matching: compute Cpk using pooled within-chamber σ, and express matching via chamber mean range (e.g., “max mean difference < 5 Å”).

Reporting stability and matching as separate metrics is common practice in equipment qualification. Pros: most complete data picture, since it treats “how stable is the process” and “how consistent across chambers” as independent dimensions. Cons: customers must understand both metrics.
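
A rough sketch of an Approach B report follows. The data, the `MATCH_LIMIT` name, and the report keys are hypothetical; the 5 Å limit is taken from the example criterion above. The pooled within-chamber variance is weighted by degrees of freedom so that chambers with different sample counts are handled correctly.

```python
from statistics import mean, variance

LSL, USL = 980.0, 1020.0  # hypothetical spec: 1000 ± 20 Å
MATCH_LIMIT = 5.0         # Å, example matching criterion from the text

# Hypothetical thickness readings (Å); sample sizes may differ.
chambers = {
    "CH1": [1001.0, 1004.0, 998.0, 1002.0, 1000.0],
    "CH2": [1006.0, 1009.0, 1003.0, 1007.0, 1005.0],
    "CH3": [998.0, 1001.0, 995.0, 998.0],
}

means = {name: mean(r) for name, r in chambers.items()}

# Pooled within-chamber sigma, weighted by degrees of freedom (n_i - 1).
ss_within = sum((len(r) - 1) * variance(r) for r in chambers.values())
dof = sum(len(r) - 1 for r in chambers.values())
sigma_within = (ss_within / dof) ** 0.5

grand_mean = mean([x for r in chambers.values() for x in r])
cpk_within = min(USL - grand_mean, grand_mean - LSL) / (3 * sigma_within)
mean_range = max(means.values()) - min(means.values())

report = {
    "cpk_within": round(cpk_within, 2),
    "max_mean_diff": round(mean_range, 2),
    "matching_ok": mean_range < MATCH_LIMIT,
}
print(report)
```

With these made-up readings the within-chamber Cpk is comfortable while the matching check fails, which is exactly the situation a single pooled number would blur.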

Approach C: ANOVA-based variance decomposition

Use variance components analysis to decompose total variance into chamber-to-chamber, wafer-to-wafer, and within-wafer components, then evaluate the Cpk impact of each source.

This is the most rigorous approach with the strongest academic backing. But implementation is demanding — requires engineers familiar with variance components in JMP / Minitab, plus structured DOE sampling. Suitable for R&D-stage process characterization, less so for daily SPC reporting.
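
For intuition, the sketch below estimates variance components for the simplest case only: a one-way random-effects model with a chamber component and a residual, balanced data, and method-of-moments (ANOVA) estimators. It is a simplification of the three-level decomposition described above, not a substitute for the nested analysis that JMP or Minitab would perform, and the readings are hypothetical.

```python
from statistics import mean


def variance_components(groups):
    """One-way random-effects ANOVA for balanced data.

    Returns (var_between, var_within) estimated by the method of moments.
    """
    k = len(groups)
    n = len(groups[0])
    if any(len(g) != n for g in groups):
        raise ValueError("this sketch assumes equal sample sizes per chamber")
    grand = mean([x for g in groups for x in g])
    group_means = [mean(g) for g in groups]
    ms_between = n * sum((m - grand) ** 2 for m in group_means) / (k - 1)
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    ms_within = ss_within / (k * (n - 1))
    # E[MS_between] = var_within + n * var_between; truncate negatives at 0.
    var_between = max(0.0, (ms_between - ms_within) / n)
    return var_between, ms_within


# Hypothetical thickness readings (Å), one list per chamber.
data = [
    [1003.0, 1005.0, 1001.0, 1004.0, 1002.0],
    [997.0, 999.0, 995.0, 998.0, 996.0],
    [1000.0, 1002.0, 998.0, 1001.0, 999.0],
]
var_between, var_within = variance_components(data)
share = var_between / (var_between + var_within)
print(f"between = {var_between:.2f} Å², within = {var_within:.2f} Å², "
      f"chamber share = {share:.0%}")
```

A large chamber share points toward matching work; a large residual share points toward the process itself.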

4. Where AI helps in multi-chamber Cpk monitoring

Traditional SPC reports are post-hoc — weekly or monthly Cpk reports. In multi-chamber scenarios, what really matters is catching in real time that “a chamber has started drifting but individually still passes Cpk.” This pattern is exactly what AI monitoring is suited for.

Three things AI adds

1. Early detection of chamber-matching drift. When a 4-chamber tool’s matching slowly degrades from “max mean diff 3 Å” to “6 Å,” individual chamber Cpk may still be fine, but overall process capability has dropped. AI tracks matching metrics in real time, catching drift 2–4 weeks before monthly human reports.

2. Chamber-specific root cause analysis. When matching worsens, AI correlates other process parameters (RF power, gas flow, temperature) per chamber to isolate “why did Chamber 3 drift?” — is it heater aging, MFC drift, O-ring degradation? This multi-dimensional correlation is beyond manual analysis.

3. Predictive PM scheduling. Based on chamber-specific matching trends, forecast “Chamber 3 will need PM in 10–15 days,” letting customers schedule maintenance while Cpk is still compliant — avoiding tool-level qualification failures caused by chamber drift.
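
The matching metric that such monitoring tracks can be illustrated with a plain rule-based baseline. To be clear, this is not an AI method and not how any particular product works; it is a hypothetical sketch that computes the weekly maximum chamber mean difference, names the chamber farthest from the center, and raises an alarm when a limit is exceeded. The function name, data layout, and 5 Å limit are all assumptions.

```python
from statistics import mean


def matching_trend(weekly_readings, limit):
    """weekly_readings: list of {chamber: [readings]} dicts, oldest first.

    Returns one (max_mean_diff, farthest_chamber, alarm) tuple per week.
    """
    trend = []
    for week in weekly_readings:
        means = {ch: mean(vals) for ch, vals in week.items()}
        center = mean(list(means.values()))
        farthest = max(means, key=lambda ch: abs(means[ch] - center))
        diff = max(means.values()) - min(means.values())
        trend.append((diff, farthest, diff > limit))
    return trend


# Hypothetical data: CH3 drifts upward by about 2 Å per week.
weeks = [
    {"CH1": [1000.0, 1001.0], "CH2": [999.0, 1000.0], "CH3": [1001.0, 1003.0]},
    {"CH1": [1000.0, 1001.0], "CH2": [999.0, 1000.0], "CH3": [1003.0, 1005.0]},
    {"CH1": [1000.0, 1001.0], "CH2": [999.0, 1000.0], "CH3": [1005.0, 1007.0]},
]
trend = matching_trend(weeks, limit=5.0)
for i, (diff, chamber, alarm) in enumerate(trend, start=1):
    print(f"week {i}: max mean diff = {diff:.1f} Å, farthest = {chamber}, alarm = {alarm}")
```

A fixed threshold only fires once the limit is crossed; the earlier detection described above would come from modeling the trend and correlating it with other chamber parameters.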

5. Real case: a CVD tool’s Cpk rescue

The following is an illustrative, anonymized example for a 4-chamber CVD tool, not a real customer project.

Initial state: per-chamber Cpk = 1.71 / 1.68 / 1.65 / 1.63; pooled Cpk = 1.24.

Customer acceptance standard: “overall Cpk ≥ 1.33.” By the pooled method, the tool failed. The OEM’s first reaction: “retune process parameters.” This is the wrong direction.

The correct diagnosis: the standard deviation of the chamber means was σ_between = 3.2 Å, while the pooled within-chamber σ was only 2.4 Å. Between-chamber variance dominates, so the problem isn’t the process but the matching across the 4 chambers.

The fix: apply chamber-specific offset compensation — in the recipe, give each chamber a small parameter offset (e.g., Chamber 3’s deposition time +0.8 seconds) to align chamber means. After adjustment, σ_between dropped to 1.1 Å, and pooled Cpk rose to 1.58.
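
The diagnosis can be quantified from the two σ values alone. The sketch below computes what fraction of total variance comes from chamber-to-chamber differences before and after compensation. It assumes the components are independent and that the within-chamber σ stays at 2.4 Å after the offsets are applied, which the case description does not state. It does not try to reproduce the quoted Cpk values, since the spec limits and chamber means are not given.

```python
def between_share(sigma_within, sigma_between):
    """Return (fraction of variance from chamber differences, total sigma)."""
    total_var = sigma_within**2 + sigma_between**2
    return sigma_between**2 / total_var, total_var**0.5


# Values from the case; sigma_within assumed unchanged by the offsets.
before_share, before_sigma = between_share(sigma_within=2.4, sigma_between=3.2)
after_share, after_sigma = between_share(sigma_within=2.4, sigma_between=1.1)

print(f"before: total sigma = {before_sigma:.2f} Å, chamber share = {before_share:.0%}")
print(f"after:  total sigma = {after_sigma:.2f} Å, chamber share = {after_share:.0%}")
```

Before compensation, roughly two thirds of the variance is chamber mismatch, which is why retuning the process itself would have addressed the smaller term.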

The key insight: “improving Cpk” is not always about changing the process — it may be about changing chamber matching. Only after variance decomposition can you pick the right remedy.

6. Recommendations for engineering teams

Recommendation 1: Always report Cpk together with its calculation method. “Cpk = 1.67” is ambiguous in a multi-chamber context, so state which algorithm produced it (worst single chamber / pooled overall / pooled within). Standardize this in both customer communication and internal audits.

Recommendation 2: Introduce variance components thinking early. You don’t need ANOVA every time, but engineers must habitually ask “where is this variance coming from?” Tools and training should follow — JMP’s Variance Components feature isn’t hard to use.

Recommendation 3: Monitor matching and Cpk separately. Multi-chamber tool health has two independent dimensions: process variation (Cpk) and inter-chamber consistency (matching). Collapsing them into one number hides where the real problem is.

Recommendation 4: Use AI for real-time trends, not post-hoc reports. Real-time AI monitoring is eroding the value of traditional SPC reports, especially for multi-chamber tools, where catching matching drift two weeks earlier can prevent a customer escape.

If you handle multi-chamber Cpk problems in a process or APC team, NeuroBox E3200 provides chamber-specific matching tracking and variance decomposition on top of existing SPC, adding “2–4 week earlier drift detection” capability. Book a 30-minute technical review.

