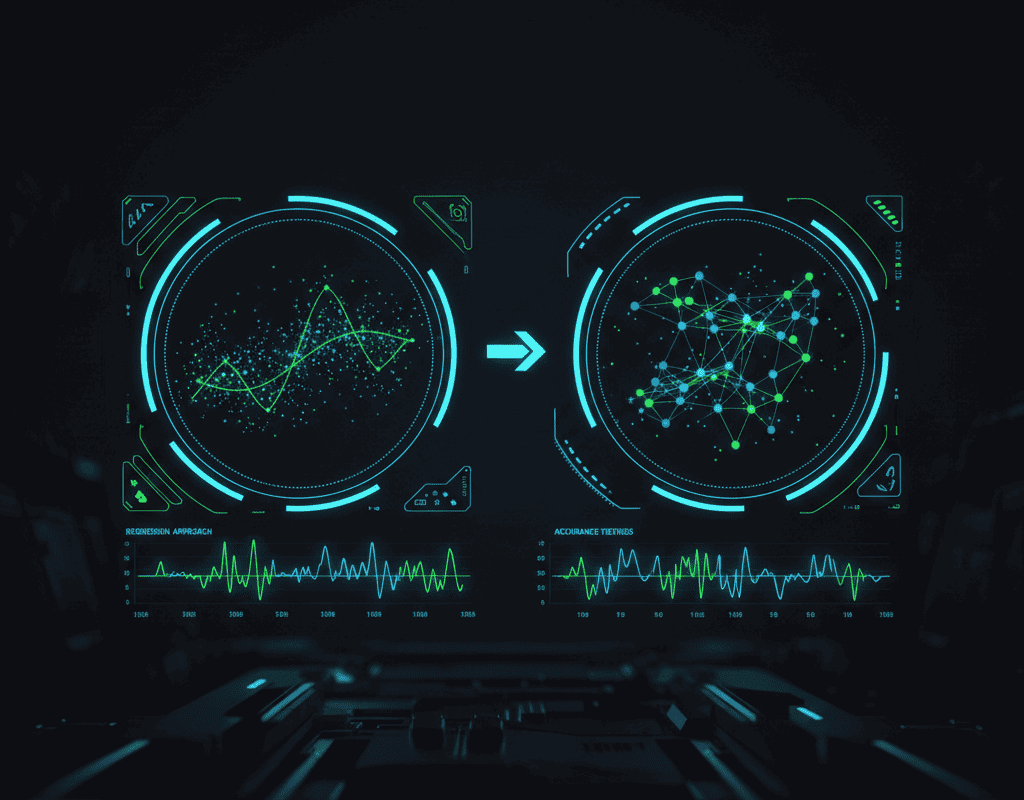

Key Takeaway

Linear regression VM models offer simplicity and interpretability, with R-squared values of 0.75-0.85 on simple processes. Deep learning models reach R-squared of 0.90-0.97 on complex multi-step processes but require about 10x more training data and offer less transparency. Hybrid models that combine physics-based feature engineering with gradient-boosted trees deliver the best practical trade-off: R-squared of 0.88-0.95 with a third to a fifth of the data that deep learning needs. NeuroBox supports all three approaches and recommends a method based on available data and process complexity.

Why Does Virtual Metrology Model Selection Matter?

Virtual Metrology (VM) — predicting wafer quality from equipment sensor data without physical measurement — has moved from academic concept to production necessity. The ITRS roadmap identifies VM as a key enabler for reducing metrology sampling rates from 100% to 10-25% while maintaining quality oversight, potentially saving fabs $2M-$10M annually in metrology tool costs and throughput gains.

But VM is only as good as its underlying prediction model. A poorly chosen model architecture leads to inaccurate predictions, false alarms, and — worst of all — missed excursions that result in scrapped wafers. The choice between linear regression, deep learning, and hybrid approaches should be driven by data characteristics, process complexity, and operational requirements rather than technology trends.

How Well Does Linear Regression Perform for VM?

Linear regression (including its extensions: Ridge, Lasso, Partial Least Squares) was the first widely deployed VM approach and remains relevant for specific applications:

Performance Profile: For single-step processes with 20-50 relevant sensor parameters and approximately linear relationships between process conditions and outcomes (e.g., thermal oxidation thickness, simple PVD film thickness), linear models achieve R-squared values of 0.75-0.85 and mean absolute prediction errors of 2-5% relative to physical measurement.

Data Requirements: Linear models can be trained on 50-200 wafers of labeled data (wafers with both sensor data and metrology measurements). Regularized variants like Lasso automatically select the most important features, reducing overfitting risk.

Interpretability: Each coefficient has a direct interpretation — “a 1% increase in RF power corresponds to a 0.3nm increase in etch depth.” Process engineers can validate and refine models by examining coefficients against their physical intuition.
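To make the coefficient reading concrete, here is a minimal sketch that fits a one-variable least-squares line in plain Python. The data points are synthetic and chosen only so the slope lands near the 0.3 nm per 1% figure quoted above; `fit_line`, `rf_power_pct`, and `etch_depth_nm` are illustrative names, not part of any VM product.

```python
def fit_line(x, y):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Synthetic example data (assumption): RF power deviation from setpoint in %,
# and the etch depth measured by physical metrology in nm.
rf_power_pct = [-2.0, -1.0, 0.0, 1.0, 2.0]
etch_depth_nm = [99.4, 99.7, 100.0, 100.3, 100.6]

slope, intercept = fit_line(rf_power_pct, etch_depth_nm)
print(f"{slope:.2f} nm of etch depth per 1% RF power, baseline {intercept:.1f} nm")
```

The slope is the number a process engineer would check against physical intuition. A real VM model would have one such coefficient per sensor feature, typically with Ridge or Lasso regularization.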

Limitations: Linear models fundamentally cannot capture non-linear process behavior. When etch rates saturate at high power, when deposition uniformity depends on complex gas flow interactions, or when multi-step processes create cascading effects, linear models plateau in accuracy. They also cannot capture temporal dependencies — degradation trends across sequential wafer runs are invisible to static linear models.

Best Use Cases: Mature, well-controlled processes with limited parameter interactions; early VM deployments where data is scarce; processes requiring full model interpretability for regulatory or customer compliance.

What Can Deep Learning Achieve for VM?

Deep learning architectures — Convolutional Neural Networks (CNNs), Long Short-Term Memory networks (LSTMs), Transformers, and autoencoders — represent the state of the art in VM accuracy:

Performance Profile: On complex multi-step processes (e.g., predicting final electrical test parameters from 20+ process steps), deep learning models achieve R-squared values of 0.90-0.97 and mean absolute errors of 0.5-2%. For processes with strong temporal dependencies (chamber conditioning effects, target erosion trends), LSTM-based VM models outperform static models by 15-30%.

Data Requirements: Deep learning’s accuracy comes at a cost: training typically requires 1000-5000+ labeled wafers, and models need continuous retraining as processes drift. This data requirement can be prohibitive for low-volume or new processes.

Architecture Options: 1D-CNNs process sensor time-series traces from individual wafer runs, capturing within-run dynamics. LSTMs track wafer-to-wafer temporal trends, detecting degradation patterns. Autoencoders learn compressed representations of normal process behavior, flagging anomalies when reconstruction error exceeds thresholds. Transformer architectures, emerging in semiconductor applications, handle variable-length sequences and multi-tool dependencies elegantly.

Limitations: Deep learning models are black boxes. When a model predicts that a wafer will be out of spec, engineers cannot easily determine which process parameters drove the prediction. This lack of interpretability creates trust issues in production environments where engineers must approve or override model recommendations. Training instability and overfitting risk are additional considerations. Inference compute matters less than it once did, since modern models run in milliseconds on edge hardware.

Best Use Cases: Complex, multi-step processes with abundant training data; processes with strong temporal dependencies; applications where prediction accuracy is more critical than interpretability.

Why Do Hybrid Models Consistently Win in Practice?

Hybrid models that combine domain-driven feature engineering with modern ML algorithms have emerged as the practical sweet spot in production semiconductor VM:

Architecture: The hybrid approach starts with physics-motivated feature engineering — calculating meaningful derived features like plasma power density (RF power / electrode area), gas residence time (chamber volume / total flow rate), Arrhenius temperature terms (exp(-Ea/kT)), and wafer-to-wafer delta features that capture drift. These engineered features encode domain knowledge that raw sensor values do not directly express.

These features then feed into gradient-boosted tree models (XGBoost, LightGBM) or shallow neural networks (2-3 layers). The feature engineering handles the domain complexity, allowing the ML algorithm to focus on learning residual patterns from a pre-structured input space.
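The derived features named above can be sketched as a small function. This is an illustration under stated assumptions: the dictionary keys, units, and the `engineer_features` name are hypothetical, and the residence-time formula is the simple volume-over-flow ratio given in the text, with flow expressed at chamber conditions.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def engineer_features(run, prev_run=None):
    """Derive physics-motivated features from one wafer run.

    `run` and `prev_run` are dicts of per-run sensor summaries.
    All key names are hypothetical placeholders.
    """
    features = {
        # Plasma power density: RF power / electrode area
        "power_density_w_per_cm2": run["rf_power_w"] / run["electrode_area_cm2"],
        # Gas residence time: chamber volume / total flow rate
        "residence_time_s": run["chamber_volume_l"] / run["total_flow_l_per_s"],
        # Arrhenius term: exp(-Ea / kT)
        "arrhenius_term": math.exp(
            -run["activation_energy_ev"] / (BOLTZMANN_EV_PER_K * run["temperature_k"])
        ),
    }
    if prev_run is not None:
        # Wafer-to-wafer delta feature that exposes drift between runs
        features["delta_rf_power_w"] = run["rf_power_w"] - prev_run["rf_power_w"]
    return features


previous = {"rf_power_w": 595.0}
current = {
    "rf_power_w": 600.0,
    "electrode_area_cm2": 300.0,
    "chamber_volume_l": 30.0,
    "total_flow_l_per_s": 0.5,
    "activation_energy_ev": 0.5,
    "temperature_k": 600.0,
}
features = engineer_features(current, previous)
print(features)
```

The resulting dictionary would be one row of the input table handed to a gradient-boosted tree library or a shallow network.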

Performance Profile: Hybrid models consistently achieve R-squared values of 0.88-0.95 across a wide range of semiconductor processes. They require only 200-500 labeled wafers for training, 3-5x fewer than deep learning, while delivering accuracy within 2-5% of deep learning performance.

Practical Advantages: Feature importance from gradient-boosted trees is naturally interpretable — engineers can see which physics-based features drive predictions. The models train in minutes rather than hours, enabling rapid iteration. They are robust to small data shifts and degrade gracefully when process conditions change, rather than failing catastrophically as deep learning models sometimes do.

NeuroBox’s VM engine implements this hybrid approach as its default model architecture. The platform’s automated feature engineering module generates 50+ physics-motivated features from raw SECS/GEM sensor data, then trains ensemble models with built-in cross-validation and feature selection. Process engineers can add custom features based on their domain expertise through a no-code interface.

How Should You Benchmark VM Models Before Production Deployment?

Regardless of model architecture, production VM deployment requires rigorous benchmarking:

Prediction Accuracy (R-squared, MAE, RMSE): The baseline metric. Compare model predictions against physical metrology measurements on a held-out test set. Industry targets vary by application: critical dimension VM typically requires R-squared > 0.90, while film thickness VM may be acceptable at 0.85+.
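The three accuracy metrics can be computed directly from held-out predictions. The sketch below uses only the standard library; `regression_metrics` and the sample values are illustrative, not real fab data.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return R-squared, MAE, and RMSE for paired measurements and predictions."""
    n = len(y_true)
    mean_true = sum(y_true) / n
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)            # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "mae": sum(abs(r) for r in residuals) / n,
        "rmse": math.sqrt(ss_res / n),
    }


# Synthetic held-out set (assumption): film thickness in nm.
measured = [100.0, 102.0, 98.0, 101.0, 99.0]
predicted = [100.5, 101.5, 98.5, 100.5, 99.5]

metrics = regression_metrics(measured, predicted)
print(metrics)
```

Computing all three together is useful because R-squared depends on how much the target varies in the test set, while MAE and RMSE stay in the physical units engineers compare against spec limits.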

Excursion Detection Rate: More important than average accuracy is the model’s ability to detect out-of-spec wafers. Measure true positive rate and false positive rate at your operational threshold. A VM model that is 95% accurate on average but misses 30% of excursions is dangerous. Target: >95% excursion detection with <5% false alarm rate.
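As a rough sketch of the excursion check described above, the function below counts how many truly out-of-spec wafers the model flags and how many in-spec wafers it flags by mistake. The spec limits, sample values, and the `excursion_rates` name are assumptions for illustration.

```python
def excursion_rates(measured, predicted, lower_spec, upper_spec):
    """Return (detection_rate, false_alarm_rate) against fixed spec limits."""

    def out_of_spec(value):
        return value < lower_spec or value > upper_spec

    tp = fn = fp = tn = 0
    for m, p in zip(measured, predicted):
        actual = out_of_spec(m)    # ground truth from physical metrology
        flagged = out_of_spec(p)   # what the VM model would have reported
        if actual and flagged:
            tp += 1
        elif actual:
            fn += 1
        elif flagged:
            fp += 1
        else:
            tn += 1

    detection = tp / (tp + fn) if (tp + fn) else float("nan")
    false_alarm = fp / (fp + tn) if (fp + tn) else float("nan")
    return detection, false_alarm


# Synthetic data (assumption): spec window 95-105 nm.
measured = [100.0, 106.0, 94.0, 101.0, 99.0, 107.0]
predicted = [100.5, 105.5, 96.0, 105.2, 99.5, 106.0]

detection_rate, false_alarm_rate = excursion_rates(measured, predicted, 95.0, 105.0)
print(f"detection {detection_rate:.0%}, false alarms {false_alarm_rate:.0%}")
```

In this toy set the model misses one of three excursions, which is exactly the failure mode that an average-accuracy metric would hide.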

Stability Over Time: Evaluate model accuracy degradation over weeks and months. All models drift as equipment ages and processes shift. Measure how quickly accuracy degrades and how frequently retraining is needed. Stable models maintain acceptable accuracy for 4-8 weeks between retraining cycles.
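One simple way to track this, sketched here under assumptions, is to group prediction errors by week and flag the first week in which mean absolute error crosses a limit. The record layout, the 1.0 nm limit, and both function names are hypothetical.

```python
from collections import defaultdict


def weekly_mae(records):
    """records: iterable of (week_index, measured, predicted) tuples."""
    errors = defaultdict(list)
    for week, measured, predicted in records:
        errors[week].append(abs(measured - predicted))
    return {week: sum(errs) / len(errs) for week, errs in sorted(errors.items())}


def first_week_needing_retrain(mae_by_week, mae_limit):
    """Return the first week whose MAE exceeds the limit, or None."""
    for week, mae in mae_by_week.items():
        if mae > mae_limit:
            return week
    return None


# Synthetic monitoring data (assumption): errors grow as the chamber ages.
records = [
    (1, 100.0, 99.5), (1, 100.0, 100.5),
    (2, 100.0, 99.0), (2, 100.0, 100.5),
    (3, 100.0, 98.0), (3, 100.0, 101.0),
]

mae_by_week = weekly_mae(records)
retrain_week = first_week_needing_retrain(mae_by_week, mae_limit=1.0)
print(mae_by_week, retrain_week)
```

Plotting the weekly values for each candidate model shows how long each one stays inside the acceptable band between retraining cycles.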

Inference Latency: For real-time applications (R2R control using VM predictions), inference must complete in under 100ms. Linear models run in microseconds, gradient-boosted trees in 1-5ms, and deep learning models in 5-50ms on edge hardware.
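Latency is best measured on the target hardware rather than assumed. The sketch below times repeated calls to any prediction function and reports a 99th-percentile latency in milliseconds. `linear_predict` is a stand-in dot-product model, not a real VM model, and `p99_latency_ms` is an illustrative helper name.

```python
import time


def p99_latency_ms(predict, sample, n_calls=1000):
    """Time n_calls invocations of predict(sample); return the 99th percentile in ms."""
    timings = []
    for _ in range(n_calls):
        start = time.perf_counter()
        predict(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    timings.sort()
    index = min(len(timings) - 1, int(0.99 * len(timings)))
    return timings[index]


# Stand-in model (assumption): a 50-feature linear predictor.
WEIGHTS = [0.01 * i for i in range(50)]


def linear_predict(sensor_values):
    return sum(w * v for w, v in zip(WEIGHTS, sensor_values))


sample = [1.0] * 50
latency = p99_latency_ms(linear_predict, sample)
print(f"p99 latency: {latency:.4f} ms (budget: 100 ms)")
```

Reporting a high percentile rather than the mean matters for run-to-run control, where a single slow prediction can delay a wafer.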

Training Data Efficiency: How many labeled wafers are needed to achieve target accuracy? This directly impacts deployment cost and time, especially for processes where metrology data is expensive to acquire.

What Is the Recommended Starting Point for VM Deployment?

For organizations beginning their VM journey, a phased approach minimizes risk:

Phase 1 (Baseline with Hybrid Models): Start with the hybrid approach (physics-based features + gradient-boosted trees). This delivers strong accuracy with moderate data requirements and provides interpretable results that build engineering trust. NeuroBox’s automated model builder generates initial hybrid models within days of data collection.

Phase 2 (Add Temporal Intelligence): Once baseline VM models are deployed and validated, add LSTM or Transformer components for processes showing significant wafer-to-wafer temporal patterns. This typically improves excursion detection by 10-20% for processes with strong chamber conditioning effects.

Phase 3 (Deep Learning for High-Value Applications): Deploy full deep learning architectures for the highest-value VM applications, typically final test prediction models that span the entire process flow. At this stage, you have abundant training data and the accuracy gains justify the complexity.
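The data thresholds quoted earlier in this article can be folded into a rough selection heuristic. The sketch below is only an illustration built from those ranges (50-200 wafers for linear, 200-500 for hybrid, 1000+ for deep learning). It is not NeuroBox's recommendation logic, and the function name, arguments, and the handling of the 500-1000 gap are assumptions.

```python
def suggest_vm_approach(labeled_wafers, multi_step, strong_temporal_effects):
    """Suggest a starting model family from data volume and process traits.

    Thresholds follow the ranges quoted in this article; treat them as
    rules of thumb, not hard limits.
    """
    if labeled_wafers < 50:
        return "collect more labeled data"
    if labeled_wafers < 200:
        return "linear"
    if labeled_wafers >= 1000 and (multi_step or strong_temporal_effects):
        return "deep learning"
    # Covers 200-1000 wafers, and larger sets for simple single-step processes
    return "hybrid"


print(suggest_vm_approach(120, multi_step=False, strong_temporal_effects=False))
print(suggest_vm_approach(300, multi_step=True, strong_temporal_effects=False))
print(suggest_vm_approach(2000, multi_step=True, strong_temporal_effects=True))
```

A heuristic like this only picks a starting point. The benchmarking metrics above should decide whether a more complex model earns its place.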

The VM model landscape is not about choosing the most advanced technology — it is about matching the right modeling approach to your specific process characteristics, data availability, and operational requirements. Start practical, measure rigorously, and evolve the architecture as your data maturity grows.
